Make data quality everyone’s business

Give technical and non-technical users across the organization real-time access to data quality metrics.

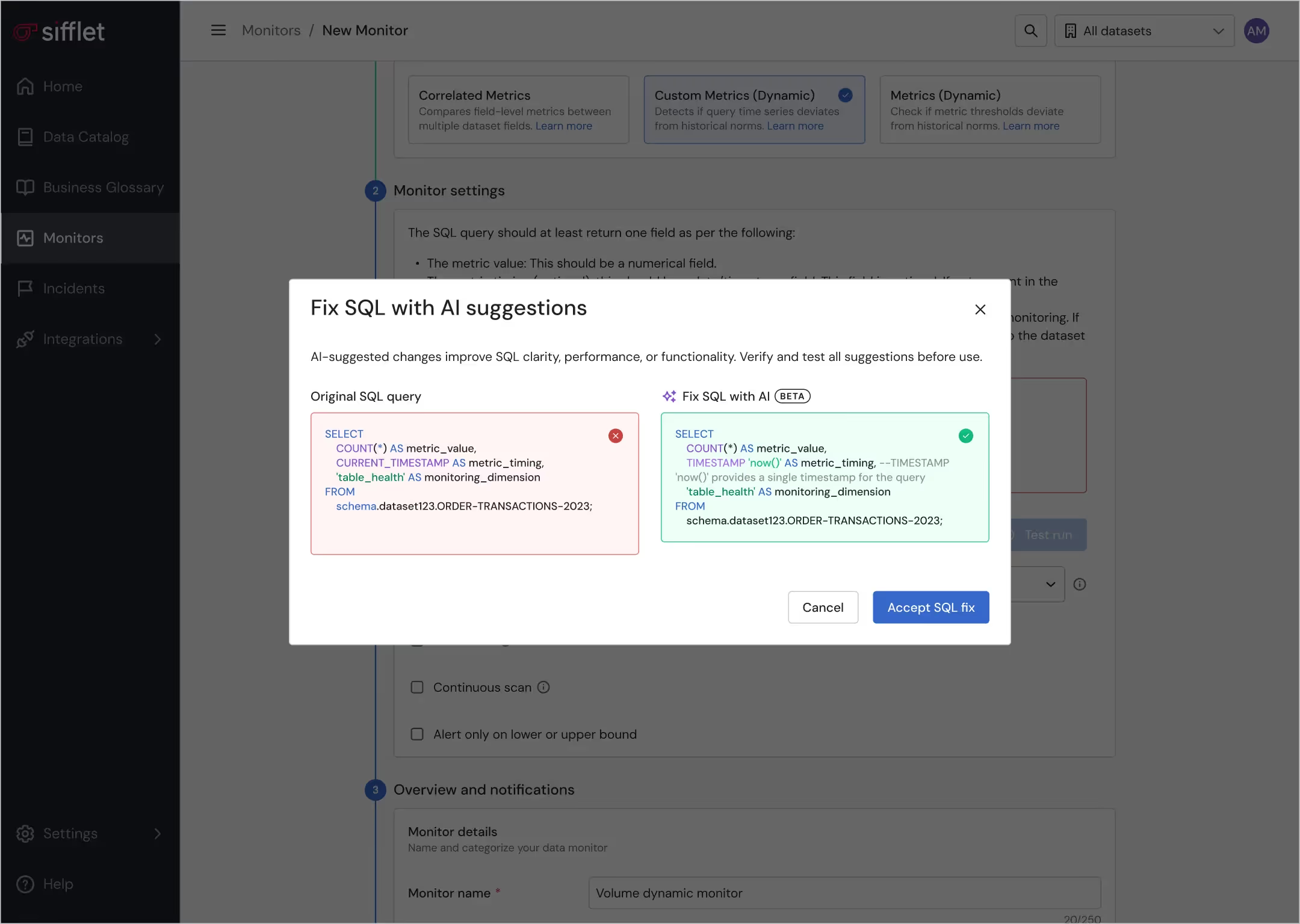

Streamlined monitoring experience

- Collect assets spanning the entire data lifecycle thanks to built-in integrations

- Enable non-technical users to create business-informed monitors with an intuitive UI and the Sifflet AI Assistant

Improved information accessibility

- Check each asset’s health status in the Data Catalog and lineage for lower-risk data self-service

- Get notified of upstream incidents directly in your BI tools via a browser extension

Still have a question in mind?

Contact Us

Frequently asked questions

What kind of data quality monitoring features does Sifflet Insights offer?

Sifflet Insights offers features like real-time alerts, incident tracking, and access to metadata through your Data Catalog. These capabilities support proactive data quality monitoring and streamline root cause analysis when issues arise.

How does Sifflet enhance Apache Airflow for data teams?

Sifflet's integration with Apache Airflow brings powerful data observability features directly into your orchestration workflows. It helps data teams monitor DAG run statuses, understand downstream dependencies, and apply data quality monitoring to catch issues early, ensuring data reliability across the stack.

When should companies start implementing data quality monitoring tools?

Ideally, data quality monitoring should begin as early as possible in your data journey. As Dan Power shared during Entropy, fixing issues at the source is far more efficient than tracking down errors later. Early adoption of observability tools helps you proactively catch problems, reduce manual fixes, and improve overall data reliability from day one.

How does Sifflet help with data lineage tracking?

Sifflet offers detailed data lineage tracking at both the table and field level. You can easily trace data upstream and downstream, which helps avoid unexpected issues when making changes. This transparency is key for data governance and ensuring trust in your analytics pipeline.

What is data governance and why does it matter for modern businesses?

Data governance is a framework of policies, roles, and processes that ensure data is accurate, secure, and used responsibly across an organization. It brings clarity and accountability to data management, helping teams trust the data they use, stay compliant with regulations, and make confident decisions. When paired with data observability tools, governance ensures data remains reliable and actionable at scale.

Why is combining data catalogs with data observability tools the future of data management?

Combining data catalogs with data observability tools creates a holistic approach to managing data assets. While catalogs help users discover and understand data, observability tools ensure that data is accurate, timely, and reliable. This integration supports better decision-making, improves data reliability, and strengthens overall data governance.

How can Sifflet help prevent data disasters like the ones mentioned in the blog?

We built Sifflet to be your data stack's early warning system. Our observability platform offers automated data quality monitoring, anomaly detection, and root cause analysis, so you can identify and resolve issues before they impact your business. Whether you're scaling your pipelines or preparing for AI initiatives, we help you stay in control with confidence.

Can I trust the data I find in the Sifflet Data Catalog?

Absolutely! Thanks to Sifflet’s built-in data quality monitoring, you can view real-time metrics and health checks directly within the Data Catalog. This gives you confidence in the reliability of your data before making any decisions.
