Shared Understanding. Ultimate Confidence. At Scale.

When everyone knows your data is systematically validated for quality, understands where it comes from and how it's transformed, and is aligned on freshness and SLAs, what’s not to trust?

Always Fresh. Always Validated.

No more explaining data discrepancies to the C-suite. Thanks to automatic and systematic validation, Sifflet ensures your data is always fresh and meets your quality requirements. Stakeholders know when data might be stale or interrupted, so they can make decisions with timely, accurate data.

- Automatically detect schema changes, null values, duplicates, or unexpected patterns that could compromise analysis.

- Set and monitor service-level agreements (SLAs) for critical data assets.

- Track when data was last updated and whether it meets freshness requirements.
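
To make the checks above concrete, here is a minimal sketch in plain Python of a freshness check and a simple null and duplicate count. It is illustrative only and is not Sifflet's API: the function names (`check_freshness`, `quality_report`), the row format, and the six-hour threshold in the usage note are all assumptions.

```python
from datetime import datetime, timedelta, timezone


def check_freshness(last_updated, max_age, now=None):
    """Return True if the asset was updated within the allowed window."""
    now = now or datetime.now(timezone.utc)
    return now - last_updated <= max_age


def quality_report(rows, key, required):
    """Count rows with a missing required field and rows repeating a key value."""
    seen = set()
    null_rows = 0
    duplicate_keys = 0
    for row in rows:
        # A row counts as "null" if any required column is missing or None.
        if any(row.get(col) is None for col in required):
            null_rows += 1
        # A row counts as a duplicate if its key value was already seen.
        value = row.get(key)
        if value in seen:
            duplicate_keys += 1
        else:
            seen.add(value)
    return {"null_rows": null_rows, "duplicate_keys": duplicate_keys}
```

For example, `check_freshness(last_updated, timedelta(hours=6))` would flag a table as stale once its last update is more than six hours old, which is one way a freshness SLA could be expressed.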

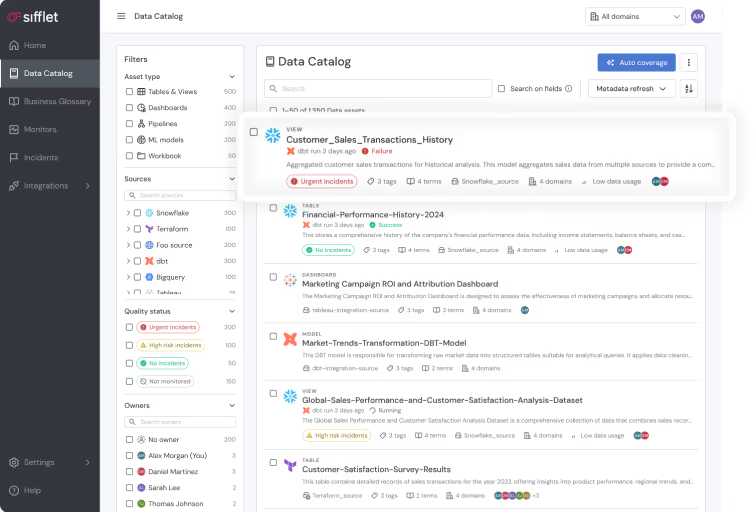

Understand Your Data, Inside and Out

Give data analysts and business users ultimate clarity. Sifflet helps teams understand their data across its whole lifecycle, and gives full context like business definitions, known limitations, and update frequencies, so everyone works from the same assumptions.

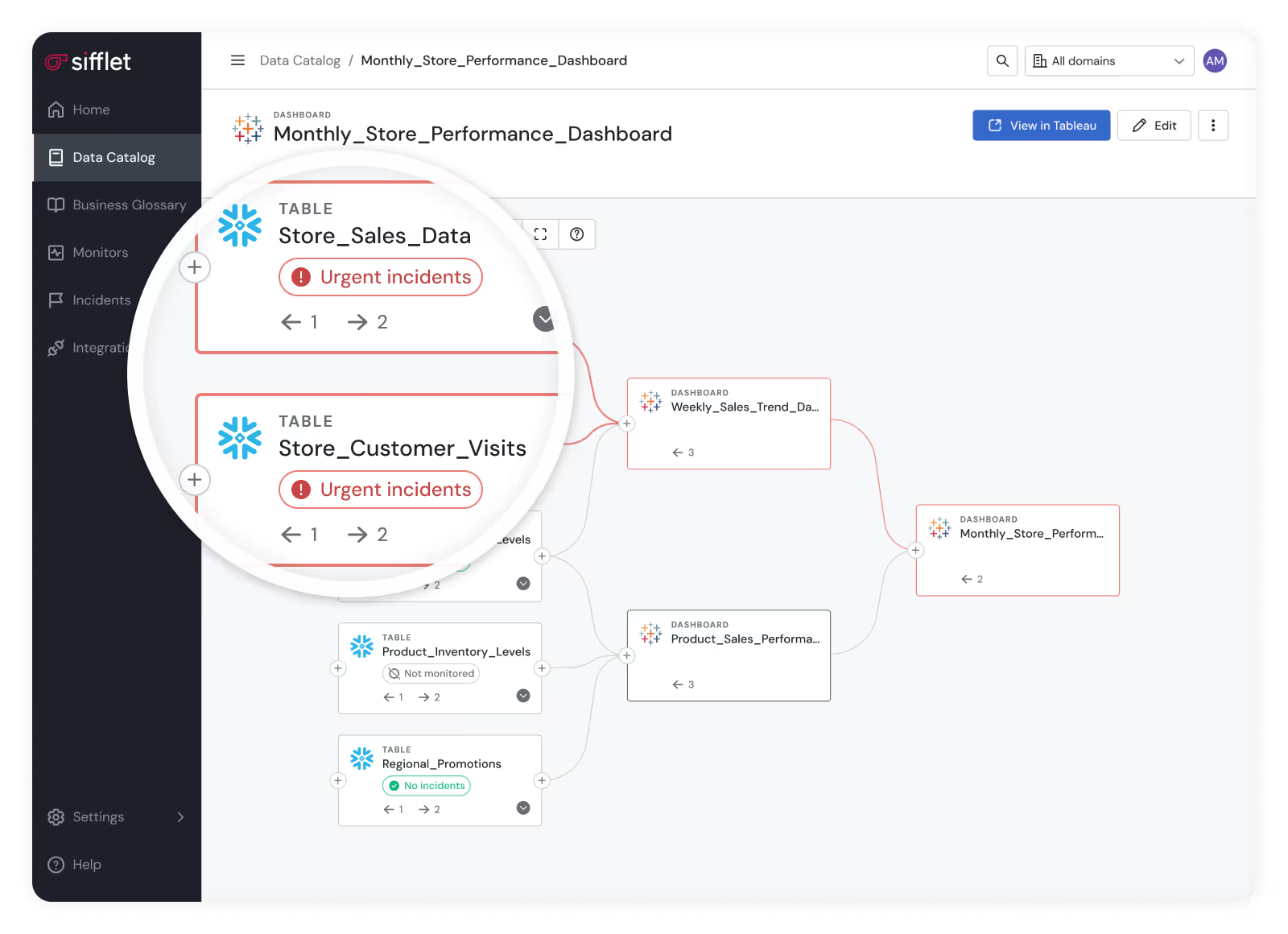

- Create transparency by helping users understand data pipelines, so they always know where data comes from and how it’s transformed.

- Develop a shared understanding of data that prevents misinterpretation and builds confidence in analytics outputs.

- Quickly assess which downstream reports and dashboards are affected.
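
As a rough illustration of how lineage supports that kind of impact assessment, the sketch below walks a lineage graph breadth-first to list everything downstream of a given asset. The graph, the asset names, and the `downstream_assets` helper are hypothetical and do not reflect how Sifflet stores or queries lineage.

```python
from collections import deque


def downstream_assets(lineage, start):
    """Return every asset reachable downstream of `start`, in breadth-first order."""
    seen = {start}
    order = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


# Hypothetical lineage: each asset maps to the assets that read from it.
LINEAGE = {
    "raw.orders": ["staging.orders"],
    "staging.orders": ["marts.revenue", "marts.customers"],
    "marts.revenue": ["dashboard.weekly_revenue"],
    "marts.customers": ["dashboard.churn"],
}

affected = downstream_assets(LINEAGE, "staging.orders")
```

In this example, a problem in `staging.orders` would surface both marts and both dashboards as affected, while `raw.orders` upstream is left out.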

Still have a question in mind?

Contact Us

Frequently asked questions

What types of data lineage should I know about?

There are four main types: technical lineage, business lineage, cross-system lineage, and governance lineage. Each serves a different purpose, from debugging pipelines to supporting compliance. Tools like Sifflet offer field-level lineage for deeper insights, helping teams across engineering, analytics, and compliance understand and trust their data.

How does Sifflet ensure a user-friendly experience for data teams?

We prioritize user research and apply UX principles like Jakob’s Law to design familiar and intuitive workflows. This helps reduce friction for users working with tools like our Sifflet Insights plugin, which brings real-time metrics and data quality monitoring directly into dashboards in BI tools like Looker and Tableau.

How does the updated lineage graph help with root cause analysis?

By merging dbt model nodes with dataset nodes, our streamlined lineage graph removes clutter and highlights what really matters. This cleaner view enhances root cause analysis by letting you quickly trace issues back to their source with fewer distractions and more context.

How can data observability help prevent missed SLAs and unreliable dashboards?

Data observability plays a key role in SLA compliance by detecting issues like ingestion latency, schema changes, or data drift before they impact downstream users. With proper data quality monitoring and real-time metrics, you can catch problems early and keep your dashboards and reports reliable.

How does Sifflet help reduce alert fatigue for data teams?

Sifflet uses AI-powered incident grouping to automatically consolidate related monitor failures into a single incident. By leveraging data lineage tracking and contextual analysis, teams can identify root causes faster and focus on what matters. This approach significantly reduces alert fatigue and improves trust in monitoring systems.

How does data observability fit into a modern data platform?

Data observability is a critical layer of a modern data platform. It helps monitor pipeline health, detect anomalies, and ensure data quality across your stack. With observability tools like Sifflet, teams can catch issues early, perform root cause analysis, and maintain trust in their analytics and reporting.

How does schema evolution impact batch and streaming data observability?

Schema evolution can introduce unexpected fields or data type changes that disrupt both batch and streaming data workflows. With proper data pipeline monitoring and observability tools, you can track these changes in real time and ensure your systems adapt without losing data quality or breaking downstream processes.
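
For a sense of what tracking these changes involves, here is a minimal sketch that compares two schema snapshots and reports added columns, removed columns, and type changes. The `diff_schema` helper and the column-name-to-type dictionaries are assumptions made for illustration, not part of any Sifflet interface.

```python
def diff_schema(old, new):
    """Compare two {column: type} snapshots and report what changed."""
    added = {col: typ for col, typ in new.items() if col not in old}
    removed = {col: typ for col, typ in old.items() if col not in new}
    type_changed = {
        col: (old[col], new[col])
        for col in old
        if col in new and old[col] != new[col]
    }
    return {"added": added, "removed": removed, "type_changed": type_changed}


# Hypothetical snapshots of the same table taken before and after a deploy.
before = {"order_id": "INTEGER", "amount": "FLOAT", "note": "TEXT"}
after = {"order_id": "INTEGER", "amount": "TEXT", "currency": "TEXT"}

changes = diff_schema(before, after)
```

Here the diff would report `currency` as added, `note` as removed, and `amount` as changing from `FLOAT` to `TEXT`, which are the kinds of changes that can break downstream processes.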

What are some signs that our organization might need better data observability?

If your team struggles with delayed dashboards, inconsistent metrics, or unclear data lineage, it's likely time to invest in a data observability solution. At Sifflet, we even created a simple diagnostic to help you assess your data temperature. Whether you're in a 'slow burn' or a 'five alarm fire' state, we can help you improve data reliability and pipeline health.
