Big Data. Big Potential.

Sell data products that meet the most demanding standards of data reliability, quality and health.

Identify Opportunities

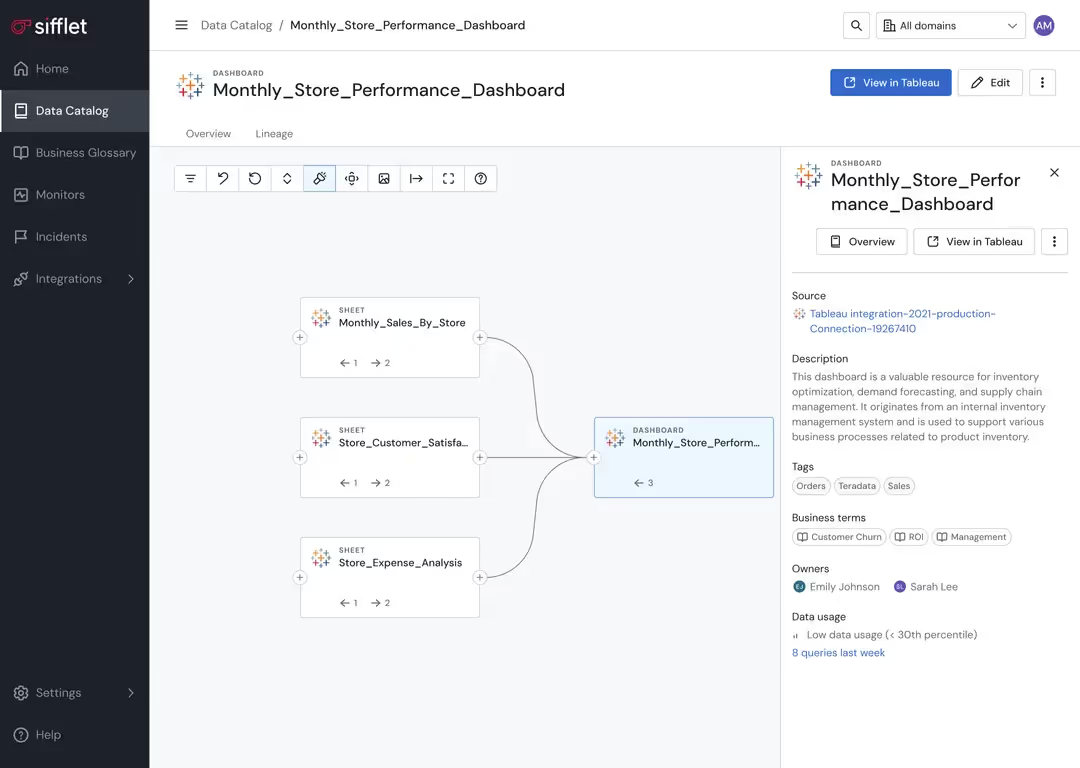

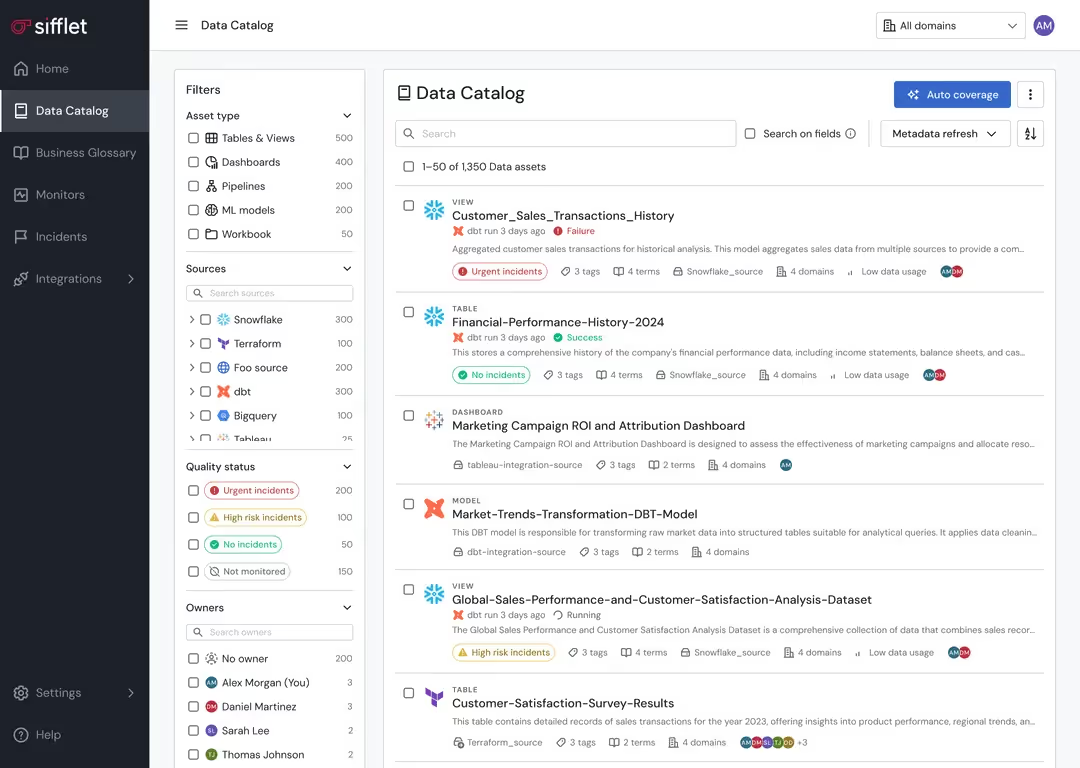

Monetizing data starts with identifying your highest potential data sets. Sifflet can highlight patterns in data usage and quality that suggest monetization potential and help you uncover data combinations that could create value.

- Use usage analytics to dig into data usage patterns and identify high-value data sets

- Determine which data assets are most reliable and complete

Ensure Quality and Operational Excellence

It’s not enough to create a data product. Revenue depends on ensuring the highest levels of reliability and quality. Sifflet ensures quality and operational excellence to protect your revenue streams.

- Reduce the cost of maintaining your data products through automated monitoring

- Prevent and detect data quality issues before customers are impacted

- Empower rapid response to issues that could affect data product value

- Streamline data delivery and sharing processes

Still have a question in mind?

Contact Us

Frequently asked questions

What role does data quality monitoring play in a data catalog?

Data quality monitoring ensures your data is accurate, complete, and consistent. A good data catalog should include profiling and validation tools that help teams assess data quality, which is crucial for maintaining SLA compliance and enabling proactive monitoring.
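As a minimal sketch of what "profiling and validation" can mean in practice, the Python below profiles each column for completeness (null rate) and cardinality, then applies one validation rule that flags columns whose null rate exceeds a threshold. This is not Sifflet's implementation; the function names, the `max_null_rate` parameter, and the sample rows are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ColumnProfile:
    column: str
    null_rate: float      # share of rows where the value is missing
    distinct_count: int   # number of distinct non-null values


def profile_columns(rows: list[dict], columns: list[str]) -> list[ColumnProfile]:
    """Hypothetical profiler: completeness and cardinality statistics per column."""
    total = len(rows)
    profiles = []
    for col in columns:
        values = [row.get(col) for row in rows]
        nulls = sum(1 for v in values if v is None)
        distinct = len({v for v in values if v is not None})
        null_rate = nulls / total if total else 0.0
        profiles.append(ColumnProfile(col, null_rate, distinct))
    return profiles


def failing_columns(profiles: list[ColumnProfile], max_null_rate: float) -> list[str]:
    """Hypothetical validation rule: flag columns whose null rate exceeds the allowed maximum."""
    return [p.column for p in profiles if p.null_rate > max_null_rate]


rows = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": None},
    {"id": 3, "email": "c@example.com"},
    {"id": 4, "email": None},
]
profiles = profile_columns(rows, ["id", "email"])
print(failing_columns(profiles, max_null_rate=0.1))  # ['email']
```

Here half of the `email` values are missing, so the column fails a rule that tolerates at most 10% nulls, while `id` passes.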

Is the Sifflet and Firebolt integration helpful for teams focused on data reliability and governance?

Yes, definitely! The Sifflet and Firebolt integration supports strong data governance and boosts data reliability by enabling data profiling, schema monitoring, and automated validation rules. This ensures your data remains trustworthy and compliant.

What makes Sifflet a strong alternative to Metaplane for enterprise data teams?

Sifflet stands out as a Metaplane alternative because it offers full-stack data observability with field-level lineage, automated root cause analysis, and business context built into every alert. Its AI-powered agents help reduce alert fatigue and guide remediation, making it ideal for complex, fast-scaling environments where data reliability is crucial.

Why is metadata observability so important in an Open Data Stack?

In an Open Data Stack, metadata acts as the new control plane, guiding how different engines interpret and interact with your data. Without active metadata observability, you're at risk of schema drift, catalog mismatches, and invisible data errors. Sifflet helps you stay ahead by continuously monitoring metadata changes and ensuring data reliability across your stack.
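To illustrate what monitoring metadata changes for schema drift involves, here is a minimal sketch that compares two snapshots of a table's schema (column name mapped to type) and reports added, removed, and retyped columns. It assumes the snapshots have already been pulled from a catalog; the snapshot format and the `diff_schemas` name are hypothetical and do not reflect how Sifflet stores or compares metadata.

```python
def diff_schemas(previous: dict[str, str], current: dict[str, str]) -> dict[str, list]:
    """Hypothetical drift check: compare two column-name -> type snapshots of one table."""
    added = sorted(set(current) - set(previous))
    removed = sorted(set(previous) - set(current))
    # Columns present in both snapshots whose declared type changed
    retyped = sorted(
        (col, previous[col], current[col])
        for col in set(previous) & set(current)
        if previous[col] != current[col]
    )
    return {"added": added, "removed": removed, "retyped": retyped}


yesterday = {"order_id": "BIGINT", "amount": "DECIMAL(10,2)", "created_at": "TIMESTAMP"}
today = {"order_id": "BIGINT", "amount": "DOUBLE", "created_at": "TIMESTAMP", "channel": "VARCHAR"}

drift = diff_schemas(yesterday, today)
print(drift)
# {'added': ['channel'], 'removed': [], 'retyped': [('amount', 'DECIMAL(10,2)', 'DOUBLE')]}
```

A silent type change such as `DECIMAL(10,2)` becoming `DOUBLE` is the kind of drift that different engines may interpret differently, which is why it is surfaced separately from added or removed columns.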

How can I detect silent failures in my data pipelines before they cause damage?

Silent failures are tricky, but with the right data observability tools, you can catch them early. Look for platforms that support real-time alerts, schema registry integration, and dynamic thresholding. These features help you monitor for unexpected changes, missing data, or drift in your pipelines. Sifflet, for example, offers anomaly detection and root cause analysis that help you uncover and fix issues before they impact your business.
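Dynamic thresholding can be sketched with one simple, common technique: compare each new observation (for example, a table's daily row count) against a band of the trailing mean plus or minus `k` standard deviations of the previous `window` observations. This is an illustrative stand-in, not Sifflet's anomaly detection model; the function name, `window=7`, `k=3.0`, and the sample counts are assumptions.

```python
import statistics


def dynamic_threshold_anomalies(values: list[float], window: int = 7, k: float = 3.0) -> list[int]:
    """Hypothetical detector: return indices whose value falls outside the
    trailing mean +/- k * population standard deviation of the prior `window` points."""
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mean = statistics.fmean(history)
        std = statistics.pstdev(history)
        lower, upper = mean - k * std, mean + k * std
        if not (lower <= values[i] <= upper):
            anomalies.append(i)
    return anomalies


# Daily row counts; the load on day 8 silently delivered no rows
row_counts = [1000, 1020, 990, 1010, 1005, 995, 1015, 1008, 0, 1012]
print(dynamic_threshold_anomalies(row_counts))  # [8]
```

Because the band is recomputed from recent history, it adapts as volumes grow or shrink, unlike a fixed threshold. One trade-off of this simple version is that an anomaly stays in the window and widens the band for the following days; production systems typically handle seasonality and contaminated history more carefully.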

What practical steps can companies take to build a data-driven culture?

To build a data-driven culture, start by investing in data literacy, aligning goals across teams, and adopting observability tools that support proactive monitoring. Platforms with features like metrics collection, telemetry instrumentation, and real-time alerts can help ensure data reliability and build trust in your analytics.

How can enterprise data teams benefit from implementing a data observability platform?

Great question! A data observability platform helps enterprise teams monitor data quality, detect anomalies in real time, and reduce incident response time. This leads to better decision-making, improved SLA compliance, and optimized cloud costs. Companies like Etam and Nextbite have seen major improvements in reliability and efficiency after adopting observability tools.

What improvements has Sifflet made to incident management workflows?

We’ve introduced Augmented Resolution to help teams group related alerts into a single collaborative ticket, streamlining incident response. Plus, with integrations into your ticketing systems, Sifflet ensures that data issues are tracked, communicated, and resolved efficiently. It’s all part of our mission to boost data reliability and support your operational intelligence.
