Proactive access, quality and control

Empower data teams to detect and address issues proactively by providing them with tools to ensure data availability, usability, integrity, and security.

De-risked data discovery

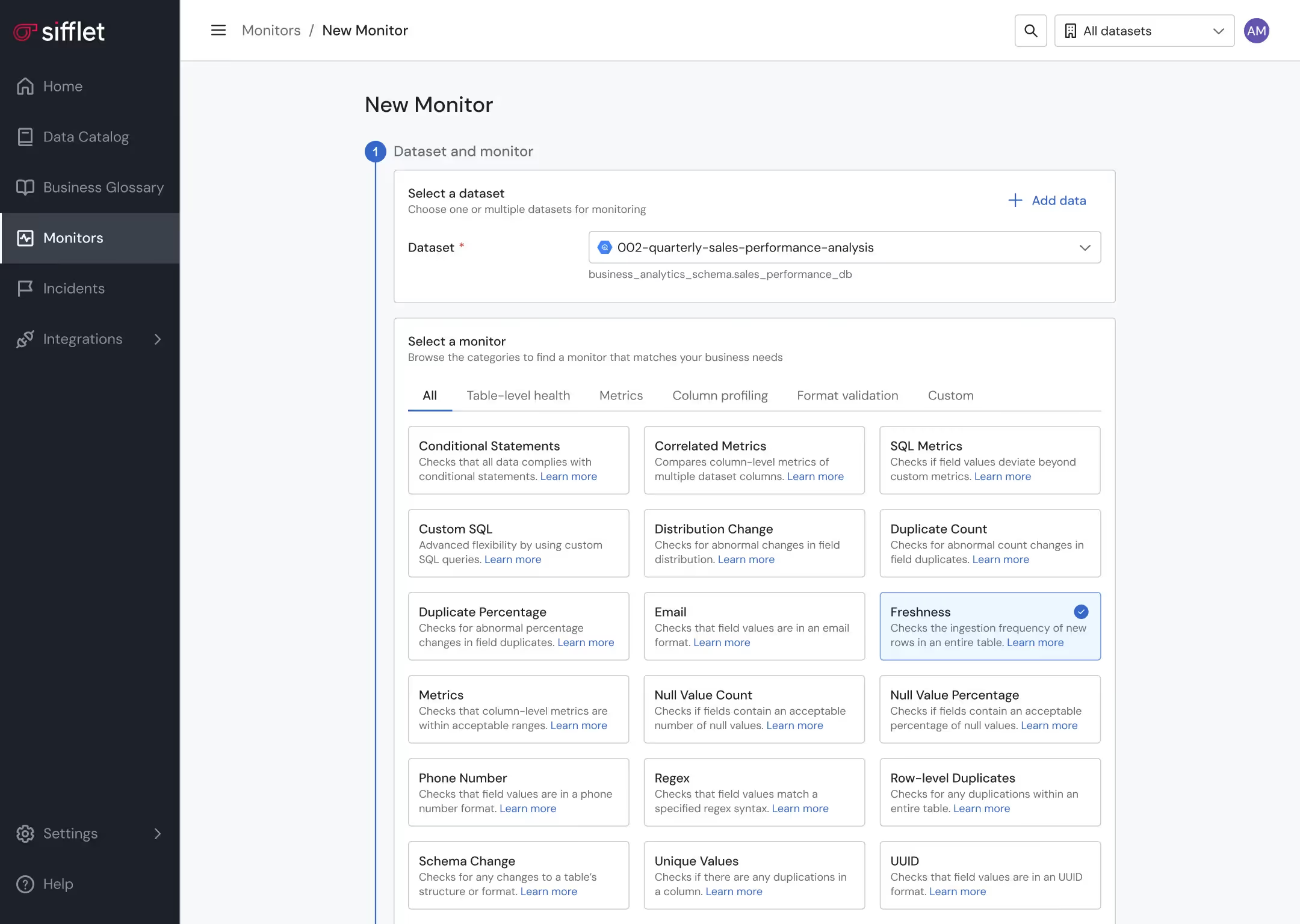

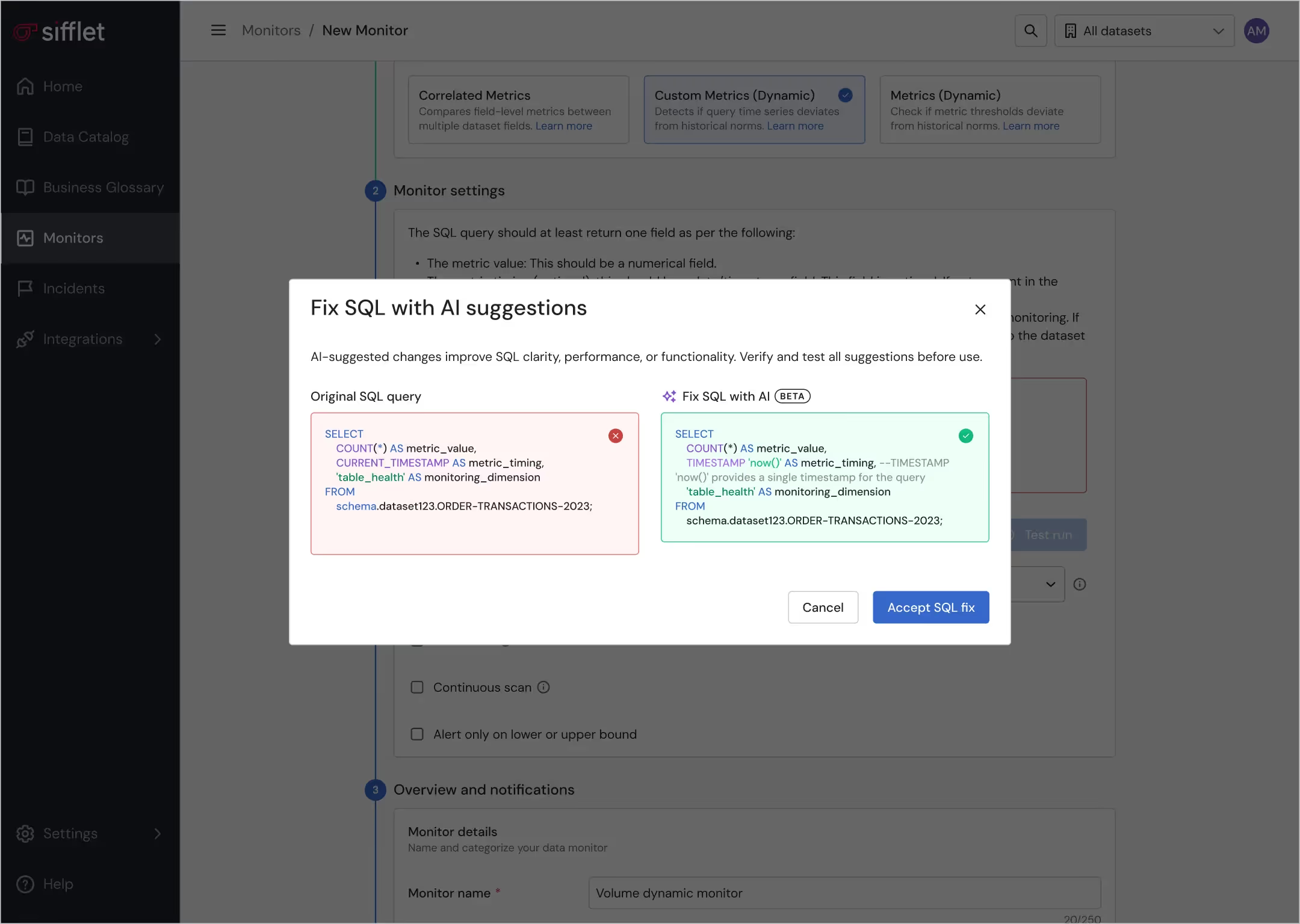

- Ensure data quality proactively with a large library of out-of-the-box (OOTB) monitors and a built-in notification system

- Gain visibility into assets’ documentation and health status in the Data Catalog for safe data discovery

- Establish the official source of truth for key business concepts using the Business Glossary

- Leverage custom tagging to classify assets

Structured data observability platform

- Tailor data visibility for teams by grouping assets in domains that align with the company’s structure

- Define data ownership to improve accountability and smooth collaboration across teams

Secured data management

- Safeguard personally identifiable information (PII) with ML-based PII detection

Still have a question in mind?

Contact Us

Frequently asked questions

What is the difference between data monitoring and data observability?

Great question! Data monitoring is like your car's dashboard—it alerts you when something goes wrong, like a failed pipeline or a missing dataset. Data observability, on the other hand, is like being the driver. It gives you a full understanding of how your data behaves, where it comes from, and how issues impact downstream systems. At Sifflet, we believe in going beyond alerts to deliver true data observability across your entire stack.

What kind of alerts can I expect from Sifflet when using it with Firebolt?

With Sifflet, you’ll receive real-time alerts for data quality issues detected in your Firebolt warehouse. These alerts are powered by anomaly detection and data freshness checks, helping you stay ahead of potential problems.
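To show what anomaly detection on a warehouse table can mean in practice, here is a minimal sketch of one common technique: a z-score test on a daily metric such as row count. It is a generic illustration, not Sifflet's detection model. The function name `is_anomalous`, the 3.0 threshold, and the sample counts are all hypothetical.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, threshold=3.0):
    """Flag `latest` if it lies more than `threshold` standard deviations
    from the mean of `history` (a simple z-score test)."""
    if len(history) < 2:
        return False  # not enough history to estimate the spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu  # perfectly flat history: any change is unusual
    return abs(latest - mu) / sigma > threshold


# Hypothetical daily row counts for one warehouse table
daily_row_counts = [10_120, 9_980, 10_050, 10_210, 9_940, 10_100, 10_030]
print(is_anomalous(daily_row_counts, 10_070))  # False: within normal range
print(is_anomalous(daily_row_counts, 2_300))   # True: sudden drop in volume
```

A fixed z-score threshold is easy to reason about, but it ignores seasonality such as weekday/weekend patterns. A more robust detector would need to account for that.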

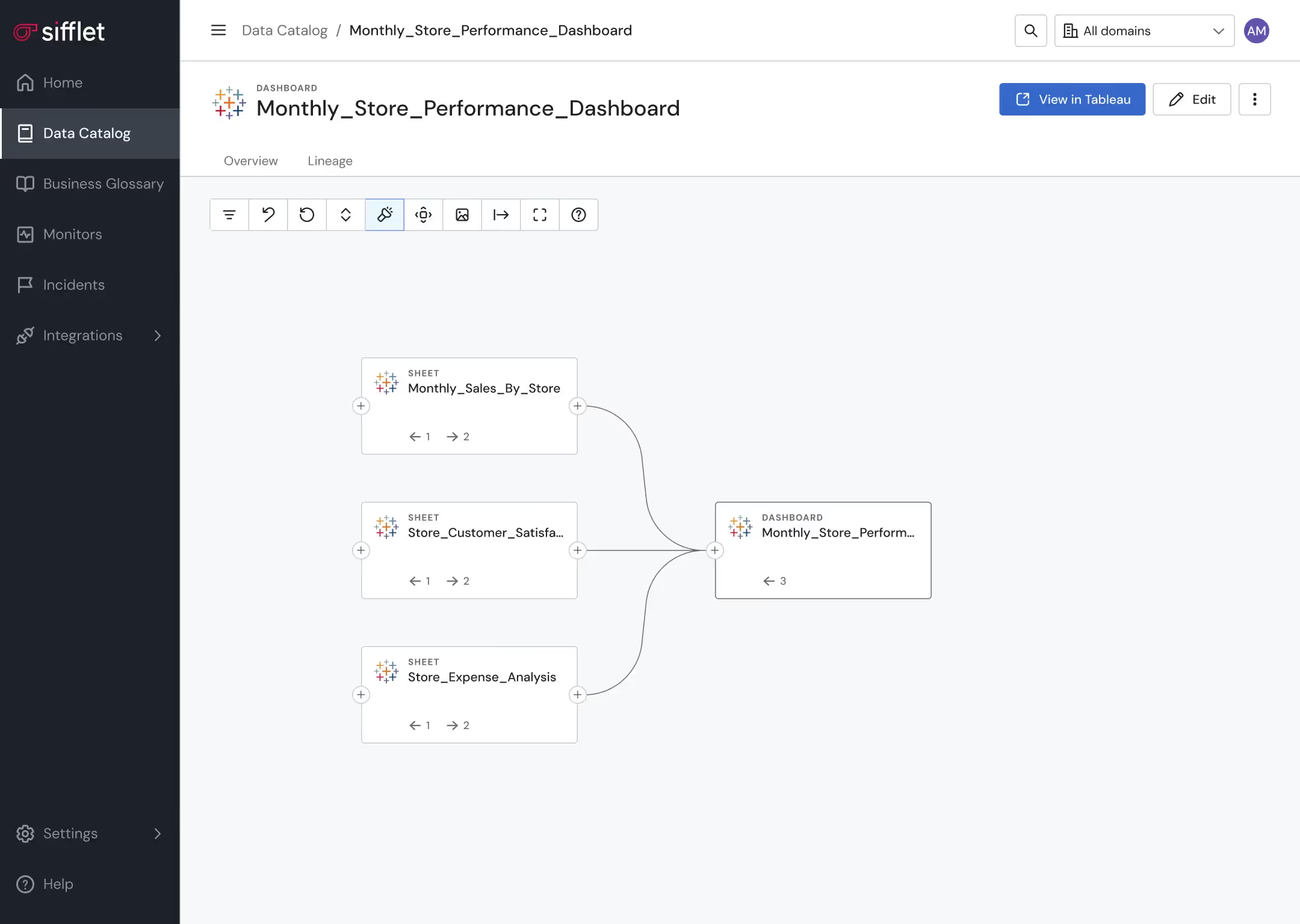

How can data lineage tracking help with root cause analysis?

Data lineage tracking shows how data flows through your systems and how different assets depend on each other. This is incredibly helpful for root cause analysis because it lets you trace issues back to their source quickly. With Sifflet’s lineage capabilities, you can understand both upstream and downstream impacts of a data incident, making it easier to resolve problems and prevent future ones.
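The tracing step can be sketched as a graph walk. This sketch assumes lineage is available as a mapping from each asset to the assets it reads from. The asset names and the `UPSTREAM` graph are hypothetical and do not reflect Sifflet's lineage format. A breadth-first search lists everything upstream of a broken asset. A likely root cause is then a failing upstream asset whose own dependencies are all healthy.

```python
from collections import deque

# Hypothetical lineage: each asset maps to the assets it reads from.
UPSTREAM = {
    "dashboard.revenue": ["mart.revenue_daily"],
    "mart.revenue_daily": ["stg.orders", "stg.payments"],
    "stg.orders": ["raw.orders"],
    "stg.payments": ["raw.payments"],
    "raw.orders": [],
    "raw.payments": [],
}


def upstream_assets(asset, lineage):
    """Return every asset upstream of `asset`, nearest first (breadth-first)."""
    seen, order = set(), []
    queue = deque(lineage.get(asset, []))
    while queue:
        current = queue.popleft()
        if current in seen:
            continue  # shared dependencies are visited once
        seen.add(current)
        order.append(current)
        queue.extend(lineage.get(current, []))
    return order


def root_causes(asset, lineage, failing):
    """Failing upstream assets whose own upstream dependencies are all healthy."""
    return [
        a for a in upstream_assets(asset, lineage)
        if a in failing
        and not any(u in failing for u in upstream_assets(a, lineage))
    ]


failing = {"mart.revenue_daily", "stg.payments", "raw.payments"}
print(root_causes("dashboard.revenue", UPSTREAM, failing))  # ['raw.payments']
```

Walking the reversed graph, where each asset maps to the assets that read from it, would give the downstream impact instead.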

How does Sifflet help scale dbt environments without compromising data quality?

Great question! Sifflet enhances your dbt environment by adding a robust data observability layer that enforces standards, monitors key metrics, and extends data quality monitoring across thousands of models. With centralized metadata, automated monitors, and lineage tracking, Sifflet helps teams avoid common scaling pitfalls such as ownership ambiguity and technical debt.

How does Sifflet help detect and prevent data drift in AI models?

Sifflet is designed to monitor subtle changes in data distributions, which is key to data drift detection. This helps teams catch shifts in data that could degrade AI model performance. By continuously analyzing incoming data and comparing it to historical patterns, Sifflet helps keep your models aligned with relevant, reliable inputs.
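One widely used way to quantify a shift between historical and incoming data is the Population Stability Index (PSI). The sketch below illustrates that generic technique. It is not Sifflet's drift algorithm, and the bin edges and sample values are hypothetical.

```python
import math
from bisect import bisect_right


def bin_proportions(values, edges, eps=1e-6):
    """Share of `values` falling in each bin defined by sorted `edges`."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        # The right-most edge is included in the last bin.
        i = len(counts) - 1 if v == edges[-1] else bisect_right(edges, v) - 1
        if 0 <= i < len(counts):  # values outside the edges are ignored
            counts[i] += 1
    total = max(sum(counts), 1)
    # Floor empty bins at eps so the log term in psi() stays finite.
    return [max(c / total, eps) for c in counts]


def psi(baseline, current, edges):
    """Population Stability Index: sum over bins of (q - p) * ln(q / p)."""
    p = bin_proportions(baseline, edges)
    q = bin_proportions(current, edges)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


edges = [0, 2, 4, 6]
baseline = [1] * 50 + [3] * 30 + [5] * 20  # historical sample
current = [1] * 20 + [3] * 30 + [5] * 50   # incoming sample, shifted upward
print(round(psi(baseline, baseline, edges), 6))  # 0.0
print(round(psi(baseline, current, edges), 2))   # 0.55
```

A common rule of thumb reads a PSI below 0.1 as little shift and a PSI above 0.25 as a significant shift. Thresholds are best tuned per feature, however.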

How does Sifflet help with end-to-end data observability?

Sifflet enhances end-to-end data observability by allowing you to declare any asset in your data stack, including custom applications and scripts. This ensures full visibility into your data pipelines and supports comprehensive data lineage tracking and root cause analysis.

How does Sifflet’s Freshness Monitor scale across large data environments?

Sifflet’s Freshness Monitor is designed to scale effortlessly. Thanks to our dynamic monitoring mode and continuous scan feature, you can monitor thousands of data assets without manually setting schedules. It’s a smart way to implement data pipeline monitoring across distributed systems and ensure SLA compliance at scale.
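Sifflet's dynamic mode is not described in detail here. The following is therefore only a generic sketch of how hand-set schedules can be avoided: infer a table's usual update cadence from its past load times, then flag the table as stale when the latest update is overdue. The function names, the median-gap heuristic, and the 1.5x tolerance are hypothetical.

```python
from datetime import datetime, timedelta
from statistics import median


def expected_interval(update_times):
    """Median gap between consecutive updates, taken as the usual cadence."""
    ts = sorted(update_times)
    return median(b - a for a, b in zip(ts, ts[1:]))


def is_stale(update_times, now, tolerance=1.5):
    """True when the latest update is older than `tolerance` x the usual cadence."""
    if len(update_times) < 2:
        return False  # not enough history to infer a cadence
    return now - max(update_times) > expected_interval(update_times) * tolerance


base = datetime(2024, 1, 1)
updates = [base + timedelta(hours=h) for h in range(6)]  # hourly loads, last at 05:00
print(is_stale(updates, base + timedelta(hours=6, minutes=10)))  # False: 70 min late < 90 min
print(is_stale(updates, base + timedelta(hours=8)))              # True: 3 h since last load
```

Using the median gap rather than the mean keeps one unusually late load from stretching the expected cadence.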

How does Sifflet's Data Sharing feature help with enforcing data governance policies?

Great question! Sifflet's Data Sharing provides access to rich metadata about your data assets, including tags, owners, and monitor configurations. By making this available in your own data warehouse, you can set up automated checks to ensure compliance with your governance standards. It's a powerful way to implement scalable data governance and reduce manual audits using our observability platform.
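As an illustration of such an automated check, the sketch below runs SQL policy rules against a metadata table. An in-memory SQLite database stands in for the warehouse. The `asset_metadata` table, its columns, and both rules are hypothetical; the actual schema of Sifflet's shared metadata may differ.

```python
import sqlite3

# In-memory SQLite stands in for a warehouse; the schema is hypothetical.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE asset_metadata (
    asset_name    TEXT PRIMARY KEY,
    owner         TEXT,
    tags          TEXT,     -- comma-separated, e.g. 'pii,tier1'
    monitor_count INTEGER
);
INSERT INTO asset_metadata VALUES
    ('mart.revenue_daily', 'finance-team', 'tier1', 4),
    ('stg.customers', NULL, 'pii', 0),
    ('raw.events', 'platform-team', '', 0);
""")

# Two hypothetical policies: every asset has an owner,
# and every asset tagged 'pii' has at least one monitor.
POLICY_SQL = """
SELECT asset_name, 'missing owner' AS violation
FROM asset_metadata
WHERE owner IS NULL
UNION ALL
SELECT asset_name, 'pii asset without monitor'
FROM asset_metadata
WHERE ',' || tags || ',' LIKE '%,pii,%' AND monitor_count = 0
ORDER BY asset_name, violation
"""


def find_violations(connection):
    """Return (asset_name, violation) rows that break a policy."""
    return connection.execute(POLICY_SQL).fetchall()


for asset, violation in find_violations(conn):
    print(f"{asset}: {violation}")
```

Keeping each rule as its own `SELECT` in a `UNION ALL` lets an asset that breaks several policies be reported once per policy. New rules can then be added without touching existing ones.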
