Cost-efficient data pipelines

Pinpoint cost inefficiencies and anomalies thanks to full-stack data observability.

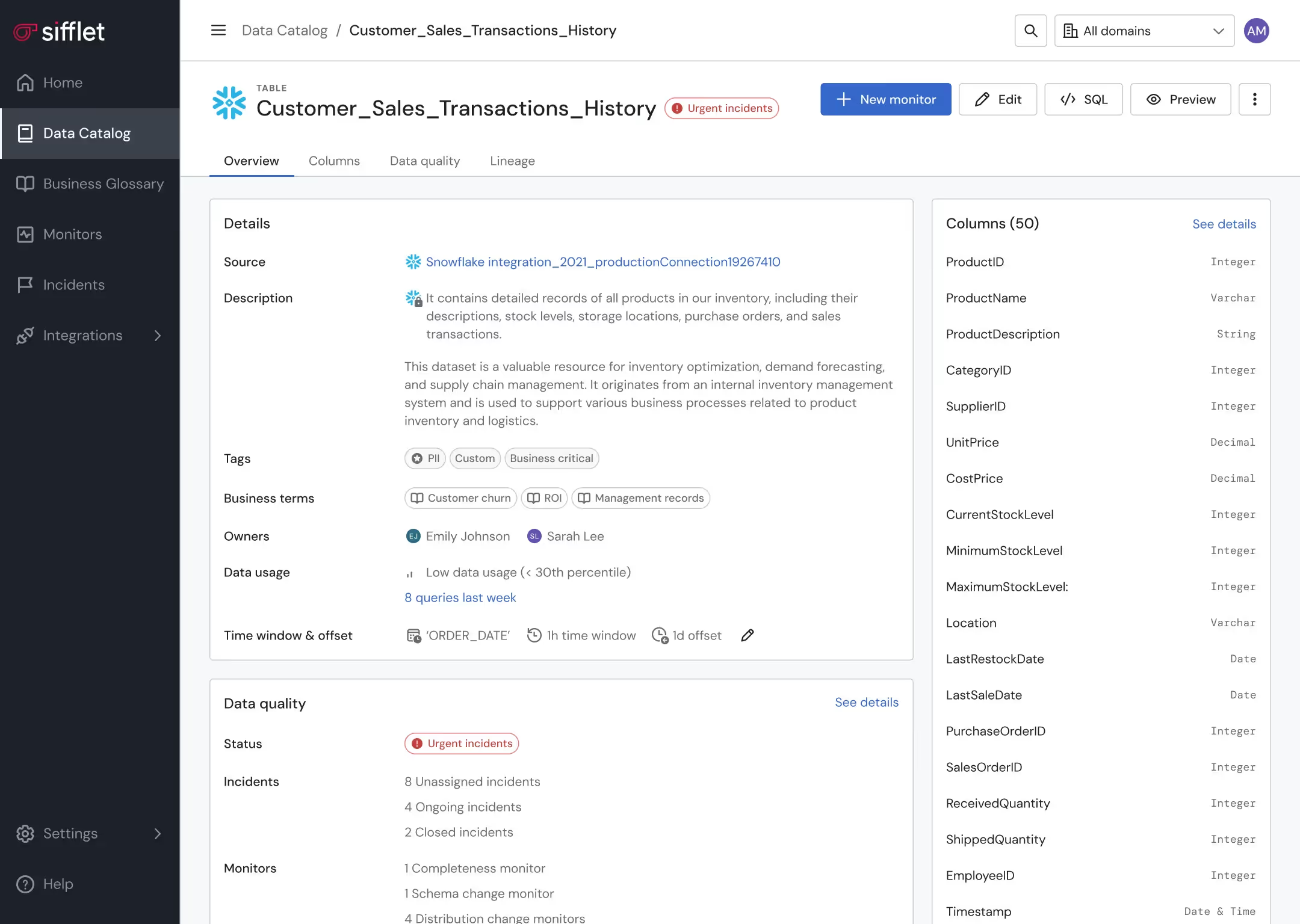

Data asset optimization

- Leverage lineage and Data Catalog to pinpoint underutilized assets

- Get alerted on unexpected behaviors in data consumption patterns

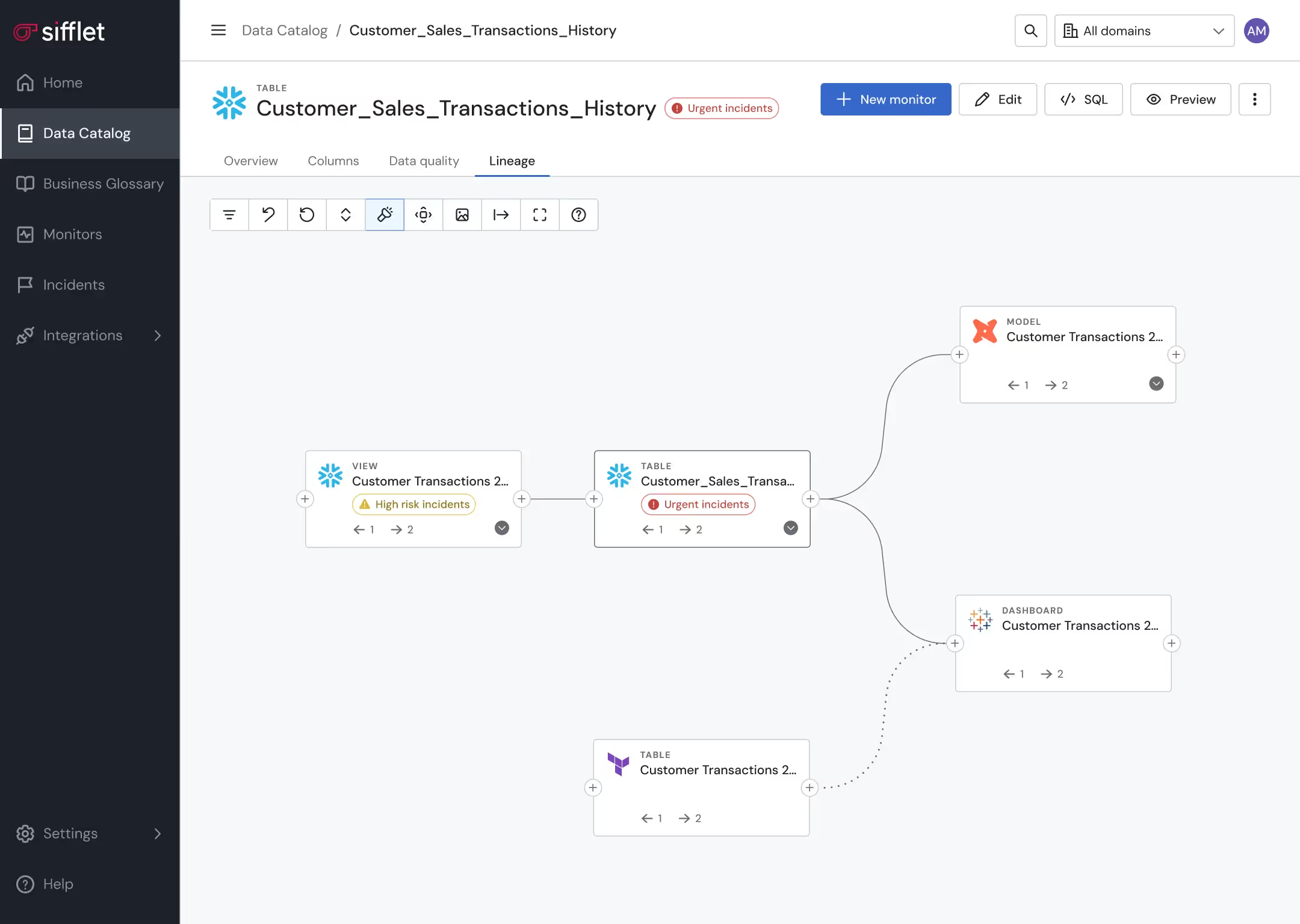

Proactive data pipeline management

Stop pipelines from running when a data quality anomaly is detected
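As a rough illustration of the idea, a pipeline run can be gated behind a set of quality checks. This is a minimal sketch, not Sifflet's API: `CheckResult` and `run_if_healthy` are hypothetical names, and the checks are plain callables standing in for whatever monitors you actually run.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class CheckResult:
    """Outcome of one data quality check (hypothetical structure)."""
    name: str
    passed: bool


def run_if_healthy(
    checks: Iterable[Callable[[], CheckResult]],
    pipeline: Callable[[], None],
) -> list[str]:
    """Run `pipeline` only when every check passes.

    Returns the names of the failed checks; an empty list means the
    pipeline was executed.
    """
    failed = [result.name for result in (check() for check in checks) if not result.passed]
    if not failed:
        pipeline()
    return failed
```

Returning the failed check names rather than raising keeps the gate easy to plug into an orchestrator, which can then decide whether to skip, retry, or alert.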

Still have a question in mind?

Contact Us

Frequently asked questions

How can I measure whether my data is trustworthy?

Great question! To measure data quality, you can track key metrics like accuracy, completeness, consistency, relevance, and freshness. These indicators help you evaluate the health of your data and are often part of a broader data observability strategy that ensures your data is reliable and ready for business use.
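Two of these metrics are straightforward to compute by hand. The sketch below is illustrative only: it assumes rows are plain dictionaries and that you know when a table was last updated; the function names `completeness` and `is_fresh` are made up for this example.

```python
from datetime import datetime, timedelta, timezone


def completeness(rows, column):
    """Share of rows where `column` is present and not None.

    An empty input is treated as fully complete (1.0); that is a
    convention chosen for this sketch, not a standard.
    """
    rows = list(rows)
    if not rows:
        return 1.0
    filled = sum(1 for row in rows if row.get(column) is not None)
    return filled / len(rows)


def is_fresh(last_updated, max_age, now=None):
    """True when the data was updated within `max_age` of `now`."""
    now = now or datetime.now(timezone.utc)
    return now - last_updated <= max_age
```

For example, a table whose `email` column is filled in two rows out of four has a completeness of 0.5, and a table last updated three hours ago fails a one-hour freshness threshold.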

What is SQL Table Tracer and how does it help with data observability?

SQL Table Tracer (STT) is a lightweight library that extracts table-level lineage from SQL queries. It plays a key role in data observability by identifying upstream and downstream tables, making it easier to understand data dependencies and track changes across your data pipelines.
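To make "table-level lineage" concrete, here is a deliberately naive sketch of the concept. It is not STT's implementation or API: `table_lineage` is a hypothetical function that uses regular expressions, so it will miss or misread CTEs, `IF NOT EXISTS` clauses, quoted identifiers, and expressions such as `EXTRACT(day FROM ts)`. A real tool needs a proper SQL parser.

```python
import re

# Tables written to by the statement.
_TARGET = re.compile(
    r"\b(?:insert\s+into|create\s+(?:or\s+replace\s+)?table)\s+([\w.]+)",
    re.IGNORECASE,
)
# Tables read by the statement.
_SOURCE = re.compile(r"\b(?:from|join)\s+([\w.]+)", re.IGNORECASE)


def table_lineage(sql):
    """Return upstream (read) and downstream (written) tables for one statement."""
    targets = {name.lower() for name in _TARGET.findall(sql)}
    sources = {name.lower() for name in _SOURCE.findall(sql)} - targets
    return {"upstream": sorted(sources), "downstream": sorted(targets)}
```

For a statement like `INSERT INTO analytics.daily_orders SELECT ... FROM raw.orders o JOIN raw.customers c ...`, the sketch reports `raw.orders` and `raw.customers` as upstream and `analytics.daily_orders` as downstream.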

How does data observability help control cloud costs?

Data observability shines a light on hidden inefficiencies like redundant queries or unused pipelines. By using observability to track resource utilization and detect anomalies in compute usage, one financial services firm cut their Snowflake spend by 40%. It turns cloud cost management from guesswork into a data-driven process.
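One simple way to flag anomalies in compute usage is a z-score over daily consumption. The sketch below assumes you already export daily usage figures (for example, warehouse credits) as a list of numbers; `usage_anomalies` and its default threshold are illustrative choices, not a recommended production detector.

```python
from statistics import mean, stdev


def usage_anomalies(daily_usage, threshold=2.0):
    """Return the indexes of days whose usage deviates from the mean
    by more than `threshold` sample standard deviations."""
    if len(daily_usage) < 2:
        return []
    mu = mean(daily_usage)
    sigma = stdev(daily_usage)
    if sigma == 0:
        return []  # perfectly flat usage: nothing to flag
    return [i for i, value in enumerate(daily_usage) if abs(value - mu) / sigma > threshold]
```

A single spike inflates both the mean and the standard deviation, so short series need a modest threshold; comparing each day against a trailing window is a common refinement.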

Can data observability improve collaboration across data teams?

Absolutely! With shared visibility into data flows and transformations, observability platforms foster better communication between data engineers, analysts, and business users. Everyone can see what's happening in the pipeline, which encourages ownership and teamwork around data reliability.

How does reverse ETL improve data reliability and reduce manual data requests?

Reverse ETL automates the syncing of data from your warehouse to business apps, helping reduce the number of manual data requests across teams. This improves data reliability by ensuring consistent, up-to-date information is available where it’s needed most, while also supporting SLA compliance and data automation efforts.

What’s new in Sifflet’s data quality monitoring capabilities?

We’ve rolled out several powerful updates to help you monitor data quality more effectively. One highlight is our new referential integrity monitor, which ensures logical consistency between tables, like verifying that every order has a valid customer ID. We’ve also enhanced our Data Quality as Code framework, making it easier to scale monitor creation with templates and for-loops.
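The order and customer example boils down to an anti-join. The sketch below shows the underlying check with Python's built-in `sqlite3` module; the `orders` and `customers` tables and the `orphaned_orders` helper are assumptions made for illustration and do not reflect how the Sifflet monitor is configured or implemented.

```python
import sqlite3


def orphaned_orders(conn):
    """Return ids of orders whose customer_id has no matching customer.

    Orders with a NULL customer_id are reported as well, because the
    LEFT JOIN finds no match for them.
    """
    query = """
        SELECT o.id
        FROM orders AS o
        LEFT JOIN customers AS c ON c.id = o.customer_id
        WHERE c.id IS NULL
        ORDER BY o.id
    """
    return [row[0] for row in conn.execute(query)]


# Small in-memory dataset: order 12 points at a customer that does not exist.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER);
    INSERT INTO customers (id) VALUES (1), (2);
    INSERT INTO orders (id, customer_id) VALUES (10, 1), (11, 2), (12, 3);
""")
```

Calling `orphaned_orders(conn)` on this dataset returns only order 12, the one row that breaks referential integrity.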

Why is data lineage tracking important for governance in a hybrid architecture?

Data lineage tracking provides transparency into how data moves and transforms across systems. In hybrid architectures, it helps enforce governance by showing where data comes from, who owns it, and how changes impact downstream consumers, making compliance and audit logging much easier.

What makes Sifflet a strong alternative to Anomalo for data observability?

Sifflet offers end-to-end data observability that goes beyond anomaly detection. It monitors data pipelines, tracks field-level data lineage, and provides full context around incidents. With AI agents and real-time metrics, Sifflet helps teams understand root causes and business impact, not just surface-level issues.
