Coverage without compromise.

Grow monitoring coverage intelligently as your stack scales, and do more with fewer resources thanks to tooling that reduces maintenance burden, improves the signal-to-noise ratio, and helps you understand impact across interconnected systems.

Don’t Let Scale Stop You

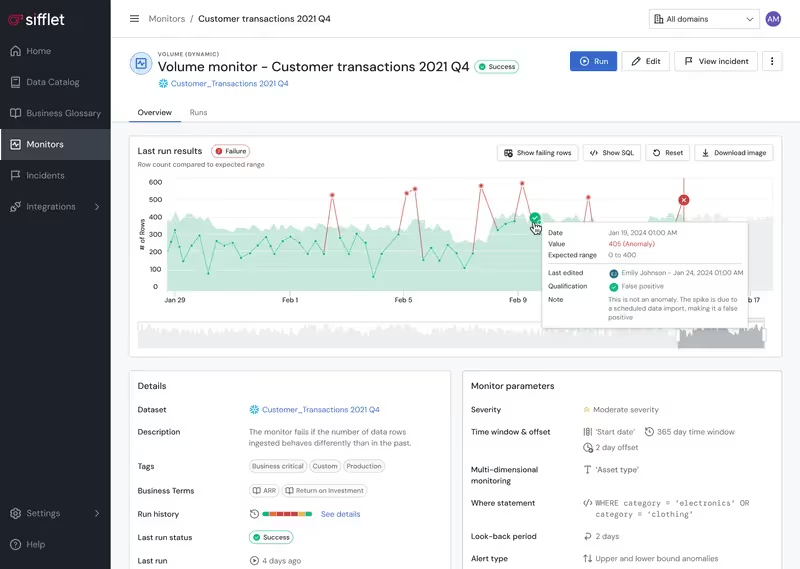

As your stack and data assets scale, so do monitors. Keeping rules updated becomes a full-time job, and tribal knowledge about monitors gets scattered, so teams struggle to sunset obsolete monitors while adding new ones. No more with Sifflet.

- Optimize monitoring coverage and minimize noise with AI-powered suggestions and supervision that adapt dynamically

- Set up and maintain monitors programmatically with Data Quality as Code (DQaC)

- Automate monitor creation and updates as your data changes

- Reduce maintenance overhead with centralized monitor management
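To illustrate the general "monitoring as code" idea, here is a minimal sketch of declarative reconciliation. Monitors are declared in version-controlled code, then diffed against what is currently deployed, so new monitors are created, changed ones updated, and obsolete ones sunset in one pass. The `MonitorSpec` fields, the check strings, and the `plan` function are hypothetical and are not Sifflet's actual DQaC format or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MonitorSpec:
    # Hypothetical monitor definition (not Sifflet's DQaC schema)
    name: str
    table: str
    check: str  # illustrative check string, e.g. "not_null:order_id"


def plan(desired, deployed):
    """Diff declared monitors against deployed ones and return what to change."""
    want = {m.name: m for m in desired}
    have = {m.name: m for m in deployed}
    return {
        # Declared in code but not deployed yet
        "create": sorted(n for n in want if n not in have),
        # Deployed, but the definition in code has changed
        "update": sorted(n for n in want if n in have and want[n] != have[n]),
        # Deployed but no longer declared: candidates to sunset
        "delete": sorted(n for n in have if n not in want),
    }


desired = [
    MonitorSpec("orders_not_null", "orders", "not_null:order_id"),
    MonitorSpec("orders_fresh", "orders", "freshness:24h"),
]
deployed = [
    MonitorSpec("orders_not_null", "orders", "not_null:id"),
    MonitorSpec("legacy_row_count", "orders_v1", "row_count"),
]
print(plan(desired, deployed))
# {'create': ['orders_fresh'], 'update': ['orders_not_null'], 'delete': ['legacy_row_count']}
```

Keeping the desired state in code means sunsetting a monitor is just deleting its declaration and reviewing the resulting plan, instead of relying on tribal knowledge of what is still in use.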

Get Clear and Consistent

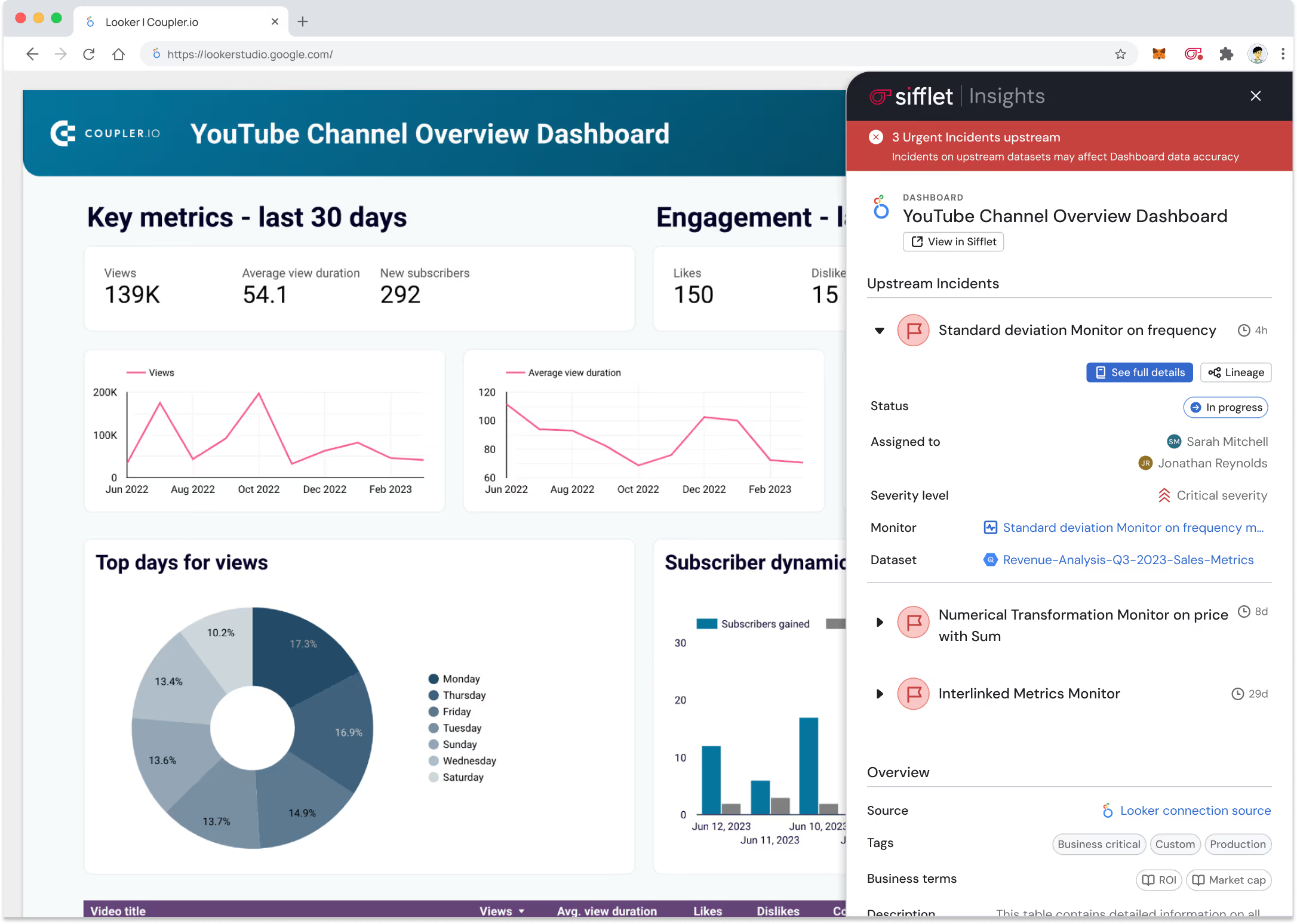

Maintaining consistent monitoring practices across tools, platforms, and internal teams that work across different parts of the stack isn’t easy. Sifflet makes it a breeze.

- Set up consistent alerting and response workflows

- Benefit from unified monitoring across your platforms and tools

- Map system dependencies automatically to see how components relate and gain end-to-end visibility across the entire data pipeline

Still have a question in mind?

Contact Us

Frequently asked questions

What future observability goals has Carrefour set?

Looking ahead, Carrefour plans to expand monitoring to more than 1,500 tables, integrate AI-driven anomaly detection, and implement data contracts and SLA monitoring to further strengthen data governance and accountability.

Why is using WHERE instead of HAVING so important for performance?

When a filter condition doesn't involve an aggregate (for example, when you aren't filtering on the result of a GROUP BY), using WHERE instead of HAVING is crucial: WHERE filters rows before grouping and aggregation, while HAVING filters only afterward. Filtering earlier reduces the amount of data the query processes, which improves query speed and supports better metrics collection in your observability platform.
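A small illustration using Python's built-in `sqlite3` module and a hypothetical `sales` table. Both queries return the same rows, but the WHERE version discards non-EU rows before grouping. The HAVING version groups and sums every region first, then throws most of those groups away.

```python
import sqlite3

# In-memory database with a hypothetical sales table
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount INTEGER)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?)",
    [("EU", 100), ("EU", 50), ("US", 200), ("APAC", 75)],
)

# WHERE: non-EU rows are filtered out before GROUP BY, so only EU rows are summed
where_sql = "SELECT region, SUM(amount) FROM sales WHERE region = 'EU' GROUP BY region"

# HAVING: every region is grouped and summed, then non-EU groups are discarded
having_sql = "SELECT region, SUM(amount) FROM sales GROUP BY region HAVING region = 'EU'"

where_rows = conn.execute(where_sql).fetchall()
having_rows = conn.execute(having_sql).fetchall()
print(where_rows)   # [('EU', 150)]
print(having_rows)  # [('EU', 150)]
conn.close()
```

The results are identical, so the difference is purely in how much work happens before the filter. Some query optimizers can push a non-aggregate HAVING predicate down into WHERE on their own, so the real-world speedup depends on the engine. Writing the filter in WHERE makes the intent explicit either way.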

How is Sifflet using AI to improve data observability?

We're leveraging AI to make data observability smarter and more efficient. Our AI agent automates monitor creation and provides actionable insights for anomaly detection and root cause analysis. It's all about reducing manual effort while boosting data reliability at scale.

Is Sifflet's Data Sharing compatible with cloud data platforms like Snowflake or BigQuery?

Yes, it is! Sifflet currently supports Data Sharing to Snowflake, BigQuery, and S3, with more destinations on the way. This makes it easy to integrate Sifflet into your cloud data observability strategy and leverage your existing infrastructure for deeper insights and proactive monitoring.

Will dbt Impact Analysis be available for other version control tools?

Yes! While it currently supports GitHub and GitLab, Sifflet is actively working on bringing dbt Impact Analysis to Bitbucket. This expansion ensures broader coverage and supports more teams in achieving better data governance and observability.

How does Sifflet help with monitoring data distribution?

Sifflet makes distribution monitoring easy by using statistical profiling to learn what 'normal' looks like in your data. It then alerts you when patterns drift from those baselines. This helps you maintain SLA compliance and avoid surprises in dashboards or ML models. Plus, it's all automated within our data observability platform so you can focus on solving problems, not just finding them.
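This page doesn't describe how Sifflet's statistical profiling works internally. The sketch below therefore swaps in one common, generic technique for spotting distribution drift: the population stability index (PSI). PSI buckets a baseline sample and a current sample using the same cut points and measures how far the bucket shares have moved. The function names, bucket edges, and the 0.2 threshold are illustrative assumptions. The 0.2 value is a widely used rule of thumb, not a Sifflet default.

```python
import math
from bisect import bisect_right


def bucket_shares(values, edges):
    """Share of values in each of len(edges) + 1 buckets defined by sorted cut points."""
    if not values:
        raise ValueError("need at least one value to profile")
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def psi(baseline, current, edges, eps=1e-6):
    """Population stability index; eps avoids log(0) for empty buckets."""
    p = bucket_shares(baseline, edges)  # what "normal" looked like
    q = bucket_shares(current, edges)   # what the data looks like now
    return sum((qi - pi) * math.log((qi + eps) / (pi + eps)) for pi, qi in zip(p, q))


def drifted(baseline, current, edges, threshold=0.2):
    """Flag drift when PSI exceeds a (hypothetical) alert threshold."""
    return psi(baseline, current, edges) > threshold


edges = [10, 20, 30]
baseline = [5, 15, 25, 35] * 25  # evenly spread across the four buckets
shifted = [35] * 100             # everything has moved into the top bucket
print(drifted(baseline, baseline, edges))  # False
print(drifted(baseline, shifted, edges))   # True
```

In practice the baseline would be learned from a rolling window of historical data, and the threshold tuned per column to balance sensitivity against alert noise.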

How can business teams benefit from using Sifflet Insights?

Business teams can access data quality insights directly within their BI dashboards, reducing their reliance on data engineers. This democratizes data observability and empowers teams to make confident, data-driven decisions with full transparency into data lineage and reliability.

How does Sifflet’s observability platform help reduce alert fatigue?

We hear this a lot — too many alerts, not enough clarity. At Sifflet, we focus on intelligent alerting by combining metadata, data lineage tracking, and usage patterns to prioritize what really matters. Instead of just flagging that something broke, our platform tells you who’s affected, why it matters, and how to fix it. That means fewer false positives and more actionable insights, helping you cut through the noise and focus on what truly impacts your business.
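To make the idea of "who's affected" concrete, here is a minimal sketch that ranks alerts by downstream impact. It walks a lineage graph breadth-first from each failing asset and sums a usage score over everything it reaches. The graph shape, the asset names, and the usage numbers are hypothetical. Sifflet's actual prioritization combines more signals than this.

```python
from collections import deque


def downstream(lineage, source):
    """All assets reachable from `source` in a lineage graph (breadth-first search)."""
    seen = set()
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


def prioritize(alerts, lineage, usage):
    """Order failing assets by the total usage of the asset and everything downstream."""
    def impact(asset):
        affected = downstream(lineage, asset) | {asset}
        return sum(usage.get(a, 0) for a in affected)
    return sorted(alerts, key=impact, reverse=True)


# Hypothetical lineage (asset -> direct consumers) and usage (e.g. weekly queries/views)
lineage = {
    "raw_orders": ["stg_orders"],
    "stg_orders": ["revenue_dashboard", "churn_model"],
    "raw_logs": ["stg_logs"],
}
usage = {"revenue_dashboard": 120, "churn_model": 30, "stg_logs": 2}

print(prioritize(["raw_logs", "raw_orders"], lineage, usage))
# ['raw_orders', 'raw_logs']
```

Here a failure in `raw_orders` outranks one in `raw_logs` because it feeds a heavily used dashboard and a model, even though both are raw tables with no direct usage of their own.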
