Google BigQuery

Integrate Sifflet with BigQuery to monitor all table types, access field-level lineage, enrich metadata, and gain actionable insights for an optimized data observability strategy.

Metadata-based monitors and optimized queries

Sifflet uses BigQuery's metadata APIs and optimized queries to keep query costs low and monitor runs efficient.

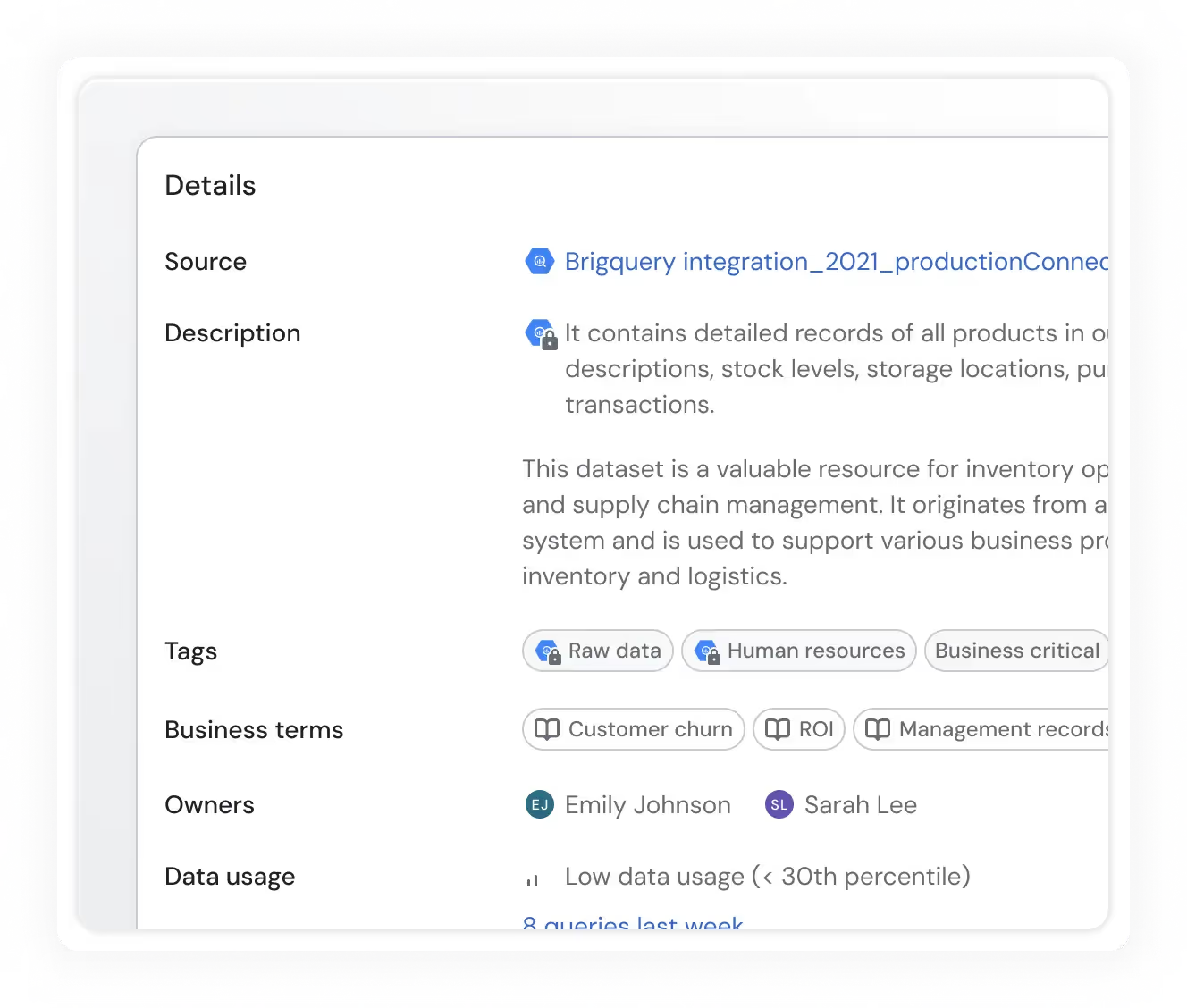
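Metadata-based monitoring is cheap because some checks never need to read table data. The sketch below shows a freshness check that looks only at each table's last-modified timestamp and never scans rows. It is not Sifflet's implementation. `TableMetadata` and `stale_tables` are hypothetical names, and the thresholds in the usage example are arbitrary.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Iterable, List, Optional


@dataclass
class TableMetadata:
    """Hypothetical subset of per-table metadata that a warehouse
    catalog can report without scanning any rows."""
    name: str
    last_modified: datetime  # timezone-aware timestamp of the last write
    row_count: int


def stale_tables(
    tables: Iterable[TableMetadata],
    max_age: timedelta,
    now: Optional[datetime] = None,
) -> List[str]:
    """Return the names of tables whose last write is older than max_age.

    Only metadata fields are read, so no table data is scanned.
    """
    if now is None:
        now = datetime.now(timezone.utc)
    return [t.name for t in tables if now - t.last_modified > max_age]


if __name__ == "__main__":
    now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
    tables = [
        TableMetadata("orders", datetime(2024, 1, 2, 11, 0, tzinfo=timezone.utc), 1200),
        TableMetadata("customers", datetime(2023, 12, 30, 8, 0, tzinfo=timezone.utc), 300),
    ]
    print(stale_tables(tables, timedelta(hours=24), now=now))  # ['customers']
```

The `google-cloud-bigquery` client exposes comparable fields on the `Table` objects that `Client.get_table()` returns, such as `modified` and `num_rows`. A check like this one can therefore run on metadata API calls instead of queries over the table contents.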

Usage and BigQuery metadata

Get detailed usage statistics for your BigQuery assets, along with metadata such as tags, descriptions, and table sizes retrieved directly from BigQuery.

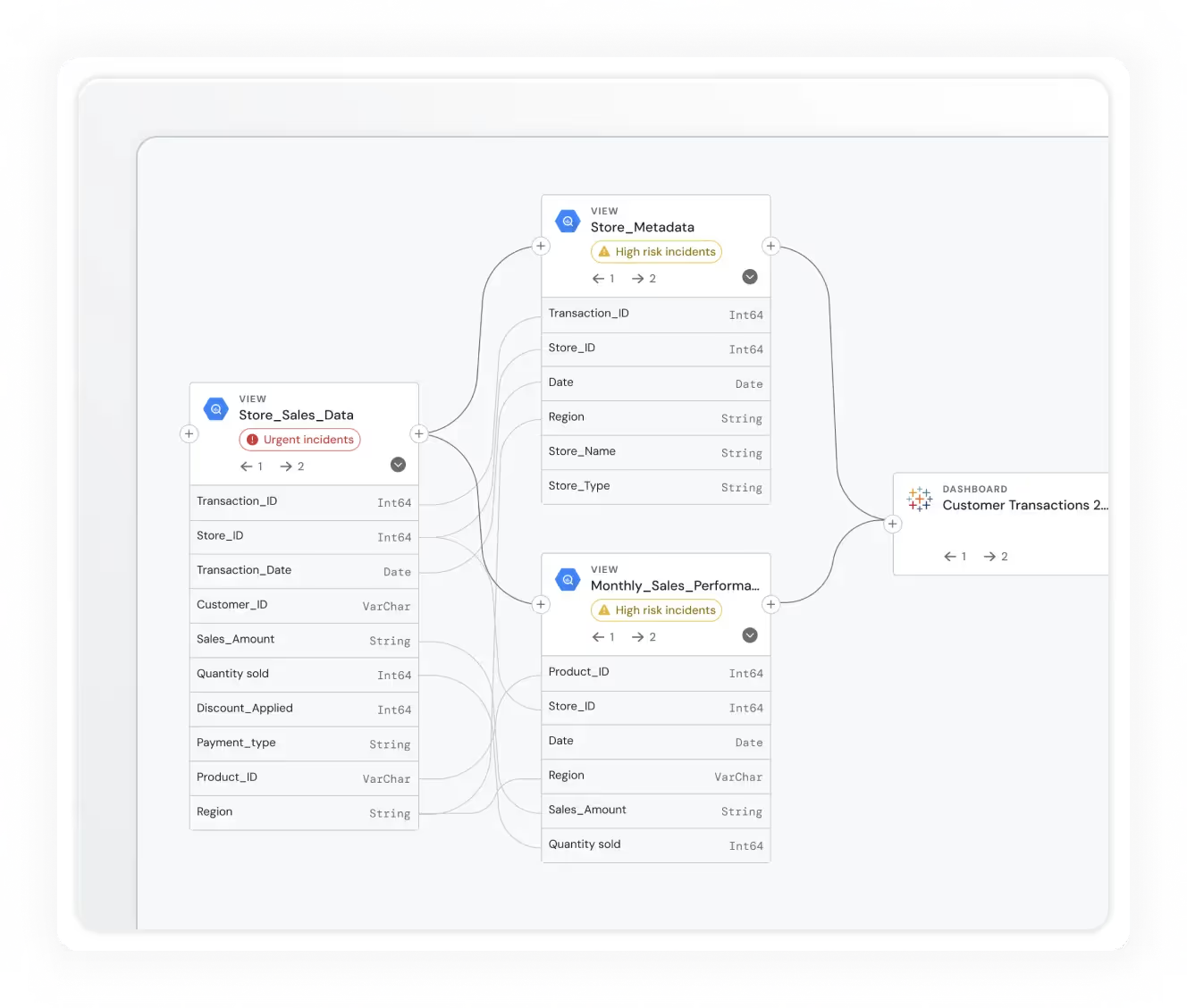

Field-level lineage

Understand exactly how data flows through your platform with end-to-end, field-level lineage for BigQuery.

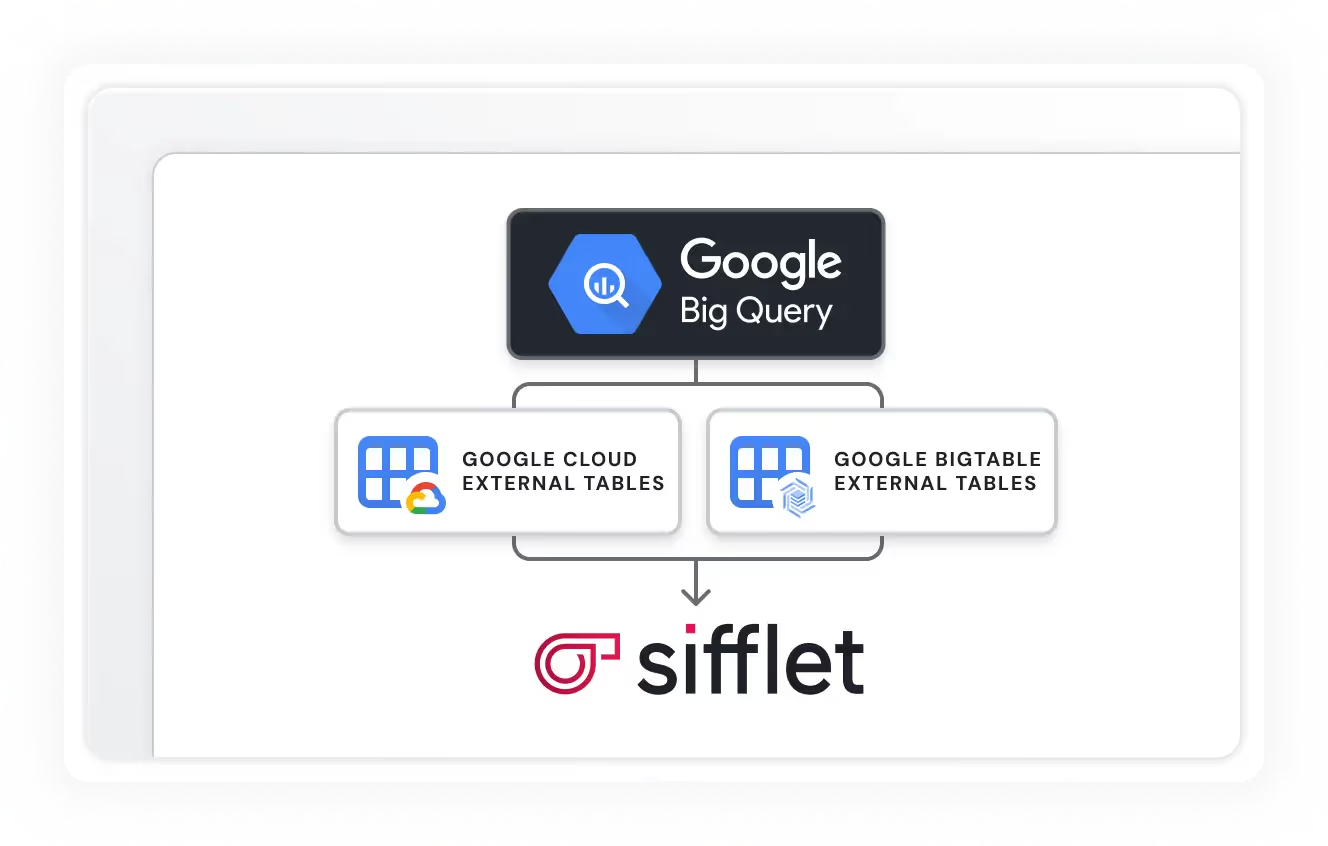

External table support

Sifflet can monitor external BigQuery tables to ensure the quality of data stored in other systems, such as Google Cloud Bigtable and Google Cloud Storage.


Frequently asked questions

How does Full Data Stack Observability help improve data quality at scale?

Full Data Stack Observability gives you end-to-end visibility into your data pipeline, from ingestion to consumption. It enables real-time anomaly detection, root cause analysis, and proactive alerts, so you can catch and resolve issues before they affect your dashboards or reports. This lets organizations scale their data quality efforts without a matching increase in manual checks.

Is Sifflet suitable for non-technical users who want to contribute to data quality?

Yes, and that’s one of the things we’re most excited about! Sifflet empowers non-technical users to define custom monitoring rules and participate in data quality efforts without needing to write dbt code. It’s all part of building a culture of shared responsibility around data governance and observability.

How does Sifflet’s Freshness Monitor scale across large data environments?

Sifflet’s Freshness Monitor is designed to scale to large environments. Thanks to our dynamic monitoring mode and continuous scan feature, you can monitor thousands of data assets without manually setting schedules. This makes it practical to monitor data pipelines across distributed systems and ensure SLA compliance at scale.

Why is investing in data observability important for business leaders?

Great question! Investing in data observability helps organizations proactively monitor the health of their data, reduce the risk of bad data incidents, and ensure data quality across pipelines. It also supports better decision-making, improves SLA compliance, and helps maintain trust in analytics. Ultimately, it’s a strategic move that protects your business from costly mistakes and missed opportunities.

How do I ensure SLA compliance during a cloud migration?

During a cloud migration, ensuring SLA compliance means keeping a close eye on metrics like throughput, resource utilization, and error rates. A robust observability platform can help you track these metrics in real time, so you stay within your service level objectives and keep stakeholders confident throughout the transition.

Why is an observability layer essential in the modern data stack, according to Meero’s experience?

For Meero, having an observability layer like Sifflet was crucial to ensure end-to-end visibility of their data pipelines. It allowed them to proactively monitor data quality, reduce downtime, and maintain SLA compliance, making it an indispensable part of their modern data stack.

Can open-source ETL tools support data observability needs?

Yes, many open-source ETL tools like Airbyte or Talend can be extended to support observability features. By integrating them with a cloud data observability platform like Sifflet, you can add telemetry instrumentation, anomaly detection, and alerting. This keeps your open-source stack robust, reliable, and ready for scale.

What’s new with the Distribution Change monitor and how does it improve anomaly detection?

The upgraded Distribution Change monitor now focuses on tracking volume shifts between specific categories, like product lines or customer segments. This makes anomaly detection more precise by reducing noise and highlighting only the changes that truly matter. It's a smarter way to stay on top of data drift and ensure your metrics reflect reality.
