Redshift

Integrate Sifflet with Redshift to access end-to-end lineage, monitor assets like Spectrum tables, enrich metadata, and gain insights for optimized data observability.

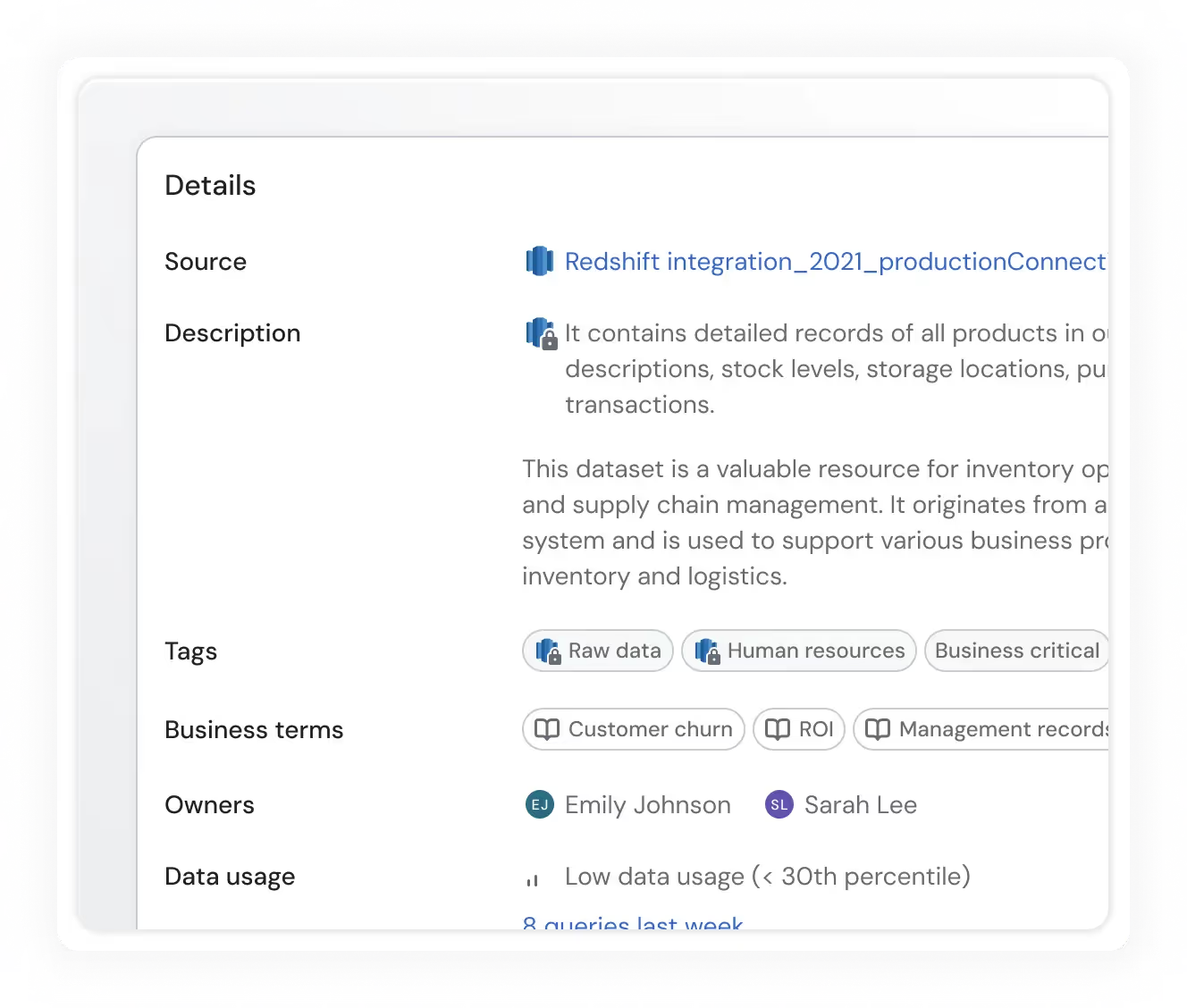

Exhaustive metadata

Sifflet leverages Redshift's internal metadata tables to retrieve information about your assets and enhance it with Sifflet-generated insights.
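As a rough illustration of the kind of information Redshift's system views expose, the sketch below reads basic table statistics from `SVV_TABLE_INFO`. It is not Sifflet's actual query: the helper name `fetch_table_metadata`, the output keys, and the choice of columns are assumptions made for this example, and `cursor` is assumed to be any Python DB-API cursor connected to Redshift.

```python
# Hypothetical sketch: read basic table metadata from Redshift's SVV_TABLE_INFO view.
# "schema" and "table" are quoted because they are reserved words.
TABLE_INFO_QUERY = """
SELECT "schema", "table", tbl_rows, size
FROM svv_table_info
ORDER BY "schema", "table"
"""


def fetch_table_metadata(cursor):
    """Return one dict per table, using any DB-API cursor connected to Redshift."""
    cursor.execute(TABLE_INFO_QUERY)
    return [
        {
            "schema": schema,
            "table": table,
            "row_count": int(tbl_rows),  # total rows in the table
            "size_mb": int(size),        # size is reported in 1 MB blocks
        }
        for schema, table, tbl_rows, size in cursor.fetchall()
    ]
```

Because the function only relies on `execute` and `fetchall`, it can be exercised with a stub cursor before pointing it at a real cluster.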

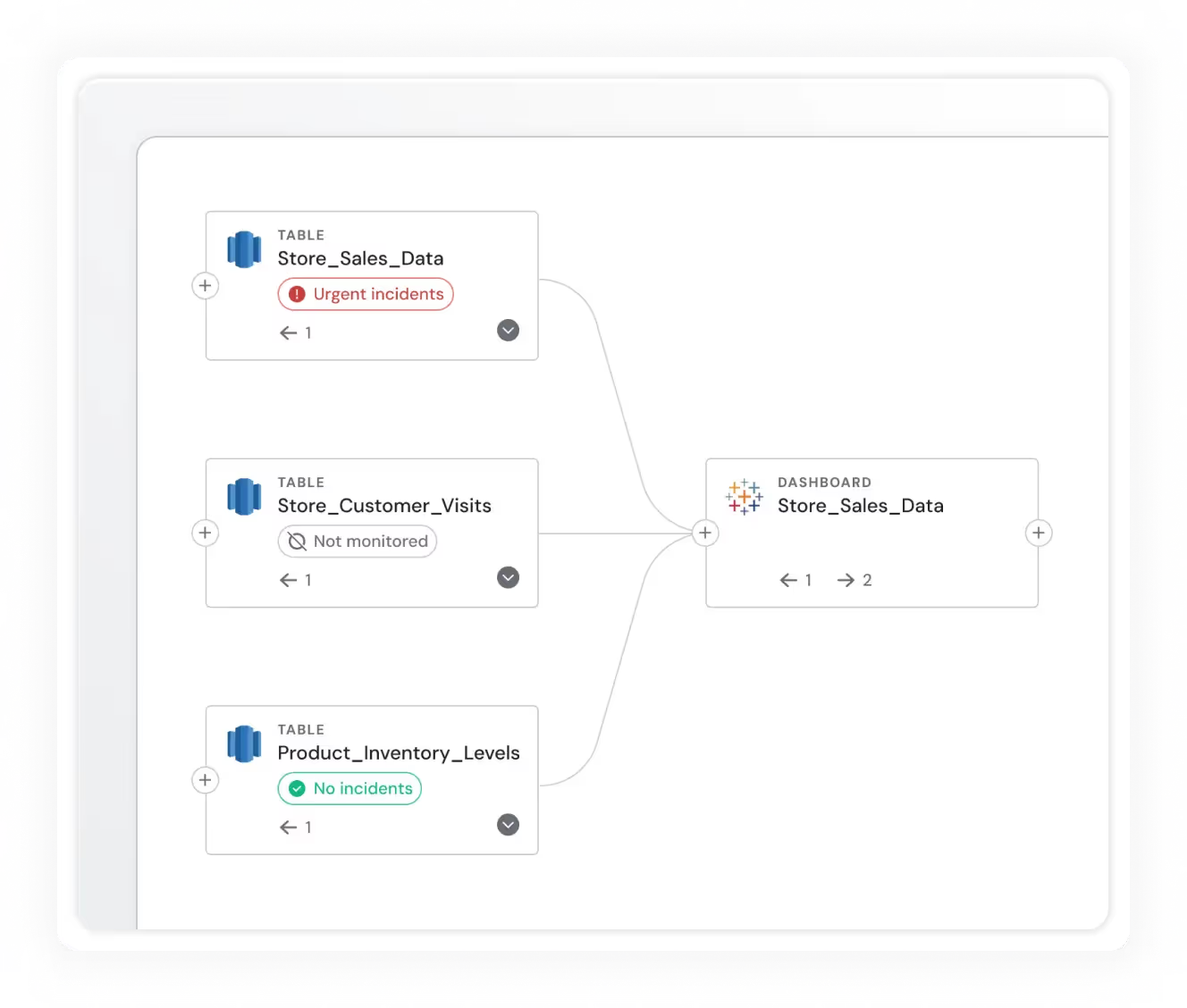

End-to-end lineage

Understand how data flows through your platform with end-to-end lineage for Redshift.

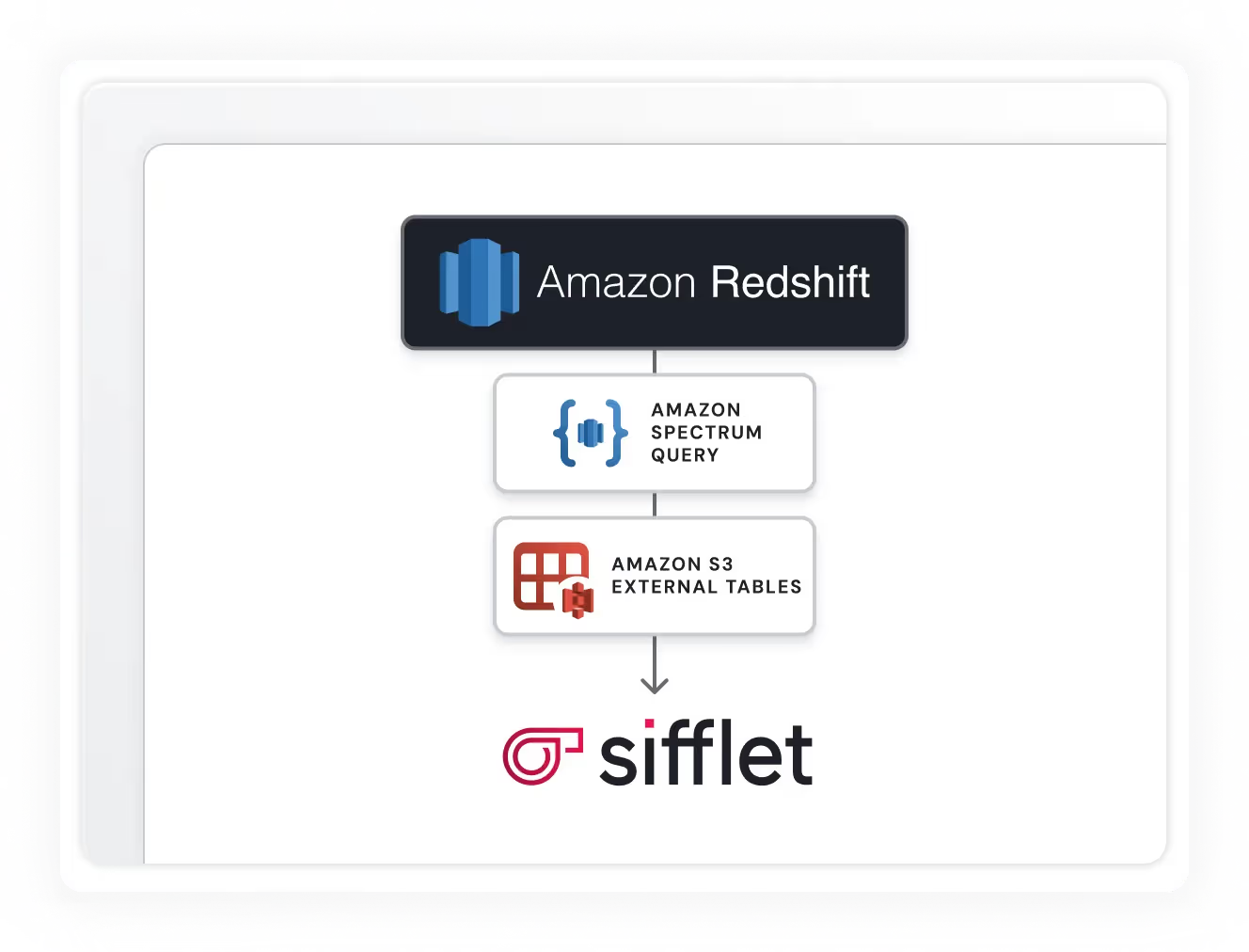

Redshift Spectrum support

Sifflet can monitor external tables via Redshift Spectrum, allowing you to ensure the quality of data stored in other systems like S3.

Still have a question in mind?

Contact Us

Frequently asked questions

What are some best practices for ensuring data quality during transformation?

To ensure high data quality during transformation, start with strong data profiling and cleaning steps, then use mapping and validation rules to align with business logic. Incorporating data lineage tracking and anomaly detection also helps maintain integrity. Observability tools like Sifflet make it easier to enforce these practices and continuously monitor for data drift or schema changes that could affect your pipeline.

What role does Sifflet’s data catalog play in observability?

Sifflet’s data catalog acts as the central hub for your data ecosystem, enriched with metadata and classification tags. This foundation supports cloud data observability by giving teams full visibility into their assets, enabling better data lineage tracking, telemetry instrumentation, and overall observability platform performance.

What improvements has Sifflet made to incident management workflows?

We’ve introduced Augmented Resolution to help teams group related alerts into a single collaborative ticket, streamlining incident response. Plus, with integrations into your ticketing systems, Sifflet ensures that data issues are tracked, communicated, and resolved efficiently. It’s all part of our mission to boost data reliability and support your operational intelligence.

What role does data observability play in preventing freshness incidents?

Data observability gives you the visibility to detect freshness problems before they impact the business. By combining metrics like data age, expected vs. actual arrival time, and pipeline health dashboards, observability tools help teams catch delays early, trace where things broke down, and maintain trust in real-time metrics.
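To make the freshness metrics above concrete, here is a minimal sketch of how data age and arrival delay could be computed for a single table. The names `FreshnessResult` and `check_freshness`, and the `grace` parameter, are hypothetical and chosen for this example; this is not how Sifflet or any particular tool implements freshness checks.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class FreshnessResult:
    data_age: timedelta       # time elapsed since the last successful load
    arrival_delay: timedelta  # time past the expected arrival (zero if on time)
    is_stale: bool            # True when the delay exceeds the grace period


def check_freshness(last_loaded_at, expected_interval, now, grace=timedelta(0)):
    """Compare the actual arrival time of new data against the expected one."""
    data_age = now - last_loaded_at
    expected_arrival = last_loaded_at + expected_interval
    # Clamp at zero so that data which is not yet due reports no delay.
    arrival_delay = max(now - expected_arrival, timedelta(0))
    return FreshnessResult(data_age, arrival_delay, arrival_delay > grace)


# Example: an hourly table last loaded three hours ago.
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
late = check_freshness(
    last_loaded_at=datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc),
    expected_interval=timedelta(hours=1),
    now=now,
    grace=timedelta(minutes=15),
)
```

The grace period is a design choice: a small tolerance avoids alerting on loads that are only a few minutes late.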

Which ingestion tools work best with cloud data observability platforms?

Popular ingestion tools like Fivetran, Stitch, and Apache Kafka integrate well with cloud data observability platforms. They offer strong support for telemetry instrumentation, real-time ingestion, and schema registry integration. Pairing them with observability tools ensures your data stays reliable and actionable across your entire stack.

Why is anomaly detection a standout feature for Monte Carlo?

Monte Carlo is known for its zero-config, ML-powered anomaly detection. It starts flagging issues like data drift or schema changes right out of the box, making it ideal for fast deployments. This helps teams reduce alert fatigue and stay ahead of data downtime without deep manual tuning.

Why is data quality monitoring so important for data-driven decision-making, especially in uncertain times?

Great question! Data quality monitoring helps ensure that the data you're relying on is accurate, timely and complete. In high-stress or uncertain situations, poor data can lead to poor decisions. By implementing scalable data quality monitoring, including anomaly detection and data freshness checks, you can avoid the 'garbage in, garbage out' problem and make confident, informed decisions.

What is the difference between data monitoring and data observability?

Great question! Data monitoring is like your car's dashboard—it alerts you when something goes wrong, like a failed pipeline or a missing dataset. Data observability, on the other hand, is like being the driver. It gives you a full understanding of how your data behaves, where it comes from, and how issues impact downstream systems. At Sifflet, we believe in going beyond alerts to deliver true data observability across your entire stack.
