Cost-efficient data pipelines

Pinpoint cost inefficiencies and anomalies thanks to full-stack data observability.

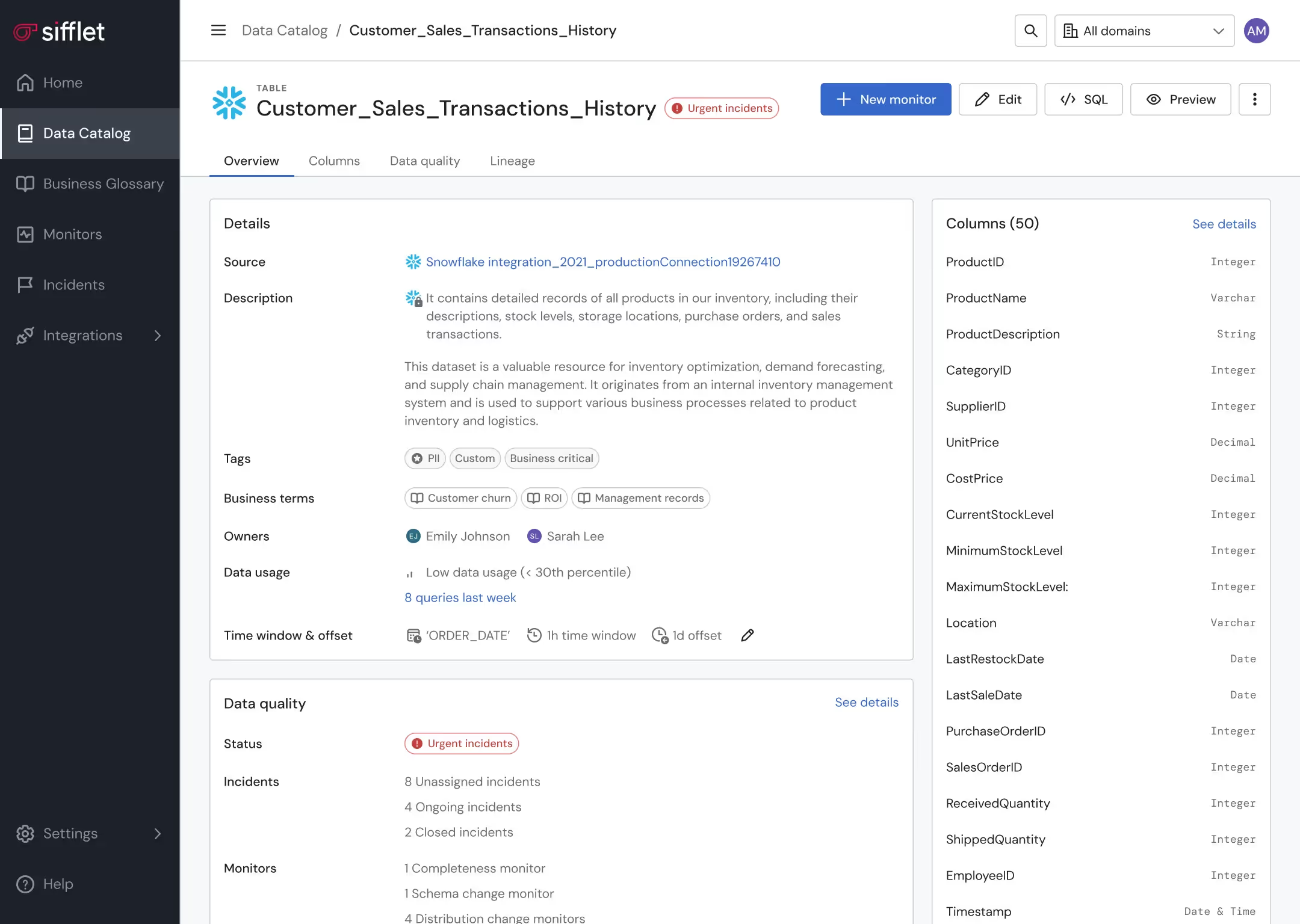

Data asset optimization

- Leverage lineage and the Data Catalog to pinpoint underutilized assets

- Get alerted to unexpected changes in data consumption patterns

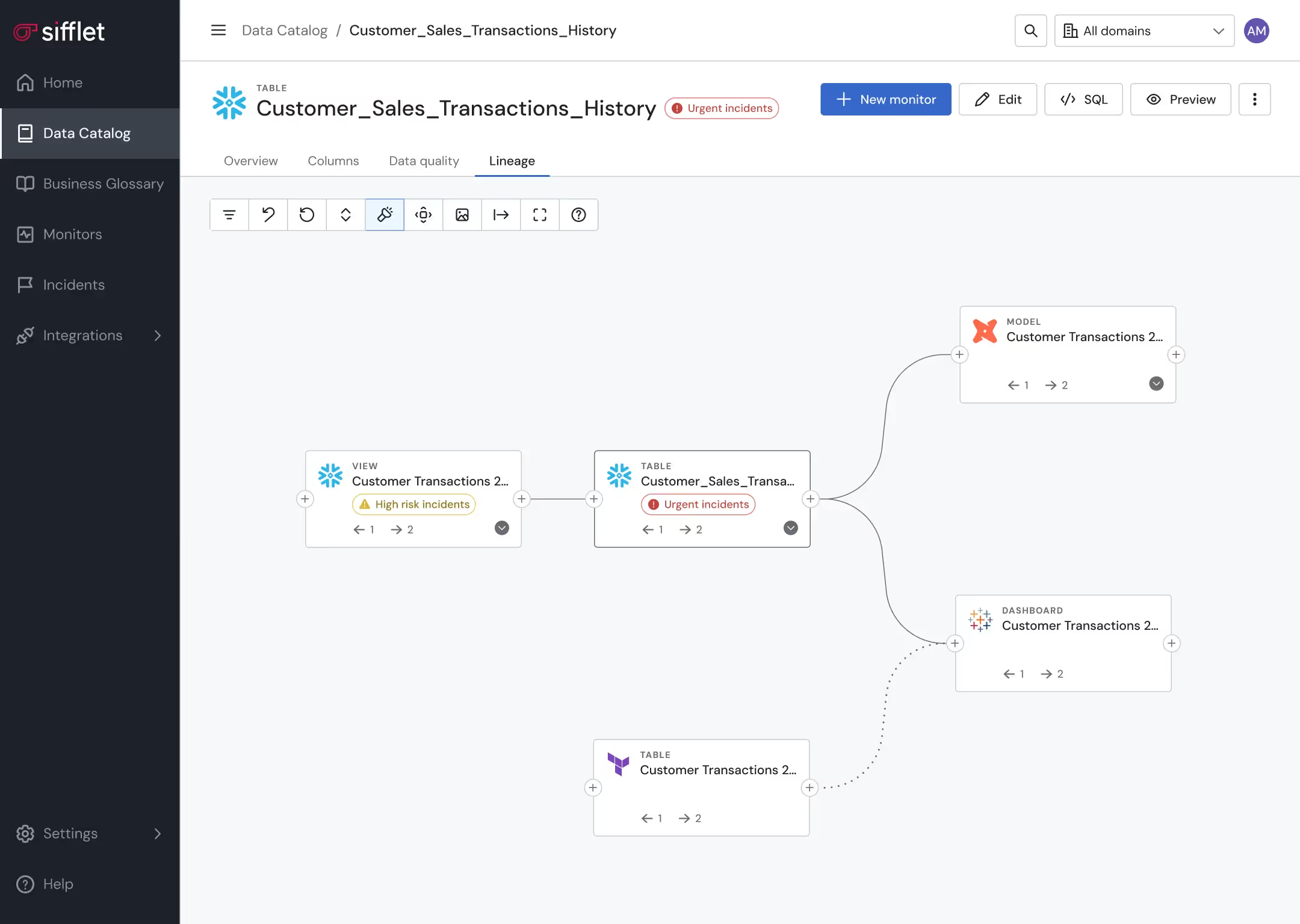

Proactive data pipeline management

Stop pipelines from running as soon as a data quality anomaly is detected, so bad data never triggers wasted compute downstream.
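To make the idea concrete, here is a minimal sketch of a quality gate placed in front of a pipeline run. It is an illustration only, not Sifflet's API: the names `QualityCheck`, `failed_checks`, and `run_if_healthy` are hypothetical, and each check is assumed to be a simple function that returns `True` when the data looks healthy.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class QualityCheck:
    """Hypothetical check: `passes` returns True when the data looks healthy."""
    name: str
    passes: Callable[[], bool]


def failed_checks(checks: Iterable[QualityCheck]) -> List[str]:
    """Return the names of all checks that report an anomaly."""
    return [check.name for check in checks if not check.passes()]


def run_if_healthy(checks: Iterable[QualityCheck],
                   pipeline: Callable[[], None]) -> bool:
    """Run the pipeline only when every check passes; return whether it ran."""
    failures = failed_checks(checks)
    if failures:
        # Skip the run so no compute is spent on data known to be bad.
        print(f"Pipeline skipped, anomalies detected in: {', '.join(failures)}")
        return False
    pipeline()
    return True
```

In practice the checks would query whatever monitoring results are available, and the gate would sit in the orchestrator as the first task of the run.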

Still have a question?

Contact Us

Frequently asked questions

How does the Model Context Protocol (MCP) improve data observability with LLMs?

Great question! MCP allows large language models to access structured external context like pipeline metadata, logs, and diagnostics tools. At Sifflet, we use MCP to enhance data observability by enabling intelligent agents to monitor, diagnose, and act on issues across complex data pipelines in real time.

How does Forge support incident response automation?

Forge is our resolution agent that turns insights into actions. It recommends specific fixes based on past incidents, and with your approval, it can execute them automatically. Whether it’s retrying a dbt job or running a backfill, Forge reduces manual work and speeds up recovery. It’s a big step forward in incident response automation and keeping your data pipelines healthy.

How did implementing a data observability platform impact Hypebeast’s operations?

After adopting Sifflet’s observability platform, Hypebeast saw a 204% improvement in data quality, a 178% increase in data product delivery, and a 75% boost in ad hoc request speed. These gains translated into faster, more reliable insights and better collaboration across departments.

How can data observability help reduce data entropy?

Data entropy refers to the chaos and disorder in modern data environments. A strong data observability platform helps reduce this by providing real-time metrics, anomaly detection, and data lineage tracking. This gives teams better visibility across their data pipelines and helps them catch issues early before they impact the business.

How does data quality monitoring help prevent downstream issues?

Data quality monitoring plays a crucial role in catching issues like null values, schema mismatches, or unexpected patterns before they reach dashboards or machine learning models. With intelligent anomaly detection and automated rule suggestions, platforms like Sifflet make it easier to maintain high data reliability at scale.
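As a rough illustration of the kinds of checks mentioned above, the sketch below computes a null rate for a column and lists schema mismatches in a batch of rows. It is a simplified example, not how Sifflet implements monitoring: the function names, the row-as-dictionary format, and the expected-schema mapping are all assumptions made for the example.

```python
from typing import Any, Dict, List

Row = Dict[str, Any]


def null_rate(rows: List[Row], column: str) -> float:
    """Share of rows where the column is missing or None (0.0 for no rows)."""
    if not rows:
        return 0.0
    missing = sum(1 for row in rows if row.get(column) is None)
    return missing / len(rows)


def schema_mismatches(rows: List[Row], expected: Dict[str, type]) -> List[str]:
    """Describe every missing column or wrongly typed value in the batch."""
    problems: List[str] = []
    for index, row in enumerate(rows):
        for column, expected_type in expected.items():
            if column not in row:
                problems.append(f"row {index}: missing column '{column}'")
            elif row[column] is not None and not isinstance(row[column], expected_type):
                actual = type(row[column]).__name__
                problems.append(
                    f"row {index}: '{column}' is {actual}, "
                    f"expected {expected_type.__name__}"
                )
    return problems
```

A monitor built on checks like these would compare the null rate against a threshold and raise an alert on any mismatch before the batch reaches a dashboard or model.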

How does Acceldata support data pipeline monitoring in complex environments?

Acceldata is built for enterprises with hybrid or multi-system environments. It offers deep data pipeline monitoring by tracking everything from infrastructure health to storage and compute usage. This full-stack approach helps teams detect issues early, manage cost, and ensure SLA compliance across sprawling data ecosystems.

What role does MCP play in improving incident response automation?

MCP is a game-changer for incident response automation. By allowing LLMs to interact with telemetry data, call remediation tools, and maintain context over time, MCP enables proactive monitoring and faster resolution. This aligns perfectly with Sifflet’s mission to reduce downtime and improve pipeline resilience.

What role does MCP play in improving data quality monitoring?

MCP enables LLMs to access structured context like schema changes, validation rules, and logs, making it easier to detect and explain data quality issues. With tool calls and memory, agents can continuously monitor pipelines and proactively alert teams when data quality deteriorates. This supports better SLA compliance and more reliable data operations.
