Cost-efficient data pipelines

Pinpoint cost inefficiencies and anomalies with full-stack data observability.

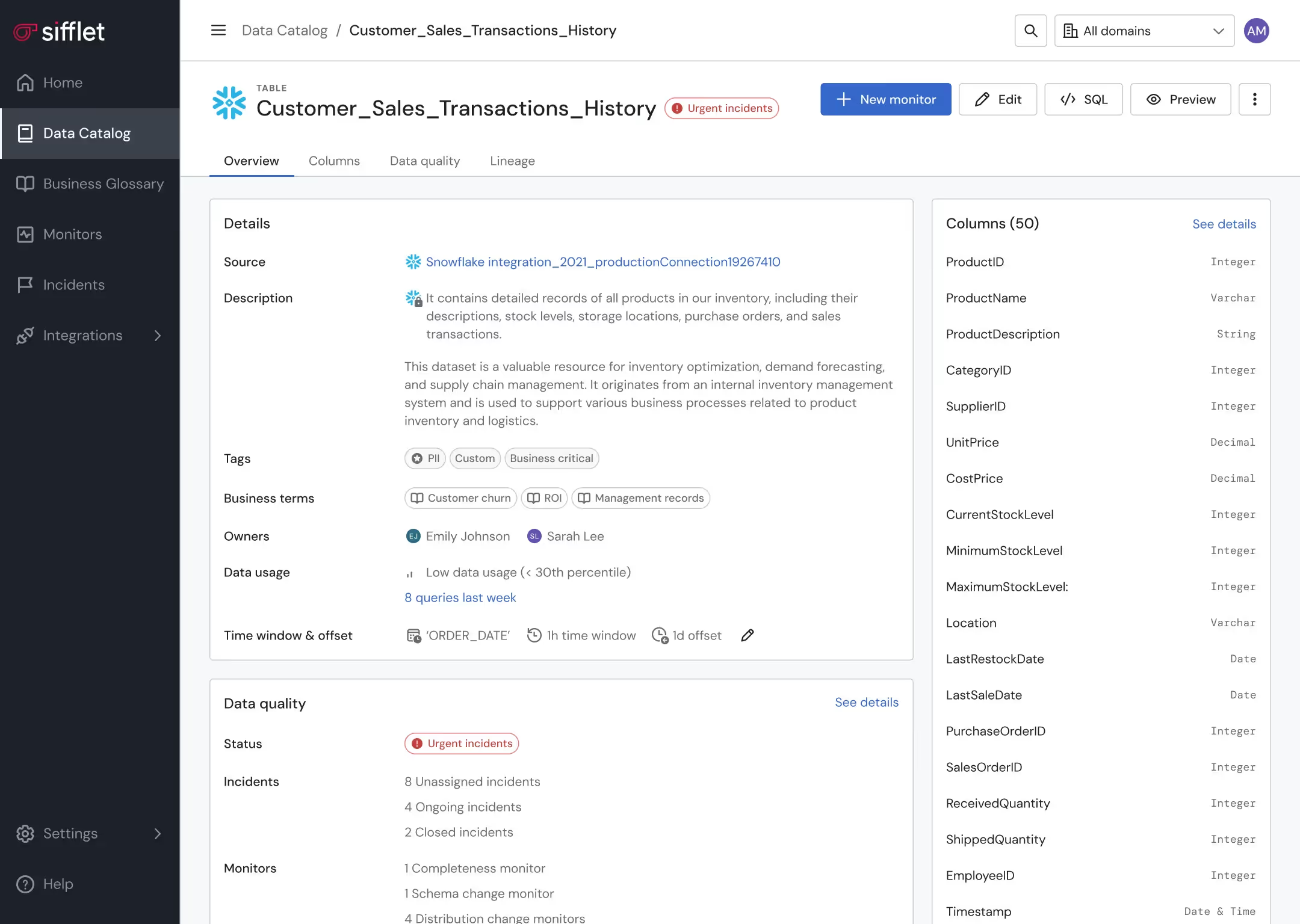

Data asset optimization

- Use data lineage and the Data Catalog to pinpoint underutilized assets

- Get alerted to unexpected changes in data consumption patterns
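As a rough illustration of the lineage-based approach, the sketch below flags assets that feed nothing downstream and are rarely read. It is not Sifflet's implementation: the asset names, the `LINEAGE` edge list, the `READS_LAST_30D` counts and the `min_reads` threshold are all hypothetical stand-ins for what a catalog and query logs would provide.

```python
from collections import defaultdict

# Hypothetical lineage edges: (upstream_asset, downstream_asset).
LINEAGE = [
    ("raw.orders", "staging.orders"),
    ("staging.orders", "marts.revenue"),
    ("marts.revenue", "dashboard.revenue"),
    ("staging.customers", "marts.customer_ltv"),
]

# Hypothetical direct read counts over the last 30 days (e.g. from query logs).
READS_LAST_30D = {
    "raw.orders": 0,
    "staging.orders": 12,
    "marts.revenue": 40,
    "dashboard.revenue": 95,
    "staging.customers": 3,
    "marts.customer_ltv": 0,
}


def underutilized_assets(edges, reads, min_reads=1):
    """Flag assets that have no downstream dependents and fewer than `min_reads` reads."""
    downstream = defaultdict(set)
    assets = set(reads)
    for upstream, dependent in edges:
        downstream[upstream].add(dependent)
        assets.update((upstream, dependent))
    # An asset that still feeds something downstream is kept even with zero direct reads.
    return sorted(
        asset for asset in assets
        if not downstream[asset] and reads.get(asset, 0) < min_reads
    )


print(underutilized_assets(LINEAGE, READS_LAST_30D))  # ['marts.customer_ltv']
```

Here `marts.customer_ltv` is flagged because nothing consumes it downstream and nobody read it directly, while `raw.orders` is kept despite zero direct reads because other assets depend on it.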

Proactive data pipeline management

Stop pipelines from running as soon as a data quality anomaly is detected.
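A minimal sketch of such a circuit breaker in plain Python, assuming a hypothetical null-rate check on a `customer_id` column with a 5% threshold. The function and exception names are illustrative, not a Sifflet API; an orchestrator would typically express the same idea as a failing upstream task that blocks its dependents.

```python
class DataQualityAnomaly(Exception):
    """Raised to halt the pipeline when a quality check fails."""


def check_null_rate(rows, column, max_null_rate):
    """Raise DataQualityAnomaly if too many rows have a missing value in `column`."""
    if not rows:
        raise DataQualityAnomaly(f"no rows received for {column!r}")
    nulls = sum(1 for row in rows if row.get(column) is None)
    rate = nulls / len(rows)
    if rate > max_null_rate:
        raise DataQualityAnomaly(
            f"{column!r} null rate {rate:.0%} exceeds {max_null_rate:.0%}"
        )
    return rate


def run_pipeline(rows, steps, max_null_rate=0.05):
    """Run the quality gate first; an anomaly stops execution before any step runs."""
    check_null_rate(rows, "customer_id", max_null_rate)
    for step in steps:
        rows = step(rows)
    return rows
```

Placing the check before the loop is the whole design: a failed check raises before any downstream step consumes, or pays to process, the bad batch.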

Frequently asked questions

Can classification tags improve data pipeline monitoring?

Absolutely! By tagging fields with classification tags such as 'Low Cardinality', data teams can quickly identify which fields are best suited to specific monitors. This enables more targeted data pipeline monitoring, making it easier to detect anomalies and maintain SLA compliance across your analytics pipelines.
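One way to derive such a tag automatically is to count distinct values per column. The sketch below illustrates the idea under stated assumptions and is not how Sifflet assigns tags: the `tag_low_cardinality` helper, its thresholds (`max_distinct`, `max_ratio`) and the sample `orders` rows are hypothetical. Columns tagged this way are natural candidates for checks such as accepted-values or value-distribution monitors.

```python
def tag_low_cardinality(rows, max_distinct=10, max_ratio=0.05):
    """Return {column: "Low Cardinality"} for columns with few distinct non-null values.

    A column qualifies when it has at most `max_distinct` distinct values AND
    those values make up at most `max_ratio` of its non-null rows.
    """
    tags = {}
    columns = sorted({col for row in rows for col in row})
    for col in columns:
        values = [row[col] for row in rows if row.get(col) is not None]
        if not values:
            continue  # an all-null column gives no signal either way
        distinct = len(set(values))
        if distinct <= max_distinct and distinct / len(values) <= max_ratio:
            tags[col] = "Low Cardinality"
    return tags


# Hypothetical sample: a unique key and a status field with three values.
orders = [
    {"order_id": i, "status": ("new", "shipped", "returned")[i % 3]}
    for i in range(100)
]
print(tag_low_cardinality(orders))  # {'status': 'Low Cardinality'}
```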

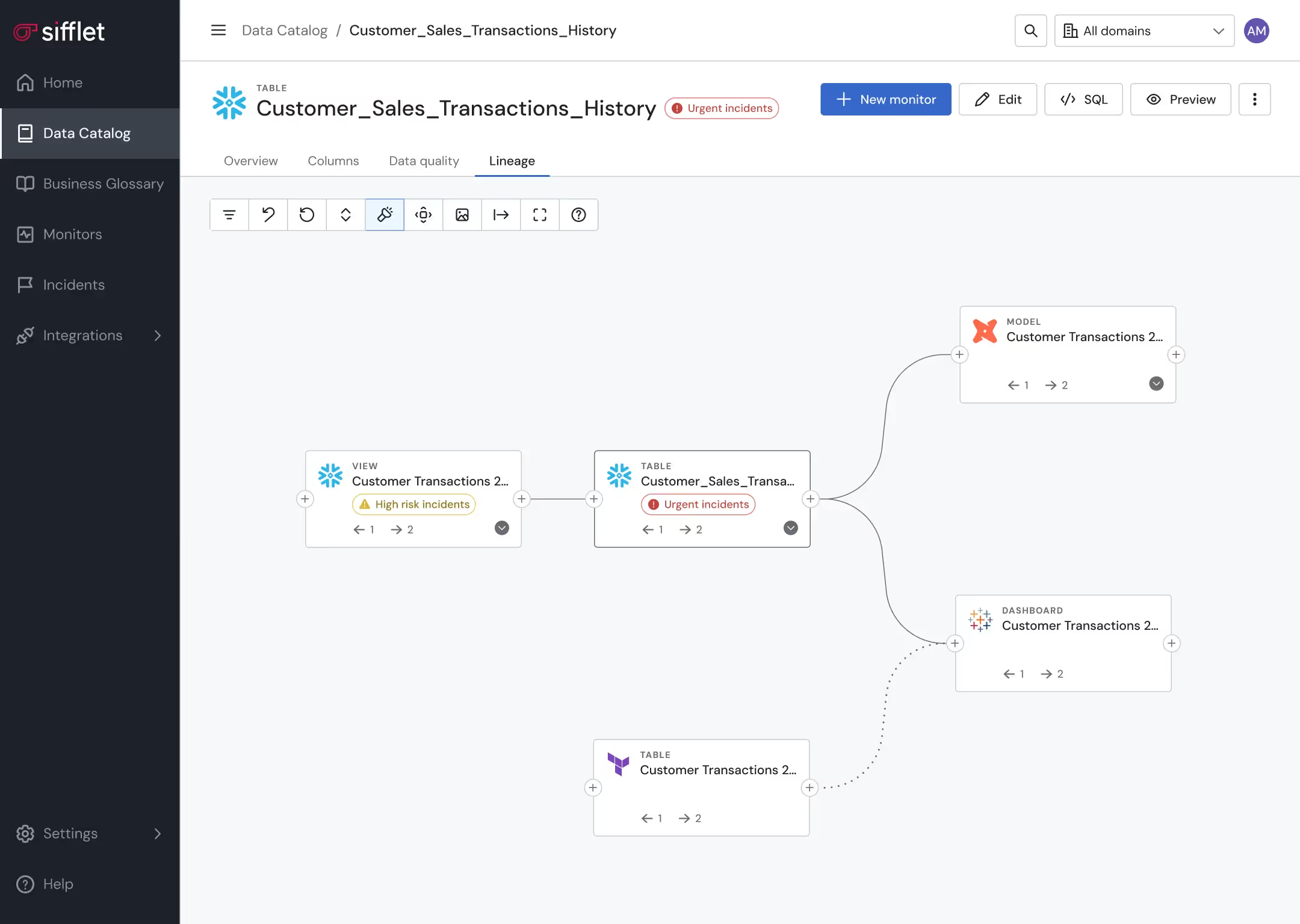

What is data lineage and why does it matter for modern data teams?

Data lineage is the process of mapping the journey of data from its origin to its final destination, including every transformation it undergoes along the way. It's essential for data pipeline monitoring and root cause analysis because it shows teams where a data issue originates, which saves time when an incident puts them under pressure.
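Using lineage for root cause analysis comes down to walking the dependency graph against the flow of data. A minimal sketch, assuming lineage is available as a list of hypothetical `(upstream, downstream)` edges and that we already know which assets have failing checks: the likely origin is a failing asset whose own parents are all healthy. The asset names and helper functions are illustrative only.

```python
from collections import defaultdict, deque

# Hypothetical lineage edges (upstream, downstream) and assets with failing checks.
EDGES = [
    ("raw.orders", "staging.orders"),
    ("staging.orders", "marts.revenue"),
    ("raw.fx_rates", "marts.revenue"),
    ("marts.revenue", "dashboard.revenue"),
]
FAILING = {"raw.fx_rates", "marts.revenue", "dashboard.revenue"}


def build_parents(edges):
    """Index the graph by child so we can walk upstream."""
    parents = defaultdict(set)
    for upstream, downstream in edges:
        parents[downstream].add(upstream)
    return parents


def upstream_of(parents, asset):
    """Breadth-first walk against the flow of data; returns all ancestors of `asset`."""
    seen, queue = set(), deque([asset])
    while queue:
        node = queue.popleft()
        for parent in parents[node]:
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return seen


def root_cause_candidates(edges, broken_asset, failing):
    """Failing assets at or above `broken_asset` that have no failing parent."""
    parents = build_parents(edges)
    scope = upstream_of(parents, broken_asset) | {broken_asset}
    return sorted(a for a in scope & failing if not (parents[a] & failing))


print(root_cause_candidates(EDGES, "dashboard.revenue", FAILING))  # ['raw.fx_rates']
```

Here the broken dashboard traces back to `raw.fx_rates`; `marts.revenue` also fails, but only because it inherits the bad input.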

Can I see how a business metric is calculated in Sifflet?

Absolutely! With Sifflet’s data lineage tracking, users can view the full column-level lineage from ingestion to consumption. This transparency helps users understand how each metric is computed and how it relates to other data or metrics in the pipeline.

How can organizations choose the right observability tools for their data stack?

Choosing the right observability tools depends on your data maturity and stack complexity. Look for platforms that offer comprehensive data quality monitoring, support for both batch and streaming data, and features like data lineage tracking and alert correlation. Platforms like Sifflet provide end-to-end visibility, making it easier to maintain SLA compliance and reduce incident response times.

How does Sifflet help reduce AI bias and improve model fairness?

Reducing AI bias starts with understanding your data. Sifflet’s observability platform gives you deep visibility into data sources, transformations, and quality. By tracking data lineage and applying data profiling, teams can identify and correct biased inputs before they affect model outcomes. This transparency helps build more ethical and reliable AI systems.
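As a small example of the profiling step, the sketch below measures how each group is represented in one column and flags groups below a minimum share. The `representation_report` helper, the `region` column, the sample rows and the 10% threshold are hypothetical; representation is only one of many signals worth profiling for bias.

```python
from collections import Counter


def representation_report(rows, column, min_share):
    """Return each value's share of non-null rows in `column`, plus values below min_share."""
    counts = Counter(row[column] for row in rows if row.get(column) is not None)
    total = sum(counts.values())
    shares = {value: n / total for value, n in counts.items()}
    under_represented = sorted(v for v, s in shares.items() if s < min_share)
    return shares, under_represented


# Hypothetical training rows: 80 EU, 15 US, 5 APAC.
training_rows = (
    [{"region": "EU"}] * 80 + [{"region": "US"}] * 15 + [{"region": "APAC"}] * 5
)
shares, flagged = representation_report(training_rows, "region", min_share=0.10)
print(flagged)  # ['APAC']
```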

Why is data quality such a critical part of a data governance strategy?

Great question! Data quality is one of the foundational pillars of a strong data governance strategy because it directly impacts decision-making, compliance, and trust in your data. Poor data quality can lead to biased AI models, flawed analytics, and even regulatory risk. That's why integrating data quality monitoring early in your data lifecycle is key to building a reliable and responsible data foundation.

What is the Model Context Protocol (MCP), and why is it important for data observability?

The Model Context Protocol (MCP) is a new interface standard developed by Anthropic that allows large language models (LLMs) to interact with tools, retain memory, and access external context. At Sifflet, we're excited about MCP because it enables more intelligent agents that can help with data observability by diagnosing issues, triggering remediation tools, and maintaining context across long-running investigations.

How does Sifflet support data lineage tracking and context enrichment?

Sifflet enriches your data catalog with lineage tracking and context by incorporating dbt model descriptions, input-output dataset views, and AI-powered recommendations. This enrichment helps users quickly understand where data comes from and how it's used, so they can trust and use it with confidence.