Cost-efficient data pipelines

Pinpoint cost inefficiencies and anomalies thanks to full-stack data observability.

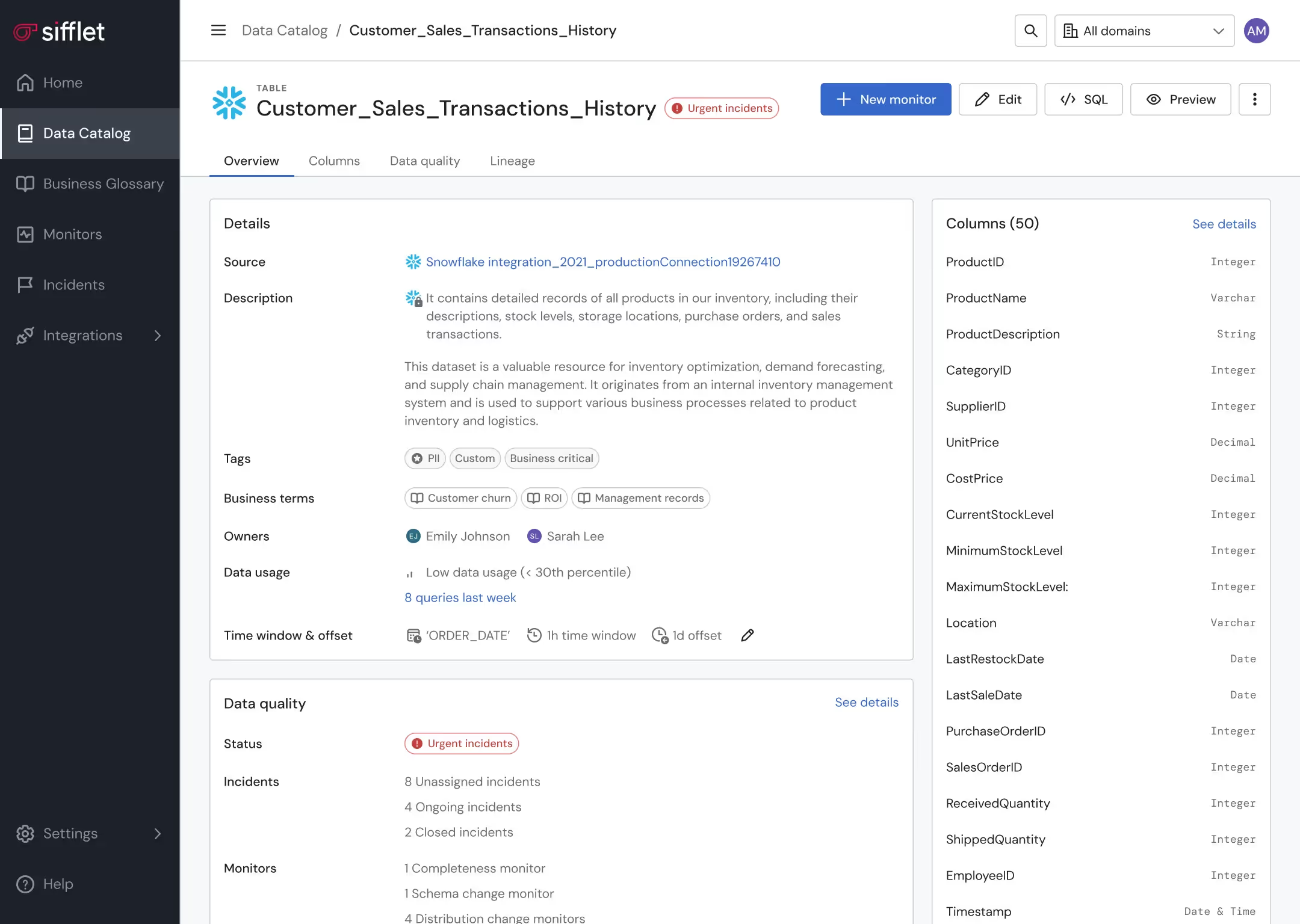

Data asset optimization

- Leverage lineage and Data Catalog to pinpoint underutilized assets

- Get alerted on unexpected behaviors in data consumption patterns

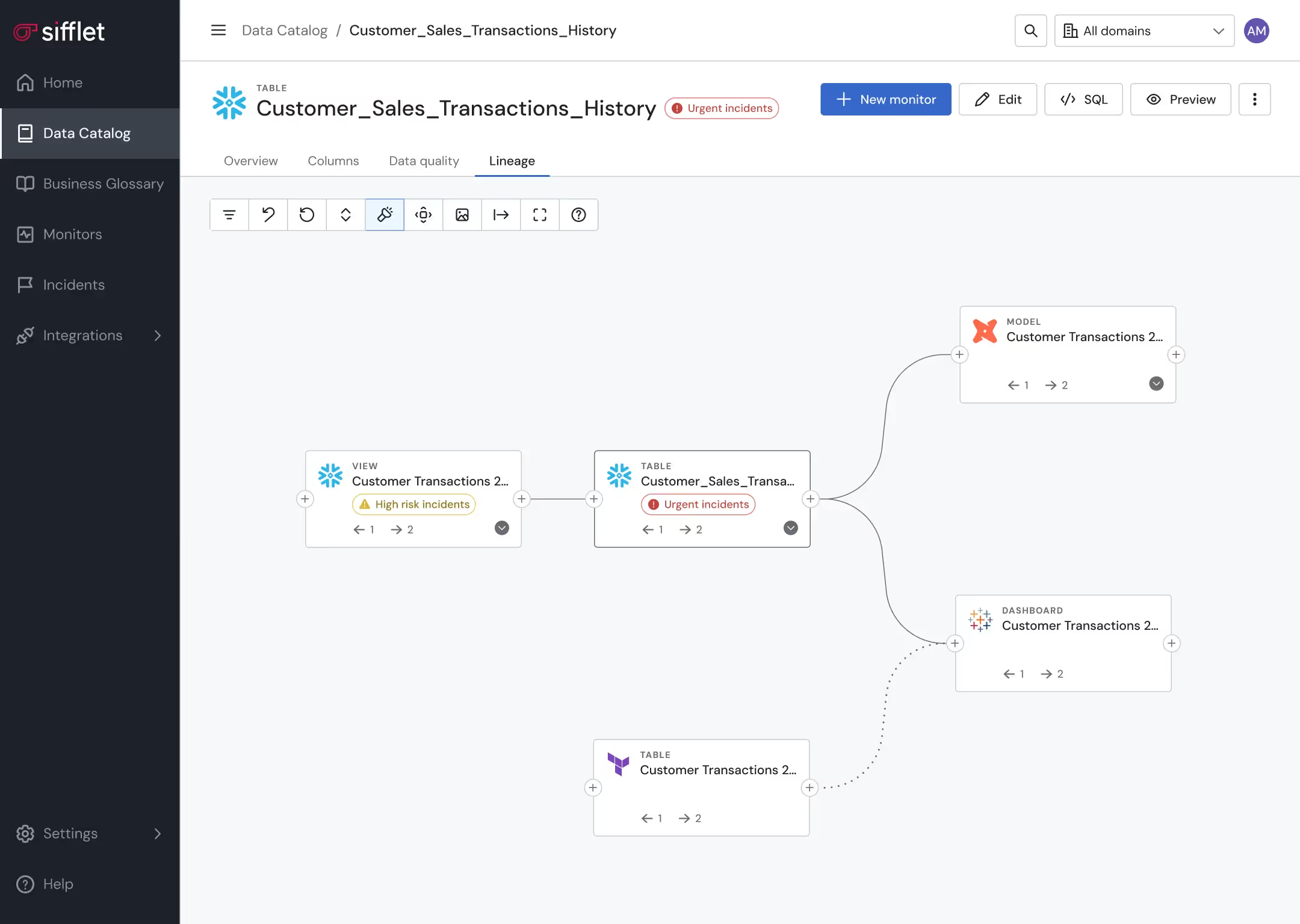

Proactive data pipeline management

Stop pipelines from running as soon as a data quality anomaly is detected.
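As a rough illustration of the idea, the sketch below gates a pipeline run behind data quality checks. It is a minimal example under stated assumptions, not a description of any product's actual API: `CheckResult`, `null_rate_check`, and `run_if_healthy` are hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Mapping, Optional


@dataclass
class CheckResult:
    """Outcome of one data quality check (hypothetical type for this sketch)."""
    name: str
    passed: bool


def null_rate_check(rows: Iterable[Mapping[str, Optional[object]]],
                    column: str,
                    max_null_rate: float) -> CheckResult:
    """Pass when the share of missing values in `column` is at or below the threshold."""
    rows = list(rows)
    nulls = sum(1 for row in rows if row.get(column) is None)
    # An empty input is treated as fully null so that it fails the check.
    rate = nulls / len(rows) if rows else 1.0
    return CheckResult(f"null_rate({column})", rate <= max_null_rate)


def run_if_healthy(checks: Iterable[CheckResult],
                   pipeline: Callable[[], None]) -> str:
    """Run `pipeline` only if every check passed; otherwise skip it."""
    failed = [check.name for check in checks if not check.passed]
    if failed:
        return "skipped: " + ", ".join(failed)
    pipeline()
    return "ran"
```

In an orchestrator, the same gate would typically sit in front of the expensive downstream task, so that a failed check saves the compute cost of processing bad data.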

Still have a question in mind?

Contact Us

Frequently asked questions

How does Dailymotion foster a strong data culture beyond just using observability tools?

They’ve implemented a full enablement program with starter kits, training sessions, and office hours to build data literacy and trust. Observability tools are just one part of the equation; the real focus is on enabling confident, autonomous decision-making across the organization.

What is SQL Table Tracer and how does it help with data lineage tracking?

SQL Table Tracer (STT) is a lightweight library that automatically extracts table-level lineage from SQL queries. It identifies both destination and upstream tables, making it easier to understand data dependencies and build reliable data lineage workflows. This is a key component of any effective data observability strategy.
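To make "table-level lineage" concrete, here is a deliberately simplified sketch that pulls a destination table and its upstream tables out of a single SQL statement using regular expressions. It does not use or reproduce STT's API; `table_lineage` is a hypothetical helper, and a regex approach like this will misread CTEs, `IF NOT EXISTS` clauses, quoted identifiers, and other constructs that a real SQL parser handles.

```python
import re

# Destination: the table written by INSERT INTO or CREATE [OR REPLACE] TABLE.
_DEST = re.compile(
    r"\b(?:insert\s+into|create\s+(?:or\s+replace\s+)?table)\s+([\w.]+)",
    re.IGNORECASE,
)
# Upstream: any table name that directly follows FROM or JOIN.
_SRC = re.compile(r"\b(?:from|join)\s+([\w.]+)", re.IGNORECASE)


def table_lineage(sql: str) -> dict:
    """Return the destination table and sorted upstream tables for one statement."""
    match = _DEST.search(sql)
    destination = match.group(1) if match else None
    upstream = sorted(set(_SRC.findall(sql)) - {destination})
    return {"destination": destination, "upstream": upstream}


query = """
INSERT INTO analytics.daily_views
SELECT v.video_id, COUNT(*)
FROM raw.views v
JOIN raw.videos d ON v.video_id = d.id
GROUP BY 1
"""
print(table_lineage(query))
# {'destination': 'analytics.daily_views', 'upstream': ['raw.videos', 'raw.views']}
```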

How do I ensure SLA compliance during a cloud migration?

Ensuring SLA compliance means keeping a close eye on metrics like throughput, resource utilization, and error rates. A robust observability platform can help you track these metrics in real time, so you stay within your service level objectives and keep stakeholders confident.
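As a small sketch of what tracking these metrics against objectives can look like, the example below compares one metrics snapshot with a set of thresholds. The `Slo` class, the metric keys, and the threshold values are all assumptions made for illustration; a real platform would define its own schema and evaluate over time windows rather than single snapshots.

```python
from dataclasses import dataclass
from typing import List, Mapping


@dataclass(frozen=True)
class Slo:
    """Hypothetical service level objectives for a migrated pipeline."""
    min_throughput_rows_per_s: float
    max_error_rate: float        # fraction of failed records, 0.0 to 1.0
    max_cpu_utilization: float   # fraction of available CPU, 0.0 to 1.0


def slo_breaches(metrics: Mapping[str, float], slo: Slo) -> List[str]:
    """Return the names of the objectives that the snapshot violates."""
    breaches = []
    if metrics["throughput_rows_per_s"] < slo.min_throughput_rows_per_s:
        breaches.append("throughput")
    if metrics["error_rate"] > slo.max_error_rate:
        breaches.append("error_rate")
    if metrics["cpu_utilization"] > slo.max_cpu_utilization:
        breaches.append("cpu_utilization")
    return breaches


slo = Slo(min_throughput_rows_per_s=5_000, max_error_rate=0.01, max_cpu_utilization=0.85)
snapshot = {"throughput_rows_per_s": 4_200, "error_rate": 0.002, "cpu_utilization": 0.91}
print(slo_breaches(snapshot, slo))  # ['throughput', 'cpu_utilization']
```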

What challenges did Hypebeast face when transitioning to full-scale data observability?

One major challenge was shifting the company culture from being data-aware to truly data-driven. On the technical side, integrating new observability tools into the existing infrastructure and managing the initial investment in time and resources also posed hurdles.

How does data observability improve the value of a data catalog?

Data observability enhances a data catalog by adding continuous monitoring, data lineage tracking, and real-time alerts. This means organizations can not only find their data but also trust its accuracy, freshness, and consistency. By integrating observability tools, a catalog becomes part of a dynamic system that supports SLA compliance and proactive data governance.

What role does containerization play in data observability?

Containerization enhances data observability by enabling consistent and isolated environments, which simplifies telemetry instrumentation and anomaly detection. It also supports better root cause analysis when issues arise in distributed systems or microservices architectures.

Why is data observability gaining momentum now, even though software observability has been around for a while?

Great question! Software observability took off in the 2010s with the rise of cloud-native apps, but data observability is catching up fast. As businesses start treating data as a mission-critical asset—especially with the growth of AI and cloud data platforms like Snowflake—the need for real-time visibility, data reliability, and governance has become urgent. We're in the early innings, but the pace is accelerating quickly.

What are some common consequences of bad data?

Bad data can lead to a range of issues including financial losses, poor strategic decisions, compliance risks, and reduced team productivity. Without proper data quality monitoring, companies may struggle with inaccurate reports, failed analytics, and even reputational damage. That’s why having strong data observability tools in place is so critical.
