Coverage without compromise.

Grow monitoring coverage intelligently as your stack scales, and do more with fewer resources thanks to tooling that reduces maintenance burden, improves signal-to-noise ratio, and helps you understand impact across interconnected systems.

Don’t Let Scale Stop You

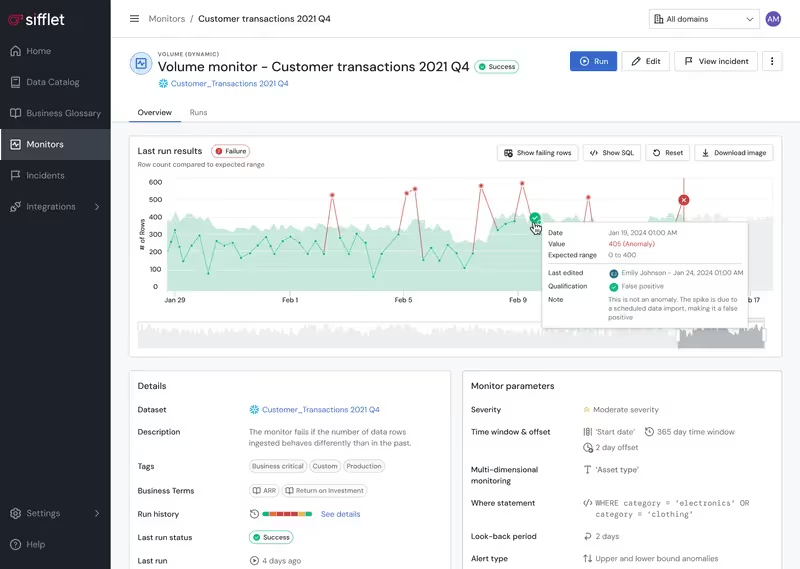

As your stack and data assets scale, so do your monitors. Keeping rules updated becomes a full-time job, and tribal knowledge about monitors gets scattered, so teams struggle to sunset obsolete monitors while adding new ones. Sifflet puts an end to that.

- Optimize monitoring coverage and minimize noise levels with AI-powered suggestions and supervision that adapt dynamically

- Implement programmatic monitoring setup and maintenance with Data Quality as Code (DQaC)

- Automate monitor creation and updates based on data changes

- Reduce maintenance overhead with centralized monitor management

Get Clear and Consistent

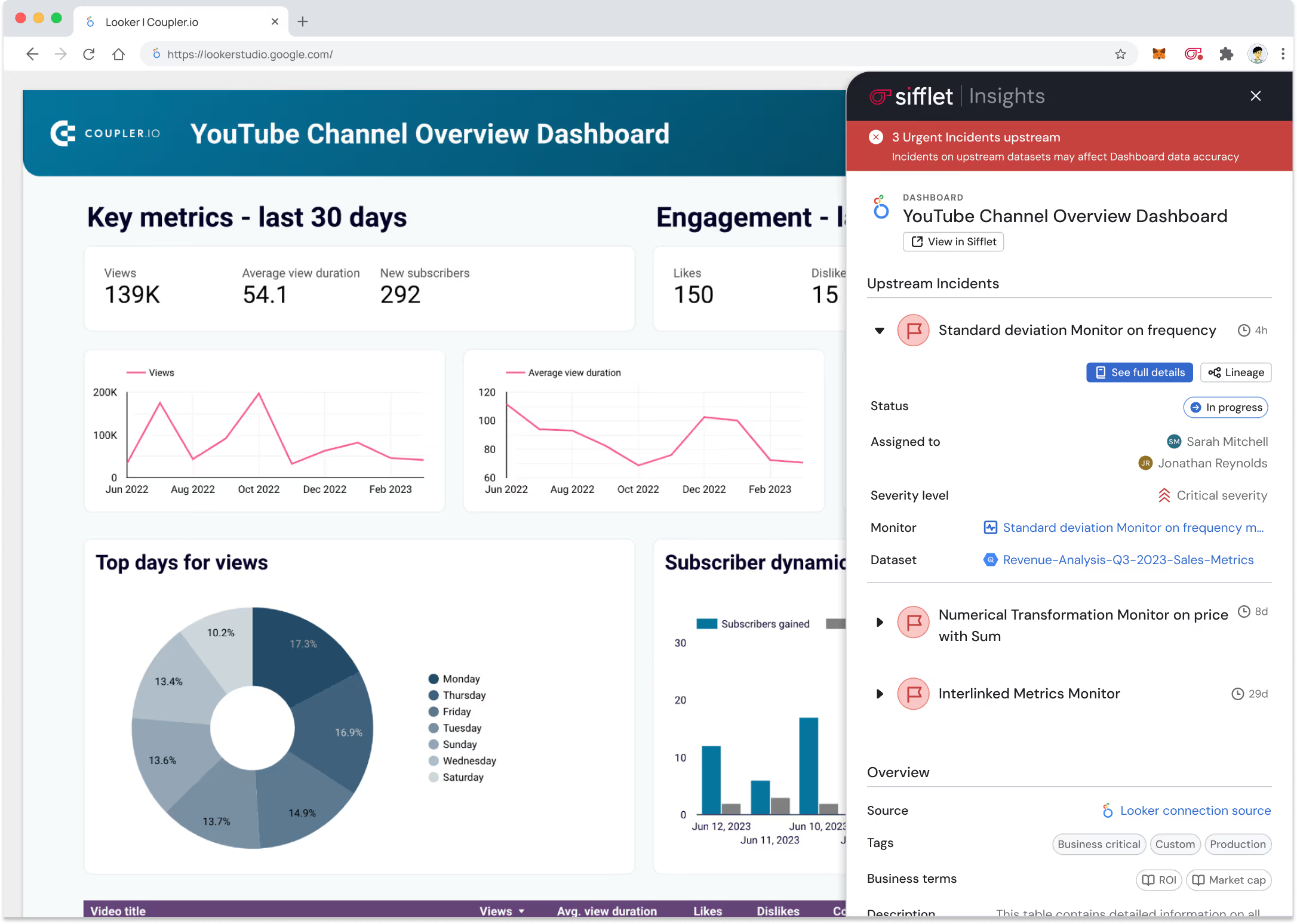

Maintaining consistent monitoring practices across tools, platforms, and internal teams that work across different parts of the stack isn’t easy. Sifflet makes it a breeze.

- Set up consistent alerting and response workflows

- Benefit from unified monitoring across your platforms and tools

- Use automated dependency mapping to show system relationships and benefit from end-to-end visibility across the entire data pipeline

Still have a question in mind?

Contact Us

Frequently asked questions

What role does anomaly detection play in modern data contracts?

Anomaly detection helps identify unexpected changes in data that might signal contract violations or semantic drift. By integrating predictive analytics monitoring and dynamic thresholding into your observability platform, you can catch issues before they break dashboards or compromise AI models. It’s a core feature of a resilient, intelligent metadata layer.
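To make dynamic thresholding concrete, here is a minimal Python sketch. It assumes a simple rule, flagging a value that falls more than `k` standard deviations from the mean of recent history, and does not describe how Sifflet computes its thresholds. The function names, the `k` parameter, and the sample row counts are all hypothetical.

```python
from statistics import mean, stdev


def dynamic_bounds(history, k=3.0):
    """Return (lower, upper) bounds: mean +/- k sample standard deviations.

    The bounds move with the data because they are recomputed from
    whatever recent history is passed in (an assumed, simplified rule).
    """
    mu = mean(history)
    sigma = stdev(history)
    return mu - k * sigma, mu + k * sigma


def is_anomaly(value, history, k=3.0):
    """Flag a value that falls outside the dynamic bounds."""
    lower, upper = dynamic_bounds(history, k)
    return value < lower or value > upper


# Hypothetical daily row counts for one table.
daily_row_counts = [1000, 1020, 980, 1010, 990, 1005, 995]

print(is_anomaly(1008, daily_row_counts))  # False: within normal variation
print(is_anomaly(400, daily_row_counts))   # True: a sharp drop in volume
```

Because the bounds are derived from recent values instead of a fixed number, the same check tightens or loosens as the data's normal behavior changes.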

Can open-source ETL tools support data observability needs?

Yes, many open-source ETL tools like Airbyte or Talend can be extended to support observability features. By integrating them with a cloud data observability platform like Sifflet, you can add layers of telemetry instrumentation, anomaly detection, and alerting. This ensures your open-source stack remains robust, reliable, and ready for scale.

What makes Sifflet different from other data observability tools?

Sifflet stands out as a metadata control plane that connects technical reliability with business context. Unlike point solutions, it offers AI-native automation, full data lineage tracking, and cross-functional accessibility, making it ideal for organizations that need to scale trust in their data across teams.

What role does metadata tagging play in building a strong data monitoring strategy?

Metadata tagging is the signal layer behind effective monitoring. By tagging datasets with key attributes like ownership, business domain, and SLA tiers, you give your observability tools the context they need to prioritize alerts, enforce data contracts, and maintain SLA compliance. At Sifflet, we help automate and validate tagging to keep your monitoring strategy robust and scalable.
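As an illustration of how tags can drive alert prioritization, the Python sketch below sorts alerts by the SLA tier attached to each dataset. The tier names, the `Alert` fields, and the datasets are hypothetical examples, not Sifflet's data model.

```python
from dataclasses import dataclass

# Hypothetical SLA tiers; a lower number means a stricter SLA.
SLA_PRIORITY = {"gold": 0, "silver": 1, "bronze": 2}


@dataclass
class Alert:
    dataset: str
    owner: str
    domain: str
    sla_tier: str


def prioritize(alerts):
    """Order alerts so stricter SLA tiers come first; unknown tiers go last."""
    return sorted(
        alerts,
        key=lambda a: SLA_PRIORITY.get(a.sla_tier, len(SLA_PRIORITY)),
    )


alerts = [
    Alert("web_logs", "platform-team", "engineering", "bronze"),
    Alert("revenue_daily", "finance-team", "finance", "gold"),
    Alert("crm_contacts", "sales-ops", "sales", "silver"),
]

for alert in prioritize(alerts):
    print(alert.sla_tier, alert.dataset, alert.owner)
```

The ownership tag travels with each alert, so the same metadata that sets the priority also tells you who should respond.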

Is Sifflet suitable for non-technical users who want to contribute to data quality?

Yes, and that’s one of the things we’re most excited about! Sifflet empowers non-technical users to define custom monitoring rules and participate in data quality efforts without needing to write dbt code. It’s all part of building a culture of shared responsibility around data governance and observability.

Why is data observability more than just monitoring?

Great question! At Sifflet, we believe data observability is about operationalizing trust, not just catching issues. It’s the foundation for reliable data pipelines, helping teams ensure data quality, track lineage, and resolve incidents quickly so business decisions are always based on trustworthy data.

How does Sifflet help ensure SLA compliance and data reliability?

Sifflet supports SLA compliance by continuously monitoring key data quality metrics and surfacing issues before they impact business decisions. With automated anomaly detection, real-time alerts, and root cause analysis, our observability platform helps teams maintain data reliability and stay ahead of potential SLA breaches.

What exactly is data freshness, and why does it matter so much in data observability?

Data freshness refers to how current your data is relative to the real-world events it's meant to represent. In data observability, it's one of the most critical metrics because even accurate data can lead to poor decisions if it's outdated. Whether you're monitoring financial trades or patient records, stale data can have serious business consequences.
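A freshness check can be as simple as comparing a dataset's last update time against an allowed lag. The Python sketch below assumes you already know that timestamp; the function names, the one-hour limit, and the sample times are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def freshness_lag(last_updated: datetime, now: datetime) -> timedelta:
    """Return how far the latest update trails the current time."""
    return now - last_updated


def is_stale(last_updated: datetime, now: datetime, max_lag: timedelta) -> bool:
    """A dataset is stale when its lag exceeds the allowed maximum."""
    return freshness_lag(last_updated, now) > max_lag


# Hypothetical timestamps: the table was last loaded at 09:30 UTC.
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
last_updated = datetime(2024, 1, 1, 9, 30, tzinfo=timezone.utc)

print(freshness_lag(last_updated, now))                 # 2:30:00
print(is_stale(last_updated, now, timedelta(hours=1)))  # True
```

The right `max_lag` depends on the use case: a trading feed may tolerate seconds, while a weekly report may tolerate days.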
