When a fire starts, the flames travel at a general maximum speed of 16 to 20 km/hour to ignite adjacent objects and continue to burn until the fire is actively extinguished or oxygen is exhausted. Now imagine that the fire is a broken pipeline that, if unattended to, will spread flames across your entire data value chain, burning resources and, along with them, your precious production data, including the CEO’s favorite dashboard. You want to play firefighter and stop the damage from spreading, don't you? Well, keep on reading.

We are excited to announce that our Flow Stopper feature, previously in PP (private preview), is now generally available! Flow Stopper is an innovative way to stop vulnerable pipelines from running at the orchestration layer before the damage moves to the production layer giving Data teams even more tools to get proactive with Data Reliability under Sifflet Full Data Stack Observability framework.

How does it work?

Sifflet allows automated coverage of your most critical Data Assets with fundamental Data Quality rules thanks to the Auto-coverage feature. If you are looking for something more specific to your business, our 50+ templates allow you to cover even the most unorthodox use cases.

Next, Sifflet will connect to your orchestrator of choice, either through a built-in connector or via CLI. The rule will then be inserted and the test executed at the pipeline level giving Data Engineers a chance to pause the pipeline in case of anomaly and troubleshoot it before it further propagates and becomes a business problem.

More details can be found in our technical documentation or on demand.

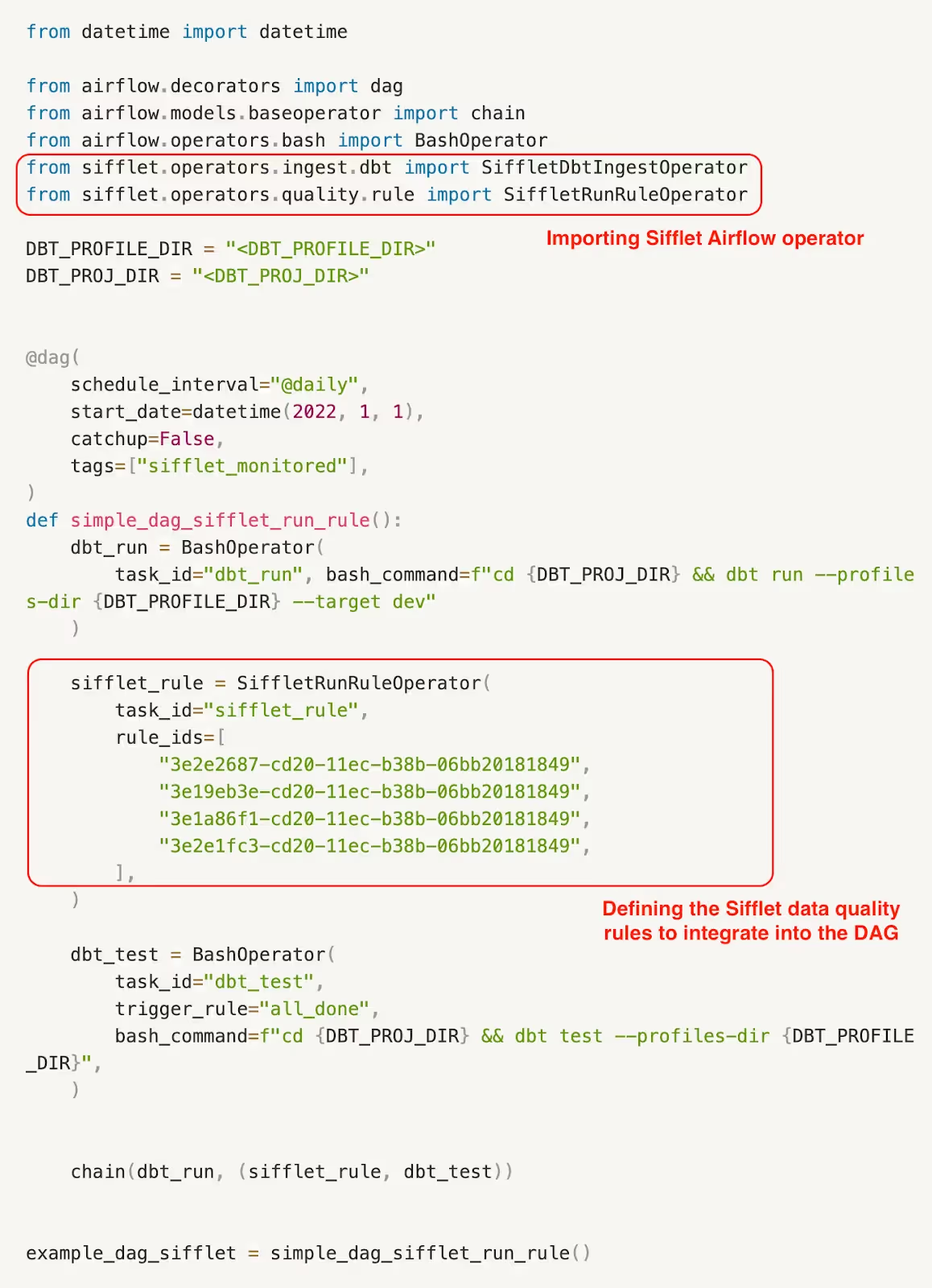

A simple illustrative example:

In the following example, we will be using:

- Dbt Core: for data transformation and documentation

- Snowflake: for data storage

- Airflow: for orchestration

The plan is to:

- Upload the data from a csv file into Snowflake

- Transform the data with a dbt model and ingest it into a Snowflake table

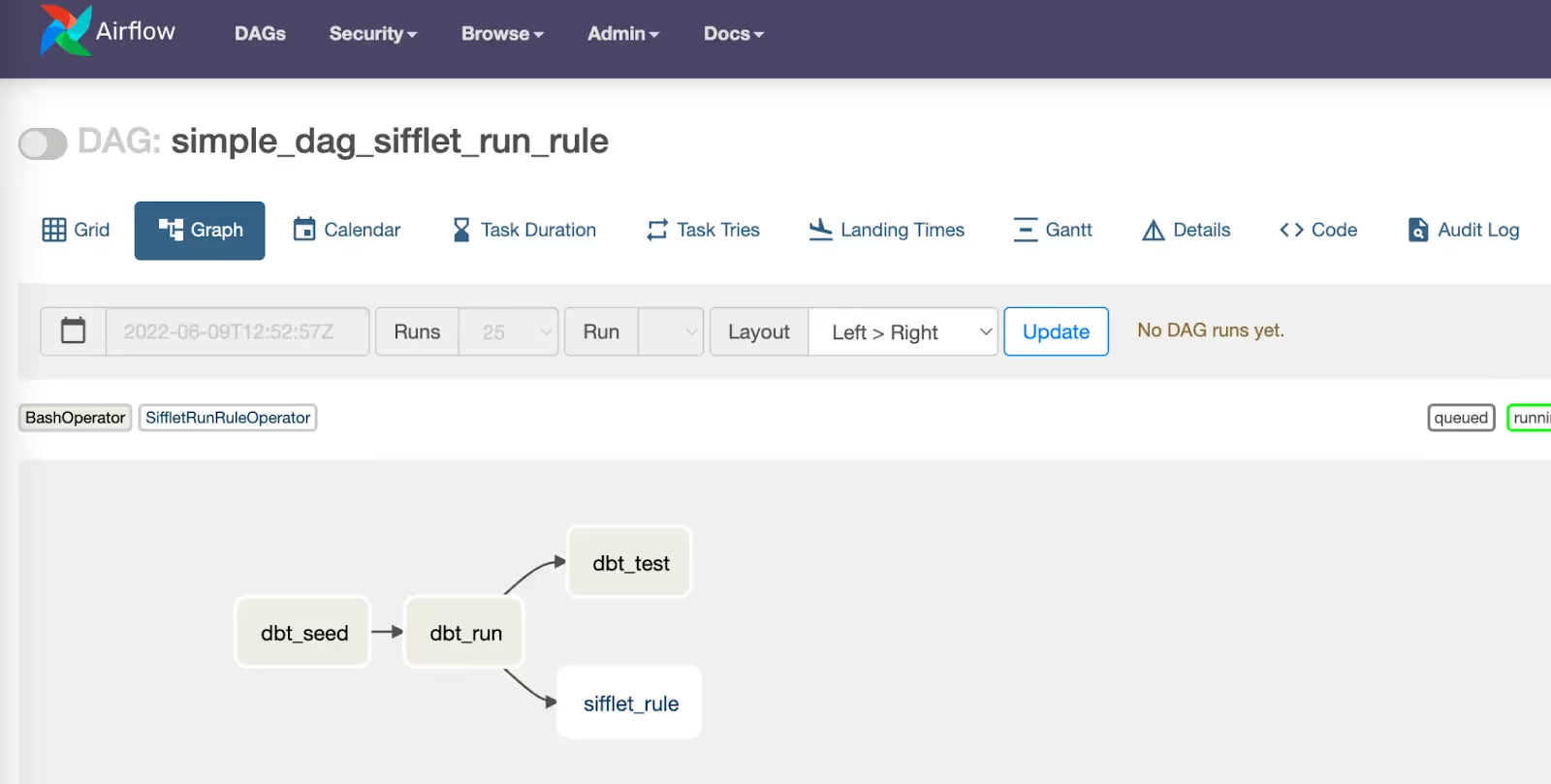

- Run data quality checks on the new data transformed. In this example, we choose to illustrate a use case where the user is leveraging both dbt tests and a Sifflet ML-based DQ rule, both of which Sifflet is able to capture. The idea is to monitor the data while it is being transformed in the running pipeline which translates into the following DAG:

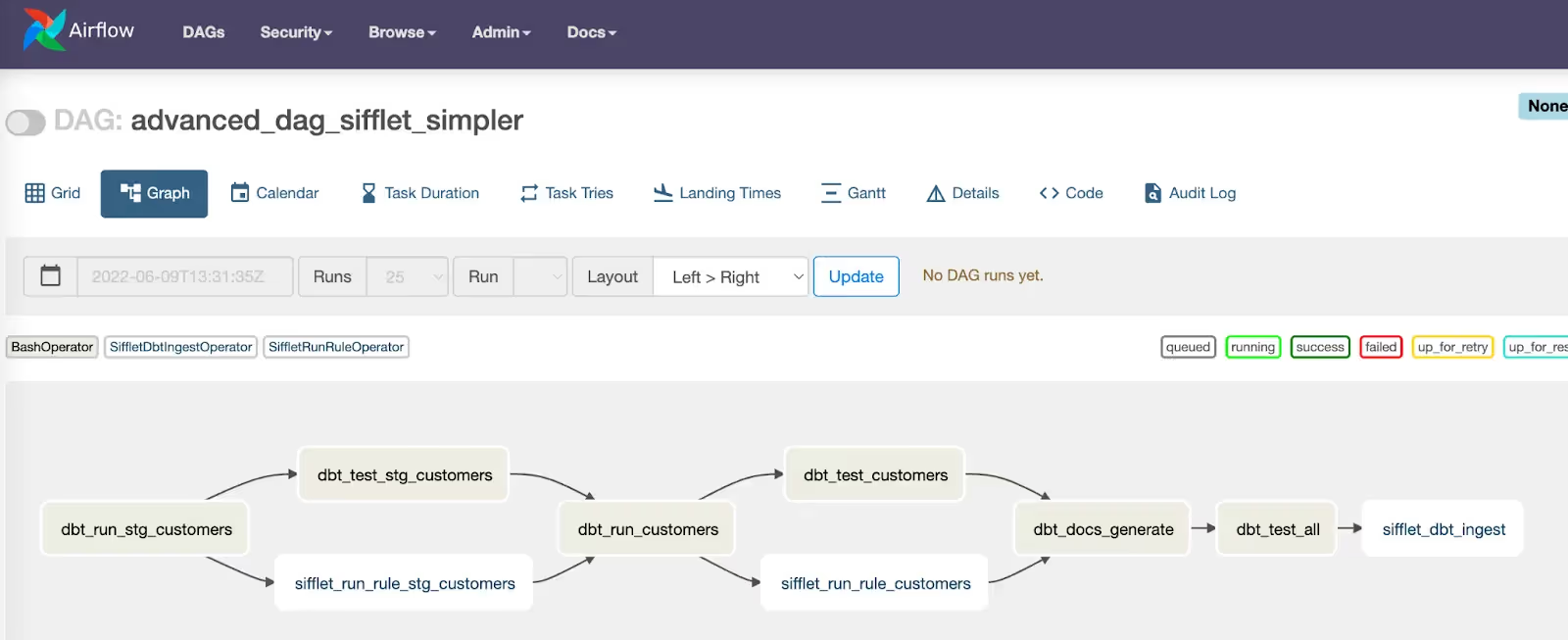

The following is a more complex scenario where Sifflet Flow Stopper is used to test the data while transiting from staging to production. At every step of the DAG, users can choose to stop the flow once an anomaly is detected.

Why is it important?

According to research done by Wakefield, Data Engineers spend 44% of their time maintaining and troubleshooting Data Pipelines.

A Data Engineer who spends nearly half of their time dealing with broken pipelines is:

- Not focusing on other more innovative ways to make the company data-driven

- Not having the best interactions with data consumers and other stakeholders

- Not enjoying the job and is likely to leave the company

- Etc.

The company this Data Engineer works for is:

- Not allocating resources properly

- Not shipping digital innovative products fast enough to remain competitive

- Likely dealing with elevated hard-to-explain infrastructure costs

- Not a true Data-Driven Company

- Etc.

This feature is a way for Data Engineers to integrate tests into their pipelines at the orchestrator level to ensure continuous monitoring and avoid business repercussions and infrastructure costs that can arise from a running broken pipeline. Reach out for more details contact@siffletdata.com

-p-500.png)