Cost Observability

Cost-efficient data pipelines

Pinpoint cost inefficiencies and anomalies thanks to full-stack data observability.

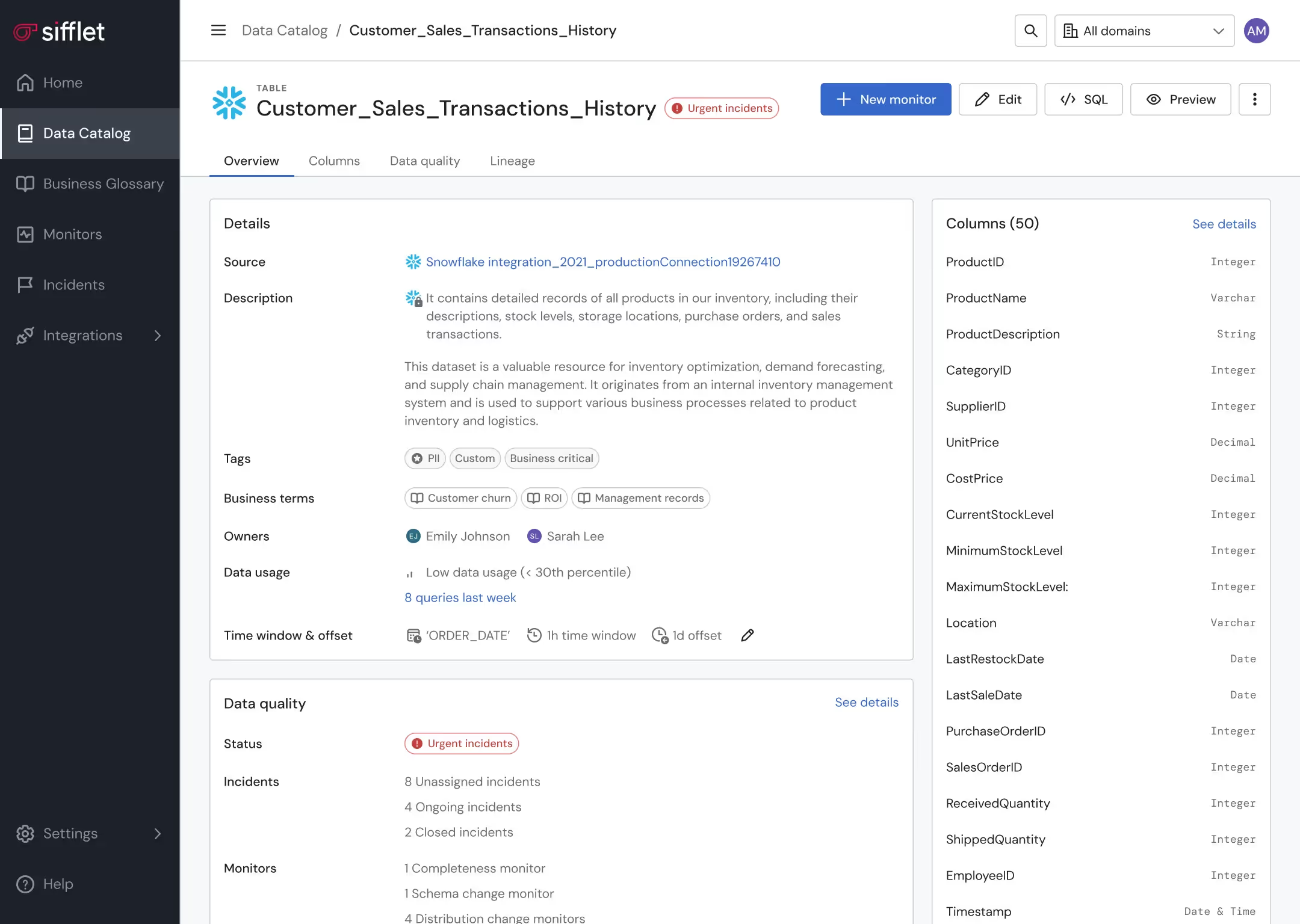

Data asset optimization

- Leverage lineage and Data Catalog to pinpoint underutilized assets

- Get alerted to unexpected changes in data consumption patterns

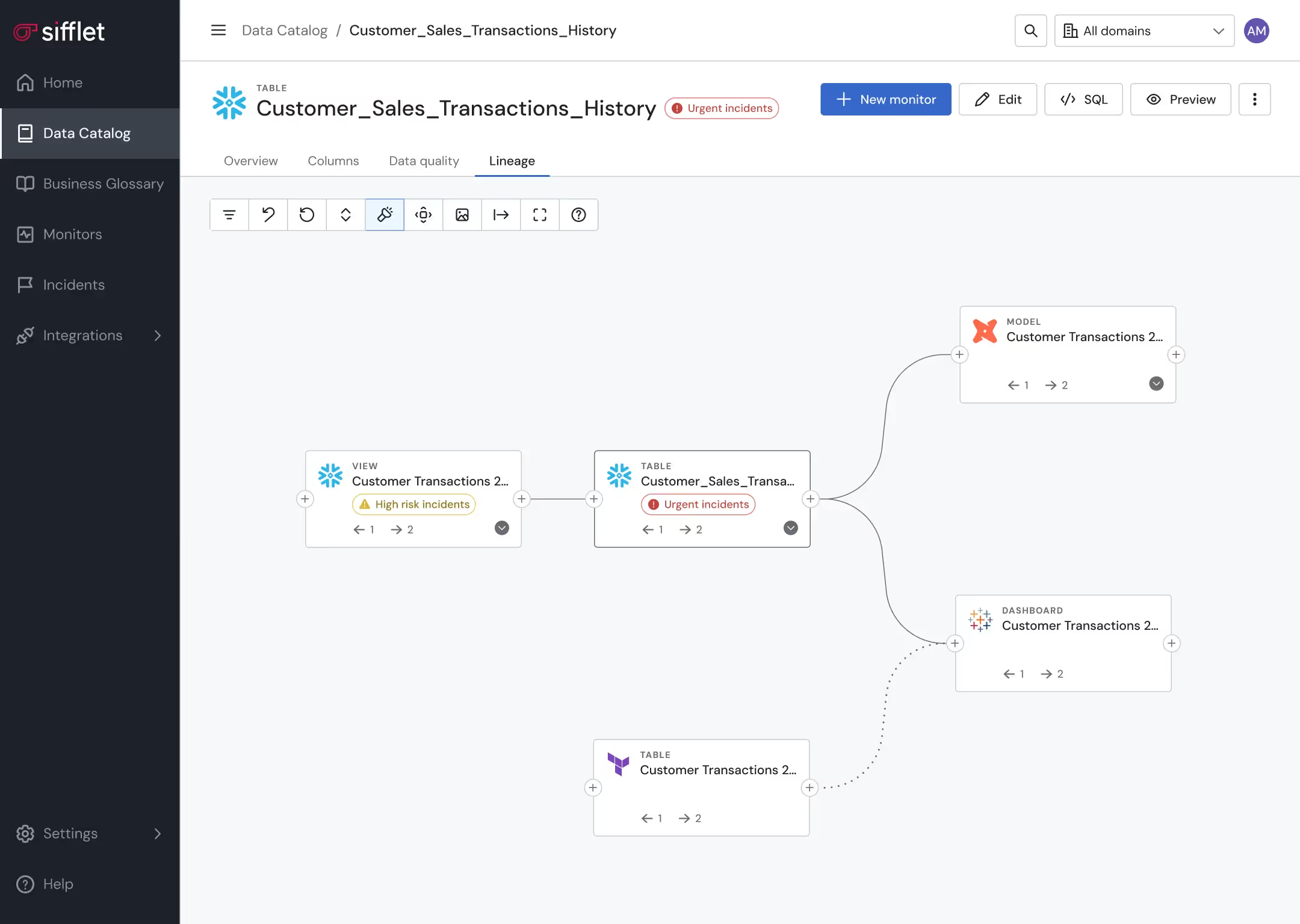

Proactive data pipeline management

Stop pipelines from running as soon as a data quality anomaly is detected.
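One way to picture this kind of gate is a small check that runs before the pipeline does. The sketch below is illustrative only: `QualityCheck`, `PipelineBlocked`, and `run_if_healthy` are hypothetical names chosen for this example, not part of Sifflet's API.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, TypeVar

T = TypeVar("T")


@dataclass(frozen=True)
class QualityCheck:
    """Result of one data quality check (hypothetical structure)."""
    name: str
    passed: bool


class PipelineBlocked(Exception):
    """Raised when at least one quality check has failed."""


def run_if_healthy(checks: Iterable[QualityCheck], pipeline: Callable[[], T]) -> T:
    """Run `pipeline` only if every check passed; otherwise raise PipelineBlocked."""
    failed = [check.name for check in checks if not check.passed]
    if failed:
        raise PipelineBlocked("blocked by failed checks: " + ", ".join(failed))
    return pipeline()
```

Blocking before the run, rather than cleaning up afterwards, avoids spending compute on data that would have to be reprocessed anyway.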

Frequently asked questions

How does data observability improve the value of a data catalog?

Data observability enhances a data catalog by adding continuous monitoring, data lineage tracking, and real-time alerts. This means organizations can not only find their data but also trust its accuracy, freshness, and consistency. By integrating observability tools, a catalog becomes part of a dynamic system that supports SLA compliance and proactive data governance.

How does SQL Table Tracer handle complex SQL features like CTEs and subqueries?

SQL Table Tracer uses a Monoid-based design to handle complex SQL structures like Common Table Expressions (CTEs) and subqueries. This approach allows it to incrementally and safely compose lineage information, ensuring accurate root cause analysis and data drift detection.
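To make the monoid idea concrete, here is a minimal sketch in Python. It is not SQL Table Tracer's actual code: the `Lineage` type, `combine_all`, and `resolve_cte` are hypothetical names used only to show how an identity element and an associative `combine` let lineage from nested query parts be merged piece by piece.

```python
from dataclasses import dataclass
from functools import reduce
from typing import FrozenSet, Iterable


@dataclass(frozen=True)
class Lineage:
    """Set of table names a query fragment reads from."""
    tables: FrozenSet[str] = frozenset()

    def combine(self, other: "Lineage") -> "Lineage":
        # Set union is associative, which is what makes this a monoid.
        return Lineage(self.tables | other.tables)


EMPTY = Lineage()  # identity element: combining with it changes nothing


def table(name: str) -> Lineage:
    return Lineage(frozenset({name}))


def combine_all(parts: Iterable[Lineage]) -> Lineage:
    """Fold any number of fragments into one lineage."""
    return reduce(Lineage.combine, parts, EMPTY)


def resolve_cte(name: str, cte_body: Lineage, main_query: Lineage) -> Lineage:
    """Swap a reference to the CTE `name` for the tables its body reads."""
    remaining = Lineage(main_query.tables - {name})
    return cte_body.combine(remaining)


# WITH recent AS (SELECT ... FROM orders JOIN customers ...)
# SELECT ... FROM recent JOIN (SELECT ... FROM payments) p
cte_body = combine_all([table("orders"), table("customers")])
main_query = combine_all([table("recent"), table("payments")])
lineage = resolve_cte("recent", cte_body, main_query)
```

Because `combine` is associative and `EMPTY` is neutral, subqueries can be processed in any grouping and empty fragments can be folded in without special cases.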

What kind of usage insights can I get from Sifflet to optimize my data resources?

Sifflet helps you identify underused or orphaned data assets through lineage and usage metadata. By analyzing this data, you can make informed decisions about deprecating unused tables or enhancing monitoring for critical pipelines. It's a smart way to improve pipeline resilience and reduce unnecessary costs in your data ecosystem.
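As a rough illustration of combining lineage with usage metadata, the sketch below flags assets that nothing reads. The input shapes, the `find_underused` function, and the `min_queries` threshold are assumptions made for this example, not Sifflet's data model.

```python
from typing import Dict, Iterable, List


def find_underused(
    assets: Iterable[str],
    downstream: Dict[str, List[str]],
    query_counts: Dict[str, int],
    min_queries: int = 5,
) -> Dict[str, str]:
    """Label assets as 'orphaned' or 'underused'.

    orphaned:  no downstream consumers in lineage and no queries at all
    underused: fewer than `min_queries` queries in the observed window
    """
    report: Dict[str, str] = {}
    for asset in assets:
        has_consumers = bool(downstream.get(asset))
        queries = query_counts.get(asset, 0)
        if not has_consumers and queries == 0:
            report[asset] = "orphaned"
        elif queries < min_queries:
            report[asset] = "underused"
    return report


# Hypothetical sample metadata
assets = ["raw.orders", "stg.orders", "tmp.backfill", "mart.legacy_kpis"]
downstream = {"raw.orders": ["stg.orders"], "stg.orders": []}
query_counts = {"raw.orders": 120, "stg.orders": 40, "mart.legacy_kpis": 2}

report = find_underused(assets, downstream, query_counts)
```

In this sample, `tmp.backfill` has no consumers and no queries, so it is a candidate for deprecation, while `mart.legacy_kpis` is still read occasionally and deserves a closer look first.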

How does data observability differ from traditional data quality monitoring?

Great question! Traditional data quality monitoring focuses on pre-defined rules and tests, but it often falls short when unexpected issues arise. Data observability, on the other hand, provides end-to-end visibility using telemetry instrumentation like metrics, metadata, and lineage. This makes it possible to detect anomalies in real time and troubleshoot issues faster, even in complex data environments.

What trends are driving the demand for centralized data observability platforms?

The growing complexity of data products, especially with AI and real-time use cases, is driving the need for centralized data observability platforms. These platforms support proactive monitoring, root cause analysis, and incident response automation, making it easier for teams to maintain data reliability and optimize resource utilization.

Why is semantic quality monitoring important for AI applications?

Semantic quality monitoring ensures that the data feeding into your AI models is contextually accurate and production-ready. At Sifflet, we're making this process seamless with tools that check for data drift, validate schema, and maintain high data quality without manual intervention.

What’s new with the Distribution Change monitor and how does it improve anomaly detection?

The upgraded Distribution Change monitor now focuses on tracking volume shifts between specific categories, like product lines or customer segments. This makes anomaly detection more precise by reducing noise and highlighting only the changes that truly matter. It's a smarter way to stay on top of data drift and ensure your metrics reflect reality.
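A simplified way to think about category-level shifts is to compare each category's share of rows between a reference period and the current one. The sketch below assumes that approach; the function names and the 10-point threshold are hypothetical and do not describe how the Distribution Change monitor is implemented.

```python
from collections import Counter
from typing import Dict, Iterable


def category_shares(values: Iterable[str]) -> Dict[str, float]:
    """Fraction of rows that fall into each category."""
    counts = Counter(values)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {category: n / total for category, n in counts.items()}


def share_shifts(
    reference: Iterable[str],
    current: Iterable[str],
    threshold: float = 0.10,
) -> Dict[str, float]:
    """Return categories whose share moved by at least `threshold`."""
    ref = category_shares(reference)
    cur = category_shares(current)
    shifts: Dict[str, float] = {}
    for category in sorted(set(ref) | set(cur)):
        delta = cur.get(category, 0.0) - ref.get(category, 0.0)
        if abs(delta) >= threshold:
            shifts[category] = delta
    return shifts


# Hypothetical product-line column, last week vs. this week
last_week = ["laptops"] * 50 + ["phones"] * 40 + ["tablets"] * 10
this_week = ["laptops"] * 20 + ["phones"] * 70 + ["tablets"] * 10

shifts = share_shifts(last_week, this_week)
```

Here `laptops` and `phones` are reported because their shares moved by 30 points each, while `tablets` stays out of the result, which is the noise reduction the answer above describes.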

Can Sifflet help me monitor data drift and anomalies beyond what dbt offers?

Absolutely! While dbt is fantastic for defining tests, Sifflet takes it further with advanced data drift detection and anomaly detection. Our platform uses intelligent monitoring templates that adapt to your data’s behavior, so you can spot unexpected changes like missing rows or unusual values without setting manual thresholds.
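To show what "no manual thresholds" can look like in practice, the sketch below derives bounds from a metric's own history using the median and the median absolute deviation (MAD). This is a generic robust-statistics technique chosen for illustration, not a description of Sifflet's models; `adaptive_bounds`, `is_anomalous`, and the factor `k` are assumptions.

```python
from statistics import median
from typing import Sequence, Tuple


def adaptive_bounds(history: Sequence[float], k: float = 3.0) -> Tuple[float, float]:
    """Derive lower and upper bounds from past values instead of fixed limits."""
    center = median(history)
    mad = median([abs(x - center) for x in history])
    # 1.4826 scales the MAD to match the standard deviation for normal data.
    spread = 1.4826 * mad
    return center - k * spread, center + k * spread


def is_anomalous(value: float, history: Sequence[float], k: float = 3.0) -> bool:
    """Flag a value that falls outside the bounds learned from history."""
    low, high = adaptive_bounds(history, k)
    return value < low or value > high


# Hypothetical daily row counts for one table
daily_rows = [1000, 1020, 980, 1010, 990, 1005, 995]

normal_day = is_anomalous(1015, daily_rows)    # within the learned range
missing_rows = is_anomalous(400, daily_rows)   # far below the learned range
```

The bounds move as the history changes, so a table that grows steadily does not need its limits edited by hand.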
