The modern data stack or the Data Stack is a collection of cloud-native applications that serve as the foundation for an enterprise data infrastructure. The concept of modern data stack has been quickly gaining popularity and has become the de facto way for organizations of various sizes to extract value from data. Like an industrial value chain, the modern data stack follows a logic of ingesting, transforming, storing, and productizing data.

This blog is an introduction to the modern data stack, and in the following articles, we will dive into each of the different components.

What is the modern data stack?

The modern data stack can be defined as a collection of different technologies used to transform raw data into actionable business insights. The infrastructure that derives from these various tools can be used to reduce the complexity of managing a data platform while ensuring that the data is exploited to its fullest. Companies can adopt the tools that best fit their needs, and different types of layers can be added depending on the use case.

A brief history of the modern data stack

For decades, on-premise databases were enough for the limited amount of data companies stored for their use cases. Over time, however, data amounts exponentially increased, requiring companies to find solutions to keep all of the information that was coming their way. This led to the emergence of new technologies that allowed organizations to deal with large amounts of data like Hadoop, Vertica, and MongoDB. This was the Big Data era in the 2000s when systems were typically distributed SQL or NoSQL.

The Big Data era lasted less than a decade when it was interrupted by the widespread adoption of cloud technologies in the early and mid-2010s. Traditional, on-premise Big Data technologies struggled to move to the cloud. Their higher complexity, cost, and required expertise put them at a disadvantage compared to the more agile cloud data warehouse. This all started in 2010 with Redshift - followed a couple of years later by BigQuery and Snowflake.

The changes that enabled the widespread adoption of the modern data stack

It is important to remember that the modern data stack as we currently know it is a very recent development in data. Only very recent changes in technology allowed companies to exploit the potential of their data fully. Let’s walk through some of the key developments that enabled the adoption of the modern data stack.

The rise of the cloud data warehouse

In 2012, when Amazon’s Redshift was launched, the data warehousing landscape changed forever. All of the other solutions in the market today - like Google BigQuery and Snowflake - followed the revolution set by Amazon. The development of these data warehousing tools is linked to the difference between MPP (Massively Parallel Processing) or OLAP systems like Redshift and OLT systems like PostgreSQL. But we’ll discuss this topic in more detail on our blog focused on data warehousing technologies.

So what changed with the cloud data warehouse?

- Speed level: cloud data warehouses significantly decrease the processing time of SQL queries. Before Redshit, the slowness of calculations was the main obstacle to the at-scale exploitation of data.

- Connectivity: cloud data warehouses connecting data sources to a data warehouse is much easier in the cloud. On top of that, cloud data warehouses manage more formats and data sources than on-premise data warehouses.

- User accessibility: on-premise data warehouses are managed by a central team. This means that there is restricted or indirect access for end-users. On the other hand, cloud data warehouses are accessible and usable by all target users. This naturally has repercussions at the organizational level. Namely, on-premise data warehouses limit the number of requests to save server resources, limiting the potential to fully exploit available data.

- Scalability: the rise of cloud data warehouses has made it possible to make data accessible to an increasing number of people, democratizing companies within organizations.

- Affordability: the pricing models of cloud data warehouses are much more flexible than traditional on-premise solutions - like Informatica and Oracle - because they are based on the volume of data stored and/or the consumed computing resources.

The move from ETL to ELT

In a previous blog, we’ve already talked about the importance of the transition from ETL to ELT. In short, with an ETL process, the data is transformed before being loaded into the data warehouse. On the other hand, with ELT, the unstructured data is loaded into the data warehouse before making any transformations. Data transformation consists of cleaning, checking for duplicates, adapting the data format to the target database, and more. Making all these transformations before the data is loaded into the warehouse allows companies to avoid overloading their databases. This is why traditional data warehouse solutions relied so heavily on ETL. With the rise of the cloud, storage was no longer an issue, and pipeline management’s costs decreased dramatically. This enabled organizations to load all of their data into a database without having to make critical strategic decisions at the extraction and ingestion phase.

Self-service analytics and data democratization

The rise of the cloud data warehouse has not only contributed to the transition from ETL to ELT but also the widespread adoption of BI tools like Power BI, Looker, and Tableau. These easy-to-use solutions allow more and more personas within organizations to access data and make data-driven business decisions.

But is the cloud data warehouse the only thing that matters?

It’s essential to remember that merely having a cloud-based platform does not make a data stack a modern data stack. As Jordan Volz wrote in his blog, many cloud architectures fail to meet the requirements to be included in the category. For him, technologies need to meet five main capabilities to be included in the modern data stack.

- Obviously, it needs to be centered around the cloud data warehouse

- It must be offered as a managed service: with minimal set-up effort and configuration from users

- It democratizes data: the tools are built in such a way to make data accessible for as many people as possible within organizations

- Elastic workloads

- It must have automation as a core competency

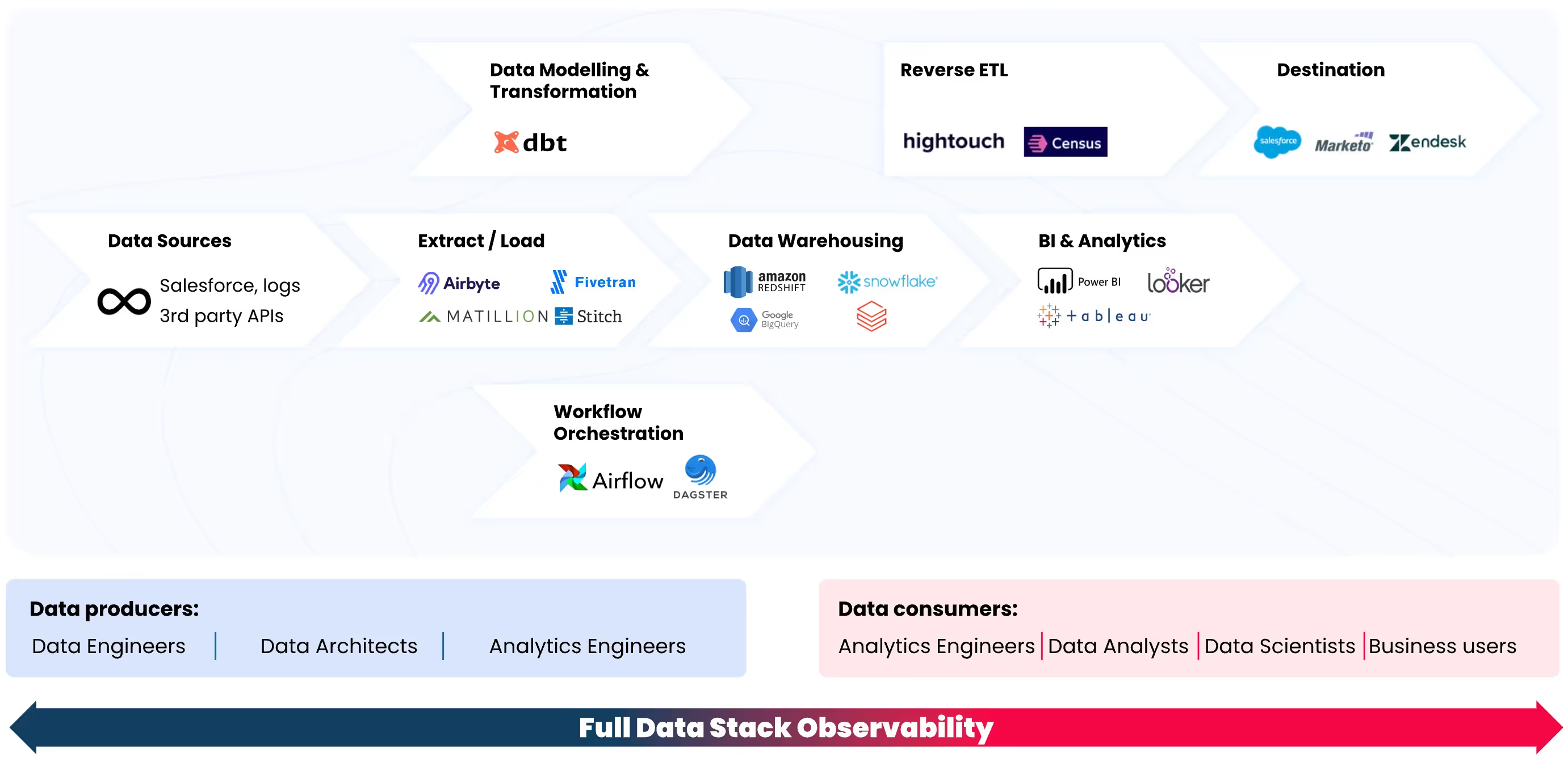

A brief introduction of the main components of the modern data stack

The objective of the modern data stack is to make data more actionable and reduce the time and complexity needed to gain insights from the information in the organization. However, the modern data stack is becoming increasingly complex due to the growing amount of data tools and technologies. Let’s break down some key technologies within the modern data stack. The individual components can vary, but they usually include the following:

Data Integration

Main tools: Airbyte, Fivetran, Stitch

Organizations collect large amounts of data from various systems like databases, CRM systems, application servers, etc. Data integration is the process of extracting and loading the data from these disparate source systems into a single and unified view. Data integration can be defined as the process of sending data from across an enterprise into a centralized system like a data warehouse or a data lake in such a way that it results in a single, unified location for accessing all the information that is flowing through the organization.

Data Transformation/Modelling

Main tools: dbt

Raw data is entirely useless if it doesn’t get structured in such a way that allows organizations to analyze it. This means you need to transform your data before using it to gain insights and make predictions about your business.

Data transformation can be defined as the process of changing the format or structure of data. As we have explained in this blog, data can be transformed at two different stages of the data pipeline. Typically, organizations that make use of on-premise data warehouses utilize an ETL (extract, transform, load) process, in which the transformation happens in the middle - before the data is loaded into the warehouse. However, most organizations today make use of cloud-based warehouses, which makes it possible to increase the storage capacity of the warehouse dramatically - therefore allowing companies to store raw data and transform it when needed. This model is called ELT (extract, load, transform).

Workflow Orchestration

Once you have decided on the transformations, you need to find a way to schedule them so that they run at your preferred frequency. Data orchestration automates the processes related to data ingestion, like bringing the data together from multiple sources, combining it, and preparing it for analysis.

Data Warehousing

Main tools: Snowflake, Firebolt, Google BigQuery, Amazon Redshift

Nothing can reach your data without accessing the warehouse, as it is the one place that connects all the other pieces between each other. So, all your data flows in and out of your data warehouse. This is why we consider it the center of the Modern Data Stack.

Reverse ETL

To put it simply, reverse ETL is the exact inverse process of ETL. Basically, it’s the process of moving data from a warehouse into an external system - like a CRM, an advertising platform, or any other SaaS app - to make the data operational. In other words, reverse ETL allows you to make the data you have in your data warehouse available to your business teams - bridging the gap between the work of data teams and the needs of the final data consumers.

The challenge here is linked to the fact that more and more people are asking for data within organizations. This is why organizations today aim to engage in what is called Operational Analytics, which basically means making the data available to operational teams - like sales, marketing, etc. - for functional use cases. However, the lack of a pipeline moving data directly from the warehouse to the different business applications makes it difficult for business teams to access the cloud data warehouse and make the most out of the available data. The use of the data sitting in the data warehouse is limited to creating dashboards and BI reports. This is where the bridge provided by reverse ETL becomes crucial to use your data entirely.

BI & Analytics

Main tools: Power BI, Looker, Tableau

BI and analytics include the applications, infrastructure, and tools that enable access to the data collected by the organization to optimize business decisions and performance.

Data Observability

We are biased here, but data observability has become an integral part of the modern data stack. For Gartner, data observability has become essential to support and enhance any modern data architecture. As we explain in this blog, data observability is a concept from the DevOps world adapted to the context of data, data pipelines, and platforms. Similar to software observability, data observability uses automation to monitor data quality to identify the potential data issues before they become business issues. In other words, it empowers data engineers to monitor the data and quickly troubleshoot any possible problem.

As organizations deal with more data daily, their data stacks become increasingly complex. At the same time, bad data is not tolerated, making it increasingly critical to have a holistic view of the state of the data pipelines to monitor for loss of quality, performance, or efficiency. On top of this, organizations need to identify data failures before they can propagate, creating insights on what to do next in the case of a data catastrophe.

Data observability can take the following forms:

- Observing data & metadata: this means monitoring the data and its metadata and changes in historic patterns and observing accuracy and completeness.

- Observing data pipelines: this means monitoring any changes in the data pipelines and metadata regarding data volume, frequency, schema, and behavior.

- Observing data infrastructure: this means monitoring and analyzing processing layer logs and operational metadata from query logs.

These different forms of data observability help technical personas like data engineers and analytics engineers, but also business personas like data analysts and business analysts.

Is there a perfect stack?

Now you may ask yourself: is there a perfect data stack that every organization should adopt? The quick answer is no. There is no one-size-fits-all approach when it comes to selecting the best tools and technologies to deal with your data. Every organization has a different level of data maturity, different data teams, different structures, processes, and so on. However, we can categorize modern data stacks into three distinct groups: essential, intermediate, and advanced.

Essential data stack

An essential data stack should be able to ingest data, store it, model it - with dbt or custom SQL - and then, depending on the use case, organizations craft their reverse ETL process for operational analytics or invest in a BI tool. Usually, BI tools in essential data stacks are open source tools like Metabase. On top of that, an essential data stack includes a basic form of testing or data quality monitoring.

Intermediate data stack

An intermediate data stack is a bit more sophisticated, and on top of what we described above, it also includes some form of workflow orchestration and a data observability tool. Basic testing and data quality monitoring allow teams to gain visibility over the data assets' quality status. However, they offer no way of knowing how to troubleshoot potential issues quickly. This is where data observability comes in. Data observability signals across the entire data stack - logs, jobs, datasets, pipelines, BI dashboards, data science models, and more - enabling monitoring and anomaly detection at scale.

Advanced data stack

To conclude, an advanced data stack includes the following technologies and tools:

- Integration: extracting and loading the data from one place to another - with tools like Airbyte, Fivetran, and Segment

- Warehousing: storing all your data in one single place - with tools like Snowflake, Firebolt, Google BigQuery, and Amazon Redshift

- Transformation: turning your data into usable data - with tools like dbt

- Workflow orchestration: bringing the data together from different sources, combining it, and preparing it for analysis - with tools like Airflow and Dagster

- Reverse ETL: moving the data from the warehouse into an external system - with tools like Hightouch and Census

- BI & analytics: analyzing all the information your organization has collected and making data-driven decisions - with tools like Power BI, Looker, and Tableau

- Data observability: ensuring that the data is reliable throughout the entire lifecycle of the data - from ingestion to BI tools. Rather than a component, data observability is an overseeing layer of the modern data stack.

Conclusion

Infrastructures, technologies, processes, and practices have changed at a dramatic speed over the past few years. The rise of the cloud, segregation of cloud and storage, and data democratization impeded the modern data stack movement. Keep in mind that there is no one-size-fits-all approach, and it is hugely use-case specific - depending on factors like the data maturity level of the organization, your data team, and more. Find out more about how we think you should build your modern data team based on your organization’s maturity level on this blog.

Coming in the next blogs: A guide to the current modern data stack ecosystem

The data warehouse is considered by many to be the center of the modern data stack. So, to better describe the different processes and tools required at each stage of the data pipeline, we’ve coined the terms “left-hand side of the warehouse” and “right-hand side of the warehouse”. We basically refer to everything that happens before the data warehouse as the “left-hand side” and what happens after the data warehouse as the “right-hand side”. In the following blogs, we’ll describe what happens on the left-hand side of the modern data stack, starting from the first step: data integration.

The Modern Data Stack is not complete without an overseeing observability layer to monitor data quality throughout the entire data journey. Do you want to learn more about Sifflet’s Full Data Stack Observability approach? Would you like to see it applied to your specific use case? Book a demo or get in touch for a two-week free trial!

%2520copy%2520(3).avif)

-p-500.png)