Choosing between Monte Carlo and Acceldata is a lot like choosing a therapist.

One helps you talk through your dashboard trust issues.

The other wants to dig into your childhood infrastructure problems.

We're putting both platforms on the couch and evaluating them across AI capabilities, root cause analysis, predictive analytics, anomaly detection, and data monitoring.

By the end, you'll know whether you need help fixing symptoms… or whether it's time to confront whatever's been living rent-free in your data ecosystem.

Who Is Monte Carlo?

Monte Carlo's pitch boils down to this: you can scale your stack, your dashboards, and your headcount only if you trust the data holding everything together.

The platform follows a Detection-First philosophy. Rather than asking you to define rules table by table, Monte Carlo maps baseline behavior across freshness, volume, schema, and distribution, then alerts you when reality drifts from those patterns.

That ML-first approach shortens setup time and limits the manual configuration typically placed on already-overloaded engineering teams.

Monte Carlo anchors itself at the consumption layer: cloud warehouses and the myriad BI tools downstream. Its premise is straightforward: if you monitor data where people actually use it, you'll spot the issues that matter most.

The platform largely succeeds because its model matches how modern analytics teams operate.

Monte Carlo's Ideal Customer Profile

Monte Carlo delivers the most value when:

- Data engineering teams work in a modern cloud stack

- The enterprise seeks fast time-to-value without the burden of heavy customization

- The primary pain points are broken dashboards, unreliable metrics, and silent failures that slip past traditional monitoring and land on business users

The typical buyer is a mid-to-large enterprise running analytics in Snowflake and Looker, where reliability at the BI layer is non-negotiable and engineering time is at a premium.

When an enterprise needs observability without a long runway to get there, Monte Carlo's model fits cleanly.

Who Is Acceldata?

Acceldata comes from a very different school of thought.

Where Monte Carlo focuses on what breaks at the dashboard, Acceldata assumes the real issues start long before a metric goes red.

Its worldview is simple: data doesn't go bad on its own. Something upstream drives the trauma.

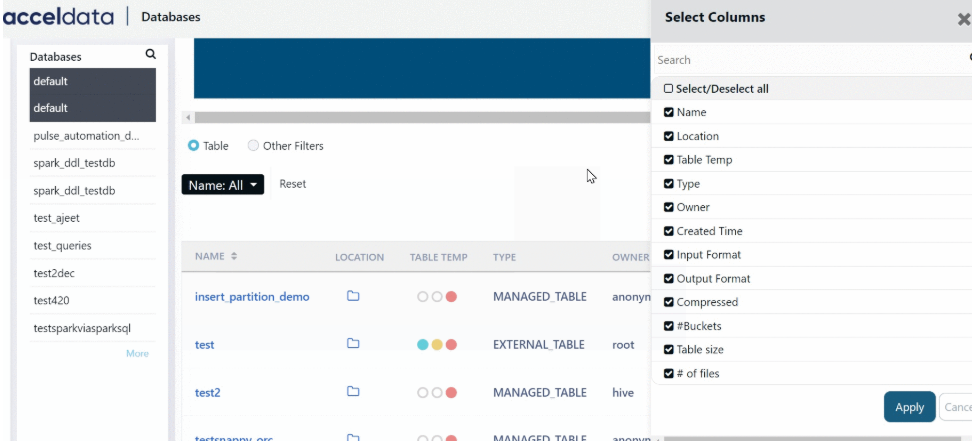

The platform caters to sprawling, multi-layered environments. That means pipelines, compute, storage, streaming systems, cloud warehouses, and the on-prem clusters enterprises still depend on.

Acceldata wants visibility into it all because that's where the root causes usually live.

Its philosophy is Shift-Left and Full-Stack. Catch issues early, and catch them everywhere.

If a Kafka broker is choking or a Hadoop cluster quietly runs out of runway, no amount of downstream testing will save you. Acceldata's architecture surfaces those operational failures before they become data failures.

This model naturally bleeds into FinOps. Acceldata treats cloud spend, resource utilization, and performance degradation as part of the same reliability equation.

For enterprises where cost and quality collide daily, that integrated view becomes part of the platform's appeal.

Acceldata's Ideal Customer Profile

Acceldata delivers the most value when:

- The environment mixes legacy on-prem systems and modern cloud platforms

- Platform engineering teams want visibility into infrastructure, performance monitoring, and spend optimization in addition to data quality

- The enterprise handles high-volume pipelines, reconciliation-heavy migrations, or must meet strict regulatory requirements

A typical Acceldata customer is a global enterprise: a bank, telecom, or large retailer. They're moving petabyte-scale workloads from on-prem into cloud platforms and need visibility across every system involved.

The intros are over, and the diagnosis is next.

Here's how Monte Carlo and Acceldata compare where it counts most.

Monte Carlo vs Acceldata: Head to Head

The first distinction between these platforms lies in how they approach AI. One treats it more or less like a copilot; the other as an operator.

AI and Agentic Observability

Both platforms advertise AI assistance, but their core philosophies mean they present it in markedly different ways.

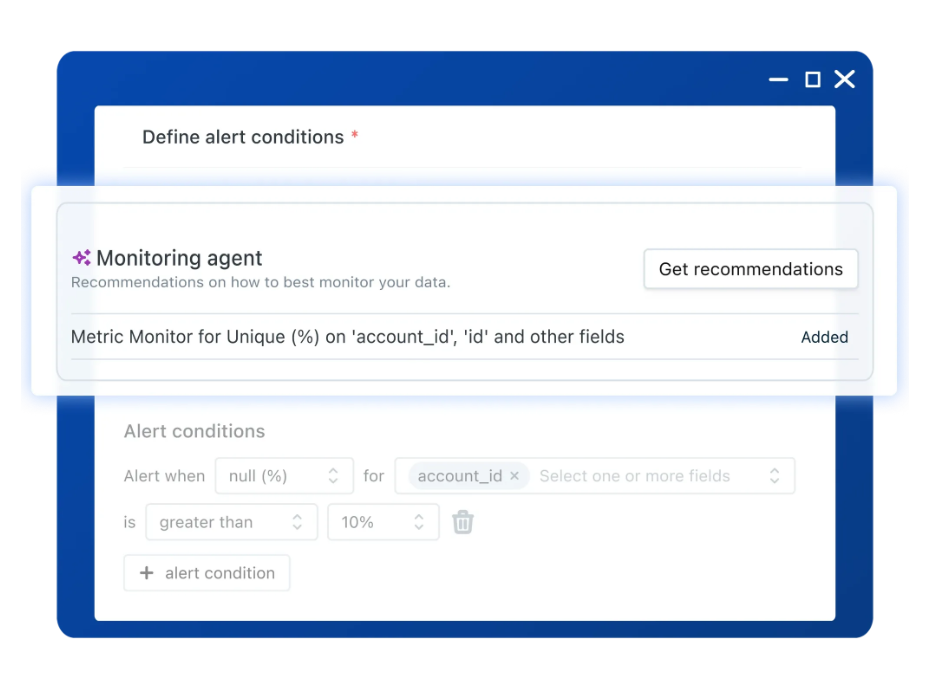

Monte Carlo presents AI as your copilot. When anomalies occur, its Observability Agents generate incident summaries, highlight the SQL queries involved, and recommend rule updates to prevent the same issue from happening again.

The platform's AI assists your manual investigation but doesn't run the workflow. It is an assistive layer that shortens triage time but only coaches you on the repair.

For example, when an alert fires, the agent suggests queries and rule updates to help engineers investigate. The platform assists, but the legwork is still yours.

Acceldata's AI Approach

Acceldata's AI behaves more like an operator. Its Agentic Data Management model plans and executes tasks with limited human intervention. The platform can isolate bad data, quarantine compromised batches, and trigger remediation jobs on its own initiative.

Human oversight still exists, but the platform aims to handle the mechanical work itself.

Case in point: the same anomaly appears. This time, Acceldata's agent quarantines the batch, reruns the pipeline, notifies the owners, and logs the incident without waiting for a human.

It's not hard to see the value in a workflow that identifies issues and fixes them before anyone gets involved.
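To make the operator pattern concrete, here is a minimal sketch of the quarantine, rerun, notify, and log sequence described above. It is not Acceldata's API: `Batch`, `IncidentLog`, `remediate`, and the callbacks passed into it are hypothetical names invented for illustration, and the rerun and notification steps are stubbed out.

```python
from dataclasses import dataclass, field


@dataclass
class Batch:
    # Hypothetical representation of one loaded batch of data.
    batch_id: str
    owner: str
    status: str = "loaded"


@dataclass
class IncidentLog:
    # Hypothetical in-memory incident log.
    entries: list = field(default_factory=list)

    def record(self, message: str) -> None:
        self.entries.append(message)


def remediate(batch, rerun_pipeline, notify, log):
    """Quarantine a bad batch, rerun its pipeline, notify the owner, and log each step."""
    batch.status = "quarantined"
    log.record(f"quarantined {batch.batch_id}")

    succeeded = rerun_pipeline(batch.batch_id)
    # A failed rerun is handed back to a human rather than retried forever.
    batch.status = "reloaded" if succeeded else "needs_review"
    log.record(f"rerun for {batch.batch_id}: {'ok' if succeeded else 'failed'}")

    notify(batch.owner, f"Batch {batch.batch_id} is now {batch.status}")
    log.record(f"notified {batch.owner}")
    return batch.status


# Stand-ins for real integrations such as an orchestrator and a chat or email tool.
sent = []
log = IncidentLog()
batch = Batch(batch_id="orders_2024_06_01", owner="data-eng@example.com")
status = remediate(
    batch,
    rerun_pipeline=lambda batch_id: True,
    notify=lambda owner, message: sent.append((owner, message)),
    log=log,
)
print(status)
print(log.entries)
```

The `needs_review` branch is where human oversight re-enters: the mechanical steps run unattended, but an unsuccessful rerun still ends up in front of a person.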

Final verdict: Acceldata stands out for its autonomous, self-healing workflows.

Acceldata - 10 pts.

Monte Carlo - 7 pts.

Lineage and Root Cause Analysis

Here, the platforms create a familiar dilemma. Both offer lineage and RCA, but they're diagnosing different layers of the same incident.

Acceldata's Root-level Focus

Acceldata connects lineage to infrastructure-level root causes.

It goes well beyond explaining which tables are affected to explaining why a pipeline failed.

Whether that's a memory issue on a Kafka node or a suspended Snowflake warehouse, this correlation is invaluable to platform teams investigating systemic failures.

Monte Carlo's Visual Approach

Highly visual, field-level lineage is Monte Carlo's calling card.

It inspects SQL queries running across the warehouse to pinpoint which columns, tables, and transformations are feeding downstream dashboards.

When a metric breaks, Monte Carlo gives a fast, intuitive answer to the most essential question: What changed?

Monte Carlo's visual model offers the kind of illustrated explanations that analysts and engineers, those closest to the BI layer, need. Clarity more than complexity.
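The idea behind field-level lineage can be sketched as a graph walk: given a column that changed, find everything downstream of it. This is only an illustration under assumed data, not how Monte Carlo builds or stores lineage. The `lineage` mapping and the column and dashboard names in it are hypothetical.

```python
from collections import deque


def downstream_of(lineage, changed):
    """Return every asset reachable downstream from `changed` in a lineage graph."""
    seen = set()
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


# Hypothetical lineage: each key feeds the assets listed as its value.
lineage = {
    "raw.orders.amount": ["staging.orders.amount_usd"],
    "staging.orders.amount_usd": ["marts.revenue.daily_total"],
    "marts.revenue.daily_total": ["dashboard.revenue_overview"],
    "raw.orders.status": ["marts.orders.open_count"],
}

for asset in sorted(downstream_of(lineage, "raw.orders.amount")):
    print(asset)
```

Answering "what changed?" is the same walk in reverse: start from the broken dashboard and follow the edges upstream.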

Final verdict: We lean toward Monte Carlo for data dependency visibility and toward Acceldata for linking data failures to operational root causes. Tie ball game.

Acceldata - 8 pts.

Monte Carlo - 8 pts.

Predictive Analytics

Predictive analytics is where platforms stop merely reacting to incidents and start trying to anticipate them. But what they choose to predict says everything about their priorities.

Monte Carlo's Predictive Model

Monte Carlo studies baseline data behavior.

Once that baseline is locked in, it flags anything outside the norm. Mistimed loads and off-pattern volume spikes, for instance, are treated as anomalies that trigger alerts and warrant investigation.

The platform's objective is simple: warn you before something breaks downstream, not after the CEO is staring at an empty chart.
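Baseline-and-deviation detection is easy to picture with a toy example. The sketch below uses a plain z-score over recent daily row counts. That is a simple stand-in chosen for illustration, not Monte Carlo's actual model, and the function name, threshold, and sample numbers are all assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, threshold=3.0):
    """Flag `latest` if it sits more than `threshold` standard deviations from the baseline."""
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        # A perfectly flat history makes any change a deviation.
        return latest != baseline
    return abs(latest - baseline) / spread > threshold


# Hypothetical daily row counts for one table over the past week.
daily_row_counts = [10_120, 9_980, 10_050, 10_210, 9_940, 10_080, 10_005]

print(is_anomalous(daily_row_counts, 10_100))  # an ordinary day
print(is_anomalous(daily_row_counts, 2_300))   # a load that mostly failed
```

A production system would also account for seasonality, trends, and freshness, but the principle is the same: learn what normal looks like, then flag what falls outside it.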

Acceldata's Predictive Model

Acceldata has grander ideas.

Beyond predicting what your data might do, the platform also forecasts what your systems are contemplating.

Running out of storage. Busting your cloud spend budget. Overloading compute resources.

If a cluster is about to hit a limit or a warehouse is blowing through its monthly spend, Acceldata makes sure you're the first to know.
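A capacity forecast of this kind can be approximated with a straight-line trend. The sketch below fits a least-squares line to daily storage usage and estimates how many days remain before a limit is reached. It is a deliberately simple illustration, not Acceldata's forecasting method, and the function name and figures are hypothetical.

```python
def days_until_full(daily_usage_gb, capacity_gb):
    """Estimate days until usage reaches capacity, using a least-squares linear trend.

    Returns None when usage is flat or shrinking.
    """
    n = len(daily_usage_gb)
    if n < 2:
        raise ValueError("need at least two observations to fit a trend")

    xs = range(n)
    x_mean = sum(xs) / n
    y_mean = sum(daily_usage_gb) / n
    slope = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, daily_usage_gb)) / sum(
        (x - x_mean) ** 2 for x in xs
    )
    if slope <= 0:
        return None

    intercept = y_mean - slope * x_mean
    # Day index where the fitted line crosses capacity, measured from the last observation.
    day_full = (capacity_gb - intercept) / slope
    return max(0.0, day_full - (n - 1))


# Hypothetical usage growing by 10 GB per day against a 1,000 GB limit.
print(days_until_full([700, 710, 720, 730, 740], 1000))
```

The same arithmetic applies to a monthly spend budget: swap gigabytes for dollars and the capacity for the budget ceiling.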

Final verdict: Acceldata's expanded predictive models speak to both operational stability and cloud economics. There's real value in that for enterprises where cost and quality are two sides of the same coin.

Acceldata - 10 pts.

Monte Carlo - 7 pts.

Anomaly Detection

Anomaly detection is where Monte Carlo's heritage shows most clearly. This is the part of the session where one platform reacts instinctively, and the other pauses to ask a few more questions.

Monte Carlo's Advantage

Monte Carlo is the industry's benchmark for zero-config anomaly detection. Once connected to your warehouse, it starts flagging data quality issues and anomalies quickly.

Its detection engine is tuned right out of the box. It requires minimal supervision and generally behaves well from day one.

That's why Monte Carlo often wins in fast-deployment environments. The value curve is immediate, and the false-positive curve is manageable.

Acceldata's Approach

Acceldata provides ML-driven anomaly detection, too, but expects a little more hand-holding around enterprise-specific rules and operational tolerances.

In complex enterprise environments, this extra effort can be an advantage. But it does extend the time before the signal becomes trustworthy.

Final verdict: Who likes to wait? Monte Carlo wins here.

Monte Carlo - 10 pts.

Acceldata - 7 pts.

Data Monitoring

Monitoring is where each platform's subconscious priorities show up. What they watch, and what they don't, says more than the marketing copy does.

Acceldata’s Approach

Acceldata treats monitoring as a full-contact sport. It doesn't just watch metadata; it inspects the contents, compares sources, validates targets, and reconciles entire systems with one another.

Data in motion, data at rest, data mid-migration; Acceldata wants eyes on all of it.

Automated reconciliation workflows, cross-system accuracy checks, and pipeline-wide validation give it the depth enterprises typically need when accuracy has legal, financial, or compliance consequences.
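Reconciliation at its simplest means checking that what arrived matches what was sent. The sketch below compares a source and a target by row count and by an order-independent checksum of row contents. It is a generic illustration, not Acceldata's implementation. The function names and sample rows are hypothetical.

```python
import hashlib


def fingerprint(rows):
    """Return (row_count, checksum) for an iterable of row tuples, ignoring row order."""
    count = 0
    checksum = 0
    for row in rows:
        row_hash = hashlib.sha256(repr(row).encode("utf-8")).digest()
        # Summing per-row hashes keeps the result independent of row order.
        checksum = (checksum + int.from_bytes(row_hash[:8], "big")) % 2**64
        count += 1
    return count, checksum


def reconcile(source_rows, target_rows):
    """Compare source and target on row count and content checksum."""
    src_count, src_sum = fingerprint(source_rows)
    tgt_count, tgt_sum = fingerprint(target_rows)
    return {
        "row_count_match": src_count == tgt_count,
        "checksum_match": src_sum == tgt_sum,
    }


# Hypothetical rows: same data in a different order, then one altered value.
source = [(1, "alice", 120.50), (2, "bob", 75.00)]
migrated = [(2, "bob", 75.00), (1, "alice", 120.50)]
corrupted = [(1, "alice", 120.50), (2, "bob", 57.00)]

print(reconcile(source, migrated))
print(reconcile(source, corrupted))
```

Row counts alone would pass the corrupted copy. The content checksum is what catches a value that changed in transit, which is the kind of check that matters when accuracy has financial or compliance consequences.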

Monte Carlo's Approach

Monte Carlo keeps its monitoring focused squarely on the metadata layer.

It works fine for enterprises wanting broad coverage without a lot of ceremony. But when deeper validation is needed, the burden shifts back to the engineers to code and maintain those checks.

Monte Carlo catches the big signals with ease. But the heavy lifts?

That's still you.

Final verdict: Acceldata takes this category for treating data monitoring as a full-stack commitment.

Acceldata - 10 pts.

Monte Carlo - 7 pts.

Final Verdict & Scoring

Total Score:

Monte Carlo - 39 / 50

Acceldata - 45 / 50

Winner: Acceldata.
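For anyone who wants to check the math or re-run it with their own priorities, the totals can be reproduced in a few lines. The category scores below are the ones awarded in this article. The optional `weights` argument is our own addition for illustration and is not part of the scoring used here.

```python
scores = {
    "AI and Agentic Observability": {"Monte Carlo": 7, "Acceldata": 10},
    "Lineage and Root Cause Analysis": {"Monte Carlo": 8, "Acceldata": 8},
    "Predictive Analytics": {"Monte Carlo": 7, "Acceldata": 10},
    "Anomaly Detection": {"Monte Carlo": 10, "Acceldata": 7},
    "Data Monitoring": {"Monte Carlo": 7, "Acceldata": 10},
}


def total_scores(scores, weights=None):
    """Sum category scores per platform, optionally weighting each category."""
    weights = weights or {}
    totals = {}
    for category, by_platform in scores.items():
        weight = weights.get(category, 1)  # unweighted categories count once
        for platform, points in by_platform.items():
            totals[platform] = totals.get(platform, 0) + points * weight
    return totals


print(total_scores(scores))
# Hypothetical reweighting for a team that cares most about anomaly detection.
print(total_scores(scores, weights={"Anomaly Detection": 4}))
```

With equal weights the totals match the scoreboard above. Weight a single category heavily enough and the ranking can flip.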

Our feature-by-feature breakdown highlights a clear pattern: both platforms offer value, but they solve different problems by approaching observability through distinct perspectives.

But all scores aside, the right choice is more about your system and teams than any SaaS platform psychoanalysis we might do.

Think Monte Carlo if…

- Your stack consists mainly of modern cloud analytics

- Your primary concern is data downtime

- You don't want to write rules or tune thresholds

- You prioritize easy and fast over deep customization

Or Acceldata if…

- You run a hybrid or multi-system environment

- You need infrastructure and cost visibility just as much as data quality

- You're managing large-scale migrations

- You operate at petabyte scale, with streaming pipelines or regulatory constraints across multiple systems

Is There a Better Choice?

Both Monte Carlo and Acceldata have strong opinions about how observability should work.

But you shouldn't have to choose which part of the stack gets the therapy it deserves.

Sifflet pulls data quality, lineage, reliability, and AI-driven investigation into one comprehensive approach to data observability.

It doesn't ask whether the root cause lives downstream or upstream.

It assumes every layer is capable of acting out.

If you want an end-to-end data observability platform that treats the whole patient, not just the symptoms or the trauma, start with Sifflet.
